Today we enabled IonMonkey, our newest JavaScript JIT, in Firefox 18. IonMonkey is a huge step forward for our JavaScript performance and our compiler architecture. It has also been a highly focused, year-long project for the IonMonkey team, and we’re super excited to see it land.

SpiderMonkey has a storied history of just-in-time compilers. Throughout all of them, however, we’ve been missing a key component you’d find in typical production compilers, such as those for Java or C++. The old TraceMonkey* and the newer JägerMonkey both had a fairly direct translation from JavaScript to machine code. There was no middle step. There was no way for the compilers to take a step back, look at the translation results, and optimize them further.

IonMonkey provides a brand new architecture that allows us to do just that. It essentially has three steps:

- Translate JavaScript to an intermediate representation (IR).

- Run various algorithms to optimize the IR.

- Translate the final IR to machine code.

We’re excited about this not just for performance and maintainability, but also for making future JavaScript compiler research much easier. It’s now possible to write an optimization algorithm, plug it into the pipeline, and see what it does.
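To make the three steps concrete, here is a minimal sketch in Python of a toy pipeline. It is not IonMonkey's code or its IR: the `Instr` format, the pass names, and the pseudo-assembly are all made up for illustration. What it shows is the shape of the architecture, where an optimization is just a function from IR to IR that can be added to a list of passes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instr:
    """One toy IR instruction: dest = op(args). Hypothetical format."""
    dest: str
    op: str      # "const", "param", "add", or "mul"
    args: tuple


def build_ir(expr):
    """Step 1: flatten a nested expression such as ("add", 2, "x") into IR."""
    ir = []

    def visit(node):
        if isinstance(node, tuple):
            op, lhs, rhs = node
            args = (visit(lhs), visit(rhs))
        elif isinstance(node, int):
            op, args = "const", (node,)
        else:
            op, args = "param", (node,)
        dest = f"v{len(ir)}"
        ir.append(Instr(dest, op, args))
        return dest

    visit(expr)
    return ir


def fold_constants(ir):
    """Step 2, example pass: evaluate add/mul when both operands are constants."""
    consts, out = {}, []
    for ins in ir:
        if ins.op == "const":
            consts[ins.dest] = ins.args[0]
        elif ins.op in ("add", "mul") and all(a in consts for a in ins.args):
            a, b = (consts[x] for x in ins.args)
            value = a + b if ins.op == "add" else a * b
            consts[ins.dest] = value
            ins = Instr(ins.dest, "const", (value,))
        out.append(ins)
    return out


def remove_unused(ir):
    """Step 2, example pass: drop results nobody reads (last instr is the result)."""
    used, kept = {ir[-1].dest}, []
    for ins in reversed(ir):
        if ins.dest in used:
            kept.append(ins)
            if ins.op in ("add", "mul"):
                used.update(ins.args)
    return kept[::-1]


def emit(ir):
    """Step 3: print the final IR as made-up pseudo-assembly."""
    lines = []
    for ins in ir:
        if ins.op == "const":
            lines.append(f"mov {ins.dest}, {ins.args[0]}")
        elif ins.op == "param":
            lines.append(f"load {ins.dest}, [{ins.args[0]}]")
        else:
            lines.append(f"{ins.op} {ins.dest}, {ins.args[0]}, {ins.args[1]}")
    return lines


def compile_expr(expr, passes=(fold_constants, remove_unused)):
    ir = build_ir(expr)
    for run_pass in passes:   # plugging in a new optimization = adding a function here
        ir = run_pass(ir)
    return emit(ir)


print(compile_expr(("add", ("mul", 2, 3), "x")))
```

Calling `compile_expr` with `passes=()` emits all five instructions untouched, which is roughly what a direct translation with no middle step would give you.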

Benchmarks

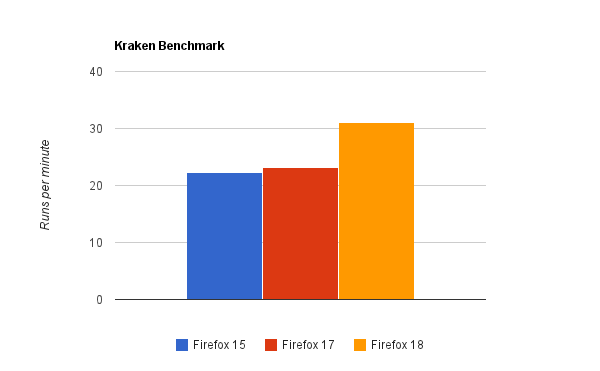

With that said, what exactly does IonMonkey do to our current benchmark scores? IonMonkey is targeted at long-running applications (we fall back to JägerMonkey for very short ones). I ran the Kraken and Google V8 benchmarks on my desktop (a Mac Pro running Windows 7 Professional). On the Kraken benchmark, Firefox 17 runs in 2602ms, whereas Firefox 18 runs in 1921ms, making for roughly a 26% performance improvement. For the graph, I converted these times to runs per minute, so higher is better:

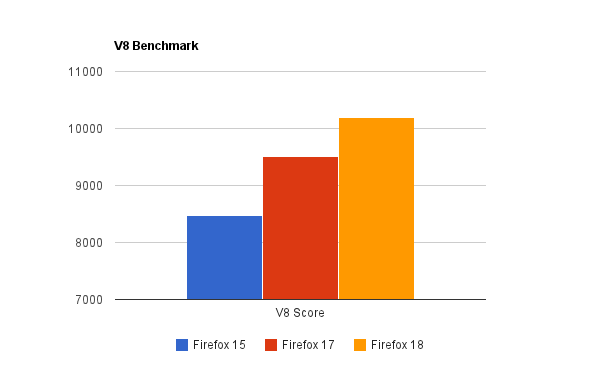

On Google’s V8 benchmark, Firefox 15 gets a score of 8474, and Firefox 17 gets a score of 9511. Firefox 18, however, gets a score of 10188, making it 7% faster than Firefox 17, and 20% faster than Firefox 15.
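For anyone who wants to check the arithmetic, the numbers above can be reproduced with a few lines of Python. The helper names are made up for this sketch. Note that Kraken reports time (lower is better), so the improvement is measured as a reduction in running time, while V8 reports a score (higher is better).

```python
def runs_per_minute(ms_per_run):
    """Convert a benchmark time in milliseconds to runs per minute."""
    return 60_000 / ms_per_run


def percent_time_saved(old_ms, new_ms):
    """Reduction in running time, as a percentage of the old time."""
    return 100 * (old_ms - new_ms) / old_ms


def percent_score_gain(old_score, new_score):
    """Increase in score, as a percentage of the old score."""
    return 100 * (new_score - old_score) / old_score


# Kraken: Firefox 17 vs. Firefox 18
print(round(runs_per_minute(2602), 1))            # 23.1
print(round(runs_per_minute(1921), 1))            # 31.2
print(round(percent_time_saved(2602, 1921), 1))   # 26.2

# V8: Firefox 18 vs. Firefox 17 and Firefox 15
print(round(percent_score_gain(9511, 10188), 1))  # 7.1
print(round(percent_score_gain(8474, 10188), 1))  # 20.2
```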

We still have a long way to go: over the next few months, now with our fancy new architecture in place, we’ll continue to hammer on major benchmarks and real-world applications.

The Team

For us, one of the coolest aspects of IonMonkey is that it was a highly-coordinated team effort. Around June of 2011, we created a somewhat detailed project plan and estimated it would take about a year. We started off with four interns – Andrew Drake, Ryan Pearl, Andy Scheff, and Hannes Verschore – each implementing critical components of the IonMonkey infrastructure, all pieces that still exist in the final codebase.

In late August 2011 we started building out our full-time team, which now includes Jan de Mooij, Nicolas Pierron, Marty Rosenberg, Sean Stangl, Kannan Vijayan, and myself. (I’d be remiss not to mention SpiderMonkey alumnus Chris Leary, as well as 2012 summer intern Eric Faust.) For the past year, the team has focused on driving IonMonkey forward, building out the architecture, and making sure its design and code quality are the best we can make them, all while improving JavaScript performance.

It’s really rewarding when everyone has the same goals, working together to make the project a success. I’m truly thankful to everyone who has played a part.

Technology

Over the next few weeks, we’ll be blogging about the major IonMonkey components and how they work. In brief, I’d like to highlight the optimization techniques currently present in IonMonkey:

- Loop-Invariant Code Motion (LICM), or moving instructions outside of loops when possible.

- Sparse Global Value Numbering (GVN), a powerful form of redundant code elimination.

- Linear Scan Register Allocation (LSRA), the register allocation scheme used in the HotSpot JVM (and until recently, LLVM).

- Dead Code Elimination (DCE), removing unused instructions.

- Range Analysis, eliminating bounds checks (will be enabled after bug 765119).
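To give a flavor of two of the passes in the list above, here is a small Python sketch of redundant code elimination and dead code elimination on a toy IR. It is deliberately much simpler than what IonMonkey does: the value numbering below only handles straight-line code (it is local, not the sparse global algorithm), it does not know that `add` is commutative, and the tuple-based IR and the `PURE_OPS` set are assumptions made for this example.

```python
# Toy IR: a list of (dest, op, args) tuples, each dest assigned exactly once.
PURE_OPS = {"add", "mul"}   # ops with no side effects (assumption for this sketch)


def value_number(ir):
    """Reuse the first result whenever the same pure expression is recomputed."""
    seen = {}    # (op, args) -> dest that first computed it
    alias = {}   # redundant dest -> equivalent earlier dest
    out = []
    for dest, op, args in ir:
        # Rewrite operands that referred to an instruction we removed.
        args = tuple(alias.get(a, a) for a in args)
        key = (op, args)
        if op in PURE_OPS and key in seen:
            alias[dest] = seen[key]
            continue
        if op in PURE_OPS:
            seen[key] = dest
        out.append((dest, op, args))
    return out


def eliminate_dead_code(ir):
    """Walk backwards, keeping side effects and anything a kept instruction reads."""
    live, kept = set(), []
    for dest, op, args in reversed(ir):
        if op not in PURE_OPS or dest in live:
            kept.append((dest, op, args))
            live.update(args)
    return kept[::-1]


ir = [
    ("t0", "add", ("a", "b")),
    ("t1", "add", ("a", "b")),    # same value as t0
    ("t2", "mul", ("t0", "t1")),
    ("t3", "mul", ("a", "a")),    # never used
    ("_", "ret", ("t2",)),
]

optimized = eliminate_dead_code(value_number(ir))
for instr in optimized:
    print(instr)
```

After both passes, three instructions remain: the single `add`, a `mul` that now reads `t0` twice, and the `ret`.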

Of particular note, I’d like to mention that IonMonkey works on all of our Tier-1 platforms right off the bat. The compiler architecture is abstracted to require minimal replication of code generation across different CPUs. That means the vast majority of the compiler is shared between x86, x86-64, and ARM (the CPU used on most phones and tablets). For the most part, only the core assembler interface must be different. Since all CPUs have different instruction sets – ARM being totally different from x86 – we’re particularly proud of this achievement.
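As a rough illustration of that split, here is a Python sketch in which the code generator is written once against a small assembler interface, and only the interface's implementations differ per CPU. The class and method names are invented for this example and are not IonMonkey's actual interface; the emitted text is simplified assembly, just enough to show x86's two-operand `add` next to ARM's three-operand form.

```python
from abc import ABC, abstractmethod


class Assembler(ABC):
    """Hypothetical per-CPU interface: the only part that differs by target."""

    def __init__(self):
        self.code = []

    @abstractmethod
    def load_imm(self, reg, value):
        """Put a constant into register number `reg`."""

    @abstractmethod
    def add(self, dest, src):
        """dest = dest + src, both given as register numbers."""


class X86Assembler(Assembler):
    REGS = ("eax", "ecx")

    def load_imm(self, reg, value):
        self.code.append(f"mov {self.REGS[reg]}, {value}")

    def add(self, dest, src):
        # x86 add takes two operands; the first is both source and destination.
        self.code.append(f"add {self.REGS[dest]}, {self.REGS[src]}")


class ArmAssembler(Assembler):
    REGS = ("r0", "r1")

    def load_imm(self, reg, value):
        self.code.append(f"mov {self.REGS[reg]}, #{value}")

    def add(self, dest, src):
        # ARM add takes three operands: destination and two sources.
        self.code.append(f"add {self.REGS[dest]}, {self.REGS[dest]}, {self.REGS[src]}")


def emit_add_constants(masm, a, b):
    """Shared code generator: written once, runs against any Assembler."""
    masm.load_imm(0, a)
    masm.load_imm(1, b)
    masm.add(0, 1)
    return masm.code


print(emit_add_constants(X86Assembler(), 2, 3))
print(emit_add_constants(ArmAssembler(), 2, 3))
```

Supporting another CPU in this sketch means writing one more `Assembler` subclass; `emit_add_constants` does not change.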

Where and When?

IonMonkey is enabled by default for desktop Firefox 18, which is currently Firefox Nightly. It will be enabled soon for mobile Firefox as well. Firefox 18 becomes Aurora on October 8th, and Beta on November 20th.

* Note: TraceMonkey did have an intermediate layer. It was unfortunately very limited. Optimizations had to be performed immediately and the data structure couldn’t handle after-the-fact optimizations.
