In our last post, we found that the number of installed extensions was a good discriminant of heavier users. In this short follow-up, we’ll delve into the survey data associated with the Beta Interface study. Here is a snapshot of some of the research we’ve been conducting.

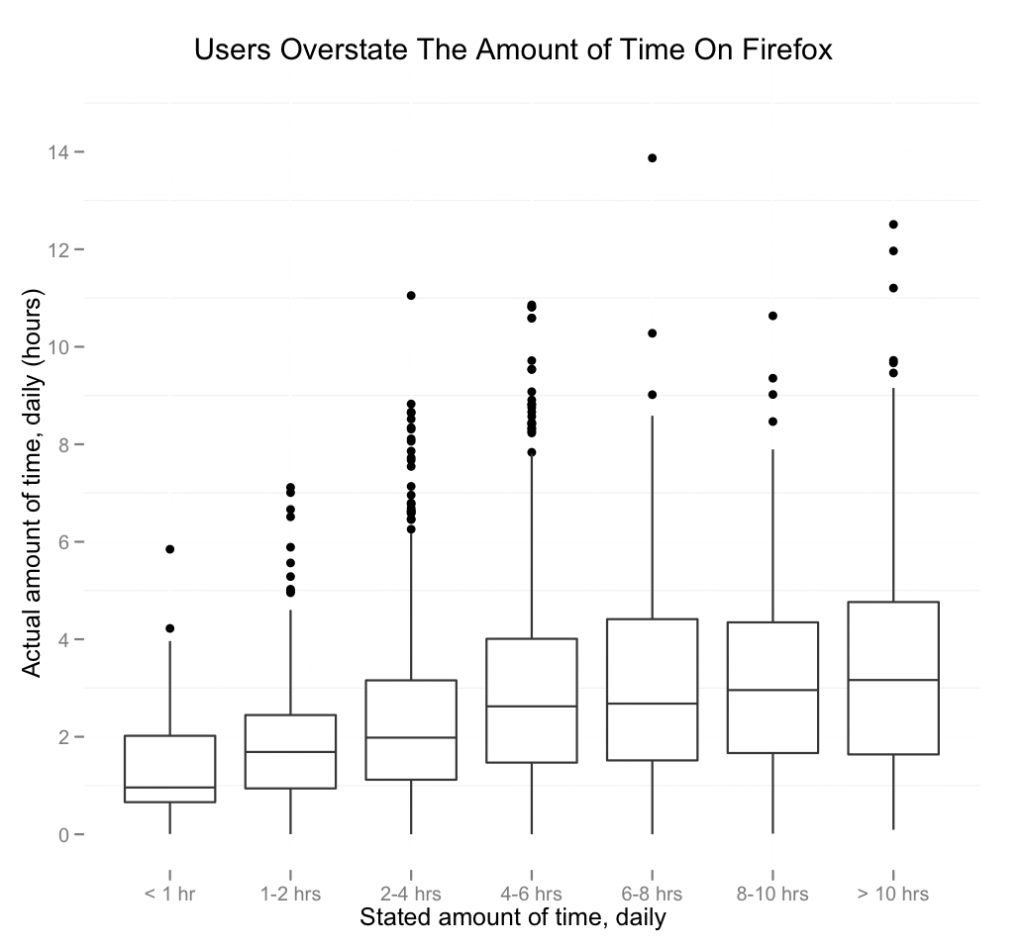

Users overestimate their browsing time

The graph above shows that users tend to overestimate how long they use Firefox. Those who typically use the browser less have a more accurate sense of how long they browse. But for users who state a longer browsing time per day, the measured browser usage is lower than their own estimate.

First, a note about the methodology behind this graphic. We estimate the average daily browsing time by aggregating the session lengths of Test Pilot users over the course of the study. We previously defined a browser session as a continuous period of user activity in the browser, where successive events are separated by no more than 30 minutes. We restrict the analysis to users who state that they use only Firefox, so that time spent in a different primary browser does not skew the comparison.
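To make the session definition concrete, here is a minimal sketch of how event timestamps could be grouped into sessions and turned into an average daily browsing time. It is not the code behind the graph. The function names, the choice to measure a session from its first to its last event, and the choice to average over the number of study days are all assumptions made for illustration.

```python
from datetime import datetime, timedelta

# From the session definition: successive events no more than 30 minutes apart.
SESSION_GAP = timedelta(minutes=30)


def split_sessions(event_times, gap=SESSION_GAP):
    """Group event timestamps into (start, end) sessions.

    A new session starts whenever the gap since the previous event
    exceeds `gap`. Hypothetical helper, for illustration only.
    """
    sessions = []
    start = prev = None
    for t in sorted(event_times):
        if start is None:
            start = prev = t
        elif t - prev > gap:
            sessions.append((start, prev))
            start = prev = t
        else:
            prev = t
    if start is not None:
        sessions.append((start, prev))
    return sessions


def average_daily_minutes(event_times, study_days, gap=SESSION_GAP):
    """Total session length in minutes divided by the number of study days.

    Assumption: session length is last event minus first event, and the
    average is taken over every day of the study, active or not.
    """
    total = sum(
        (end - start for start, end in split_sessions(event_times, gap)),
        timedelta(),
    )
    return total.total_seconds() / 60 / study_days


# Made-up events for one user, not study data.
events = [
    datetime(2024, 1, 1, 9, 0),
    datetime(2024, 1, 1, 9, 10),
    datetime(2024, 1, 1, 9, 35),
    datetime(2024, 1, 1, 11, 0),
    datetime(2024, 1, 1, 11, 20),
]
print(split_sessions(events))
print(average_daily_minutes(events, study_days=2))
```

With these made-up events, the 85-minute pause between 9:35 and 11:00 splits the activity into two sessions of 35 and 20 minutes. Note that a session with a single event has length zero under this sketch, which is one reason a measured total could undercount what a user perceives as browsing time.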

We thought of a few possible explanations for why, among heavier users, the measured time is lower than the stated time. Those users might, for instance, be online and using their computers quite a bit during the day, but have integrated their online workflow with their offline one. Software engineers are a good example: we might expect a programmer to work on a computer all day, leaving the browser open and using it only every once in a while. So there may be a perception of constant browser usage. This certainly rings true for the Metrics team: we’re on our computers almost all day with Firefox open, even when our work is outside the browser. This is, however, only speculation at this point, since we don’t have data about when users are on their computers.

There are still some obvious methodological issues with this approach: a user might, for instance, use Firefox on a work computer (with Test Pilot installed) and on a different computer at home, which could account for the difference. As such, we hope to include a survey question asking “How much time a day do you spend on this computer?” in the next version of the study. At that point, we can update this research.
