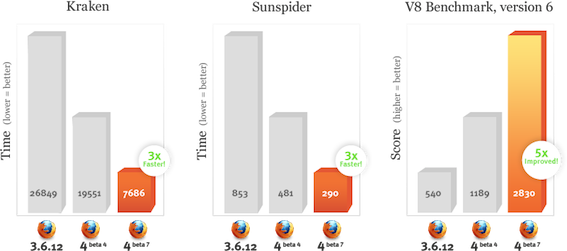

I frequently use the SunSpider and V8 benchmark suites to compare the speed of different versions of TraceMonkey. The best metric for speed comparisons is always execution time. However, measuring execution time on modern machines is unreliable — you get different lots of variation between runs. This is a particular problem in this cae because the run-times of these benchmarks is very small — SunSpider takes less than 700 ms on my laptop, and V8 takes about 6.5 seconds. Run-to-run variations can be larger than the difference I’m trying to measure. This is annoying: the best speed metric cannot be measured exactly.

So I frequently use Cachegrind to measure the number of executed instructions. This is a worse metric than execution time — the number of instructions doesn’t directly relate to the execution time, although it’s usually a good indicator — but it has the advantage that it can be measured exactly. Most of the SunSpider and V8 tests are deterministic, and if I measure them twice in a row I’ll get the same result. Cachegrind also gives instruction counts on a per-function and per-line basis, which is very useful.

So I often run Cachegrind on two different versions of TraceMonkey: an unchanged copy of the current repository tip, and a copy of the current repository tip with a patch applied. I can then compare the results and get a very precise idea of how the patch affects performance.

However, comparing the output of two Cachegrind runs manually is a pain. For example, here is part of Cachegrind’s output (lightly edited for clarity) for crypto-md5.js with an unchanged repository tip (as of a day or two ago):

--------------------------------------------------------------------------------

Ir

--------------------------------------------------------------------------------

48,923,280 PROGRAM TOTALS

--------------------------------------------------------------------------------

Ir file:function

--------------------------------------------------------------------------------

5,638,362 ???:???

4,746,990 /build/buildd/eglibc-2.10.1/string/../sysdeps/i386/i686/strcmp.S:strcmp

2,032,069 jstracer.cpp:js::TraceRecorder::determineSlotType(int*)

1,899,298 jstracer.cpp:bool js::VisitFrameSlots<js::CountSlotsVisitor>(...)

1,759,932 jstracer.cpp:js::TraceRecorder::checkForGlobalObjectReallocation()

1,232,425 jsops.cpp:js_Interpret

885,168 jstracer.cpp:bool js::VisitFrameSlots<js::DetermineTypesVisitor>(...)

871,197 jstracer.cpp:js::TraceRecorder::set(int*, nanojit::LIns*, bool)

812,419 /build/buildd/eglibc-2.10.1/iconv/gconv_conf.c:insert_module

758,034 jstracer.cpp:js::TraceRecorder::monitorRecording(JSOp)

At the top we have the total instruction count, and then we have the instruction counts for the top 10 functions. The ???:??? entry represents code generated by TraceMonkey’s JIT compiler, for which there is no debug information. “Ir” is short for “I-cache reads”, which is equivalent to “instructions executed”.

Cachegrind tracks a lot more than just instruction counts, but I’m only showing them here to keep things simple. It also gives per-line counts, but I’ve omitted them as well.

And here is the corresponding output when a patch from bug 575529 is applied:

--------------------------------------------------------------------------------

Ir

--------------------------------------------------------------------------------

42,332,998 PROGRAM TOTALS

--------------------------------------------------------------------------------

Ir file:function

--------------------------------------------------------------------------------

4,746,990 /build/buildd/eglibc-2.10.1/string/../sysdeps/i386/i686/strcmp.S:strcmp

4,100,366 ???:???

1,687,434 jstracer.cpp:bool js::VisitFrameSlots(js::CountSlotsVisitor&, JSContext*, unsigned int, js::FrameRegsIter&, JSStackFrame*)

1,343,085 jstracer.cpp:js::TraceRecorder::checkForGlobalObjectReallocation()

1,229,853 jsops.cpp:js_Interpret

1,137,981 jstracer.cpp:js::TraceRecorder::determineSlotType(int*)

868,855 jstracer.cpp:js::TraceRecorder::set(int*, nanojit::LIns*, bool)

812,419 /build/buildd/eglibc-2.10.1/iconv/gconv_conf.c:insert_module

755,753 jstracer.cpp:js::TraceRecorder::monitorRecording(JSOp)

575,200 jsscan.cpp:js::TokenStream::getTokenInternal()

It’s easy to see that the total instruction count has dropped from 48.9M to 42.3M, but seeing the changes at a per-function level is more difficult. For a long time I would make this comparison manually by opening the two files side-by-side and reading carefully. Sometimes I’d also do some cutting-and-pasting to reorder entries. The whole process was tedious, but the information revealed is so useful that I did it anyway.

Then three months ago David Baron asked on Mozilla’s dev-platform mailing list if anybody knew of any good differential profiling tools. This prompted me to realise that I wanted exactly such a tool for Cachegrind. Furthermore, as Cachegrind’s author, I was in a good place to understand exactly what was necessary 🙂

The end result is a new script, cg_diff, that can be used to compute the difference between two Cachegrind output files. Here’s part of the difference between the above two versions:

--------------------------------------------------------------------------------

Ir

--------------------------------------------------------------------------------

-6,590,282 PROGRAM TOTALS

--------------------------------------------------------------------------------

Ir file:function

--------------------------------------------------------------------------------

-1,537,996 ???:???

-894,088 jstracer.cpp:js::TraceRecorder::determineSlotType(int*)

-416,847 jstracer.cpp:js::TraceRecorder::checkForGlobalObjectReallocation()

-405,271 jstracer.cpp:bool js::VisitFrameSlots(js::DetermineTypesVisitor&, JSContext*, unsigned int, js::FrameRegsIter&, JSStackFrame*)

-246,047 nanojit/Containers.h:nanojit::StackFilter::read()

-238,121 nanojit/Assembler.cpp:nanojit::Assembler::registerAlloc(nanojit::LIns*, int, int)

230,419 nanojit/LIR.cpp:nanojit::interval::of(nanojit::LIns*)

-226,070 nanojit/Assembler.cpp:nanojit::Assembler::asm_leave_trace(nanojit::LIns*)

-211,864 jstracer.cpp:bool js::VisitFrameSlots(js::CountSlotsVisitor&, JSContext*, unsigned int, js::FrameRegsIter&, JSStackFrame*)

-200,742 nanojit/Assembler.cpp:nanojit::Assembler::findRegFor(nanojit::LIns*, int)

This makes it really easy to see what’s changed. Negative values mean that the instruction count dropped, positive numbers mean that the instruction count increased.

I’ve been using this script for a while now, and it’s really helped me analyse the performance effects of my patches. Indeed, I have some scripts set up so that, with a single command, I can run all of SunSpider through two different versions of TraceMonkey and produce both normal profiles and difference profiles. I can also get high-level instruction comparisons such as the one in this Bugzilla comment.

And now everybody else can use cg_diff too, because I just landed it on the Valgrind trunk. If you want to try it, follow these instructions to setup a copy of the trunk. And note that if you want to compare two versions of a program that sit in different directories (as opposed to profiling a program, modifying it, then reprofiling it) you’ll need to use cg_diff’s –mod-filename option to get useful results. Feel free to ask me questions (via email, IRC or in the comments below) if you have troubles.

Happy differencing!