(“This Week in Glean” is a series of blog posts that the Glean Team at Mozilla is using to try to communicate better about our work. They could be release notes, documentation, hopes, dreams, or whatever: so long as it is inspired by Glean.) All “This Week in Glean” blog posts are listed in the TWiG index.

You’ve heard about this cool Firefox on Glean thing and wish to instrument a part of Firefox Desktop. So you add a metrics.yaml definition for a new Glean metric, commit a piece of instrumentation to mozilla-central, and then Lando lands it for you.

When can you expect the data collected when users’ Firefoxes trigger that instrumentation to show up in a queryable place like BigQuery?

The answer is one of the more common phrases I say when it comes to data: Well, it depends.

In the broadest sense, we’re looking at two days:

- A new Nightly build will be generated within 12h of your commit.

- Users will pick up the new Nightly build fairly quickly after that, and start triggering the instrumentation.

- At the following 4am (local time), a “metrics” ping containing data from your instrumentation will be submitted (or some time later if Firefox isn’t running at 4am).

- A new schema, generated to include your new metric definition, will have been deployed overnight.

- At the following midnight UTC, a new partition of our user-facing stable views will make the previous day’s submissions available.
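The timeline above can be sketched as a small date calculation. This is a simplified model, not how the pipeline actually schedules anything: it assumes every timestamp is UTC (so the client’s local 4am is also 4am UTC), that the Nightly build takes the full 12h, and that users update and trigger the instrumentation as soon as the build exists. It also leaves out the overnight schema deploy, which the steps above expect to have happened by then. The function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def next_time_of_day(after: datetime, hour: int) -> datetime:
    """Return the first moment strictly after `after` whose clock reads `hour`:00."""
    candidate = after.replace(hour=hour, minute=0, second=0, microsecond=0)
    if candidate <= after:
        candidate += timedelta(days=1)
    return candidate


def earliest_queryable(landed: datetime) -> datetime:
    """Rough estimate of when a stable-view partition first holds the new metric.

    Simplifying assumptions (not pipeline guarantees):
    - every time is UTC, including the client's "local" 4am;
    - the next Nightly is built a full 12h after landing;
    - users update to that Nightly and trigger the instrumentation right away.
    """
    nightly_built = landed + timedelta(hours=12)
    # The "metrics" ping goes out at the next 4am after data was recorded.
    ping_sent = next_time_of_day(nightly_built, 4)
    # That submission day's partition shows up at the following midnight UTC.
    return next_time_of_day(ping_sent, 0)


landed = datetime(2021, 11, 22, 9, 0, tzinfo=timezone.utc)
print(earliest_queryable(landed))  # 2021-11-24 00:00:00+00:00
```

Under these assumptions a mid-morning landing becomes queryable about 39 hours later, which is where “two days” comes from.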

And then you commence querying! Easy as that.

Any questions?

The Questions:

What if I added a new metrics.yaml file?

That file needs to land in gecko-dev (the GitHub mirror of mozilla-central) first. Only then can we (and by “we” I mean the Data Team, prompted by a bug you file) update the data pipeline. Then you get to wait until the next weekday’s midnight UTC (ish) for a schema deploy, as in the fourth step above.

Generally this doesn’t add too much delay, but if the file lands after the pipeline folks have gone home, the pipeline update has to wait until they’re back, and the schema deploy then waits for the next weekday’s midnight UTC after that.
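Here is a minimal sketch of the “next weekday’s midnight UTC” rule. It assumes, for illustration only, that schema deploys run at the 00:00 UTC that starts a Monday through Friday and never on weekends; the real deploy schedule may differ. The function name is hypothetical.

```python
from datetime import datetime, timedelta, timezone


def next_schema_deploy(pipeline_updated: datetime) -> datetime:
    """First 00:00 UTC strictly after `pipeline_updated` that starts a weekday.

    Expects a timezone-aware datetime. Assumption for illustration: deploys
    run at midnight UTC at the start of Monday-Friday, never on weekends.
    """
    moment = pipeline_updated.astimezone(timezone.utc)
    deploy = moment.replace(hour=0, minute=0, second=0, microsecond=0) + timedelta(days=1)
    while deploy.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        deploy += timedelta(days=1)
    return deploy


# A Friday-afternoon pipeline update rolls over the weekend to Monday.
print(next_schema_deploy(datetime(2021, 11, 26, 15, 0, tzinfo=timezone.utc)))
# 2021-11-29 00:00:00+00:00
```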

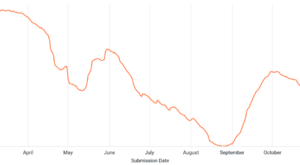

The Nightly population is small and weird. How long until we get data from release?

Uptake of code to Release takes a while longer. Code lands in mozilla-central and gets into the next Nightly within 12h. Getting to Beta from Nightly means waiting until the next merge day (these days that’s on the first Monday of the month, or thereabouts). Getting to Release from Beta means waiting until the merge day after that.

If you’re unlucky, you’ll be waiting over two months for your instrumentation to be in a Release build that users can pull down.

And then you get to wait for enough Release users to update that you’re getting a representative sample. (This could take a week or so.)

So… nine weeks?
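The nine weeks is just the sum of the waits above. As a sketch, assuming merge days are about four weeks apart and taking “a week or so” of Release uptake at face value (the function and its parameters are hypothetical):

```python
def slow_path_weeks(weeks_until_next_merge: float,
                    cycle_weeks: float = 4.0,
                    uptake_weeks: float = 1.0) -> float:
    """Approximate weeks from landing in Nightly to a representative Release sample.

    Assumes merge days are `cycle_weeks` apart and that Release users take
    `uptake_weeks` to update. Both defaults are rough figures, not a schedule.
    """
    # Nightly -> Beta at the next merge day, Beta -> Release one full cycle
    # later, then wait for Release users to pick up the update.
    return weeks_until_next_merge + cycle_weeks + uptake_weeks


print(slow_path_weeks(4.0))  # 9.0: landed right after a merge day
```

The difference between landing right after a merge day (`slow_path_weeks(4.0)`) and right before one (`slow_path_weeks(0.0)`) is the full four-week cycle.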

That sounds really bad! Is there anything we can do?

Why yes.

The first thing we can do is adjust our expectations. There’s a four-week swing from the worst case to the best case on this slow path. It isn’t likely that you’ll always be landing instrumentation immediately after a merge day and have to wait the whole month until Nightly merges to Beta.

Your average wait for that is only two weeks. And the best case is a matter of a day or two.
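The two-week average follows if you assume your landing date is equally likely to fall anywhere in a four-week cycle, so that the wait W until the next merge day is uniform between 0 and 4 weeks (an idealization, since real merge days drift a little):

$$
\mathbb{E}[W] = \int_0^4 w \cdot \frac{1}{4}\,dw = \frac{1}{4} \cdot \frac{4^2}{2} = 2 \text{ weeks}
$$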

So cross your fingers, and hope your luck is with you.

Secondly, instrumentation is (by itself) very low-risk, so you can “uplift” the instrumentation change directly to Beta without waiting for merge day.

This can cut your route to Release down to _two weeks_: for example, by landing in Nightly on Monday Nov 22, verifying that it works on Tuesday, requesting uplift on Wednesday, getting uplifted into the last Beta on Thursday Nov 25, then riding the merge from Beta to Release on Monday Dec 6.
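If you want to double-check the calendar arithmetic in that example, Python’s `datetime` module will do it. The year isn’t stated above; this sketch assumes 2021, a year in which Nov 22 falls on a Monday.

```python
from datetime import date

# Dates from the uplift example, assuming the year is 2021.
landed_in_nightly = date(2021, 11, 22)
uplifted_to_beta = date(2021, 11, 25)
beta_to_release_merge = date(2021, 12, 6)

# Print the weekday of each milestone to check it against the schedule.
for label, day in [("landed", landed_in_nightly),
                   ("uplifted", uplifted_to_beta),
                   ("merged", beta_to_release_merge)]:
    print(label, day.strftime("%A %b %d"))

# Elapsed time from landing in Nightly to the Beta-to-Release merge.
elapsed = beta_to_release_merge - landed_in_nightly
print(elapsed.days, "days")  # 14 days, i.e. two weeks
```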

(You do still get to wait a third week for the release population to update to the latest version.)

Thirdly, what are the chances that your instrumentation is measuring a feature you just built or just turned on? You want that feature to benefit from the slow-roll exposure to the more tolerant audiences of Nightly and Beta before it reaches Release, right? Automated testing is great, but nothing can simulate the wild variety of use cases and hardware combinations your feature will experience in the Real World.

So what’s the point of getting your instrumentation into Release before the feature under instrumentation reaches it? Instead of measuring the interval between landing instrumentation and beginning analysis, perhaps measure the interval between the release of the feature you wish to instrument and beginning analysis?

That interval is only a day: gotta wait for that partition in the stable view. Sounds much better, doesn’t it?

Still, can I get data any faster?

The fastest time from Point A) Landing a metric, to Point B) Performing preliminary analysis on a metric, is about 12h:

1) Land your code just before a new Nightly is cut.

2) Hope that the number of Nightly users that update to the latest build over the next twelve hours is enough for your purposes.

If you didn’t luck out and catch a schema deploy, you’ll need to dig your data out of the `additional_properties` JSON column. If you did, you can use the friendly columns instead.
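Before the schema deploy, your metric’s values sit in `additional_properties` as a JSON string. Here is a minimal sketch of pulling one value out of such a string, assuming it mirrors the Glean payload layout of `metrics`, then metric type, then `category.name`, then value. The metric `my_feature.clicks` and the sample string are hypothetical; inside a query, BigQuery’s own JSON functions (such as `JSON_VALUE`) can do the same job.

```python
import json
from typing import Any, Optional


def extract_metric(additional_properties: Optional[str],
                   metric_type: str, metric_name: str) -> Optional[Any]:
    """Pull one metric value out of an `additional_properties` JSON string.

    Assumes the unrecognized fields keep the Glean payload shape:
    {"metrics": {"<metric type>": {"<category.name>": value}}}.
    Returns None when the column is empty or the metric is absent.
    """
    if not additional_properties:
        return None
    payload = json.loads(additional_properties)
    return payload.get("metrics", {}).get(metric_type, {}).get(metric_name)


# Hypothetical column value for a counter metric that isn't in the schema yet.
row = '{"metrics": {"counter": {"my_feature.clicks": 3}}}'
print(extract_metric(row, "counter", "my_feature.clicks"))  # 3
```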

To get to the data before the nightly copy-deduplicate to stable views, you’ll be querying the live tables instead. You need to fully qualify that table name. You need to remember that nothing in here has been deduplicated yet. And you need to take narrow slices, because we can’t cluster the data effectively here, so querying can get expensive, fast.
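Those three rules can be sketched as a small query builder. The fully-qualified table name below is an assumption (check the real project and dataset names before using it), and deduplicating on `document_id` is a stand-in for what copy-deduplicate does, not its actual implementation. The function name is hypothetical.

```python
from datetime import datetime, timedelta

# Assumed fully-qualified live table for Firefox Desktop "metrics" pings.
LIVE_TABLE = "moz-fx-data-shared-prod.firefox_desktop_live.metrics_v1"


def live_table_query(start: datetime, hours: int = 1) -> str:
    """Build a BigQuery SQL string over a narrow slice of the live table.

    - The table name is fully qualified (project.dataset.table).
    - Only a few columns and a short time window are read, to limit cost.
    - Duplicate submissions are dropped by keeping one row per document_id.
    """
    end = start + timedelta(hours=hours)
    return f"""
SELECT document_id, submission_timestamp, additional_properties
FROM `{LIVE_TABLE}`
WHERE submission_timestamp >= TIMESTAMP('{start:%Y-%m-%d %H:%M:%S}')
  AND submission_timestamp < TIMESTAMP('{end:%Y-%m-%d %H:%M:%S}')
QUALIFY ROW_NUMBER() OVER (
  PARTITION BY document_id ORDER BY submission_timestamp
) = 1
""".strip()


print(live_table_query(datetime(2021, 11, 23, 4, 0)))
```

Selecting only the columns you need matters as much as the time window: BigQuery bills by bytes scanned, and live-table rows are wide.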

Can I get data that quickly from release?

Not yet.

I’ve seen a proposal internally for dynamically defined metrics which get pushed to running Firefox instances (talk to :esmyth if you’re interested). Though in its present form it proposes the process and the possibility rather than the technology, I can see a version of this that would (for a subset of data collection) take the time from “I wish to instrument this” to “I am performing analysis on data received from (a subset of) the release Firefox population” down to within a business day.

Which is neat! But that speed brings risk, so it’ll take a while to design a system that doesn’t expose our users to that risk.

Don’t expect this for Christmas, is I guess what I mean : )

:chutten

(( This post is a syndicated copy of the original. ))