Are we slim yet?

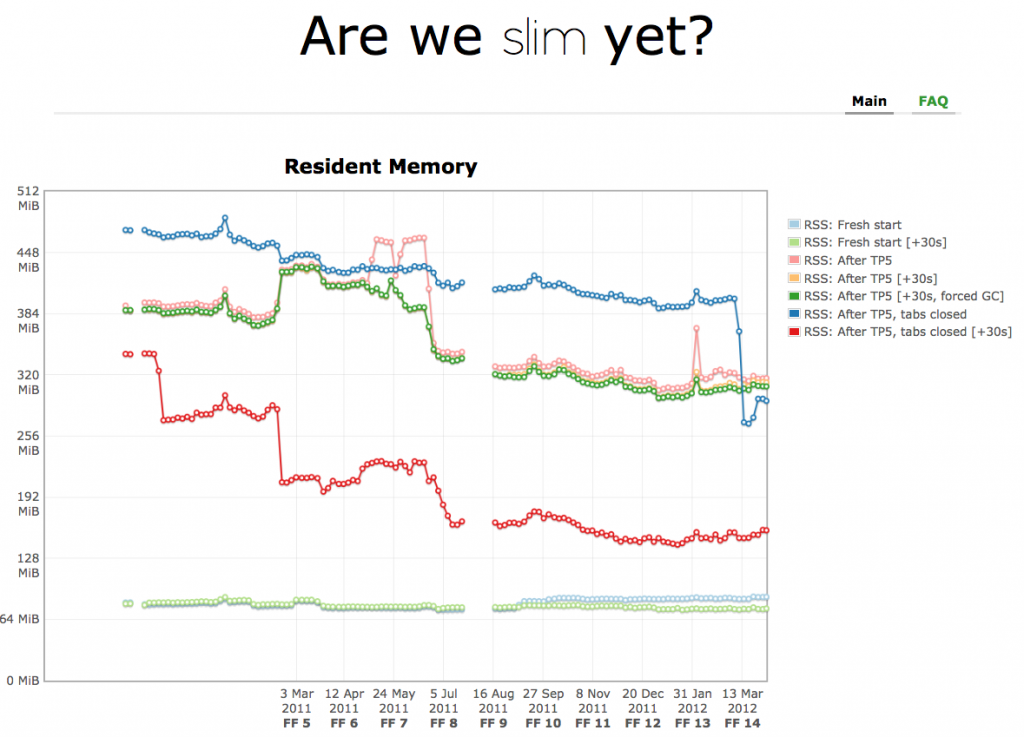

John Schoenick’s areweslimyet.com (AWSY) has had its password removed and is now open to the public!

This is a major milestone for the MemShrink project. It shows the progress we have made (MemShrink started in earnest in June 2011) and will let us identify regressions more easily.

John’s done a wonderful job implementing the site. It has lots of functionality: there are multiple graphs, you can zoom in on parts of graphs to see more detail, and you can see revisions, dates and about:memory snapshots for individual runs.

John has also put in a great deal of work refining the methodology to the point where we believe it provides a reasonable facsimile of real-world browsing; please read the FAQ to understand exactly what is being measured. Many thanks also to Dave Hunt and the QA team for their work on the Mozmill Endurance Tests, which are at the core of AWSY’s testing.

Update: Hacker News has reported on this.

Ghost windows

Frequent readers of this blog will be familiar with zombie compartments, which are JavaScript compartments that have leaked due to defects in Firefox or add-ons. Windows (i.e. window objects) can also be leaked, and often defects that cause compartment leaks will cause window leaks as well.

Justin Lebar has introduced the notion of “ghost windows”. A ghost window is one that meets the following criteria.

- Shows up in about:memory under “window-objects/top(none)”.

- Does not share a domain name with any window under “window-objects/active”.

- Has met the first two criteria for a moderate amount of time (e.g. two minutes).

The basic idea is that a ghost window has a high chance of representing a genuine leak, and this automated identification of suspicious windows will make leak detection simpler. Justin has added ghost window tracking to about:memory, about:compartments, and telemetry. (These three bugs were all marked as MemShrink:P1.) Ghost window tracking is mostly untested right now, but hopefully it will become another powerful tool for finding memory leaks.
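The ghost window criteria can be sketched as a simple predicate. This is only an illustration of the definition given here, not Firefox's actual implementation: the `WindowRecord` type, its field names, and the `is_ghost_window` function are hypothetical, and the two-minute threshold is taken from the "e.g." in the criteria.

```python
from dataclasses import dataclass

# Assumed threshold: "a moderate amount of time (e.g. two minutes)".
GHOST_THRESHOLD_SECONDS = 120.0


@dataclass
class WindowRecord:
    """Hypothetical summary of one window as reported by about:memory."""
    domain: str
    is_top_none: bool        # listed under "window-objects/top(none)"
    seconds_eligible: float  # how long the first two criteria have held


def is_ghost_window(window, active_domains, threshold=GHOST_THRESHOLD_SECONDS):
    """Return True if the window meets all three ghost window criteria."""
    # Criterion 1: the window appears under "window-objects/top(none)".
    if not window.is_top_none:
        return False
    # Criterion 2: no window under "window-objects/active" shares its domain.
    if window.domain in active_domains:
        return False
    # Criterion 3: the first two criteria have held for long enough.
    return window.seconds_eligible >= threshold


active = {"mozilla.org"}
suspect = WindowRecord(domain="example.com", is_top_none=True, seconds_eligible=300.0)
print(is_ghost_window(suspect, active))  # True
```

The time threshold is what separates a window that is merely waiting to be garbage collected from one that is likely to be a genuine leak.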

Add-ons

We’ve been tracking leaky add-ons in Bugzilla for a while now, but we’ve never had a good product/component to put them in. David Lawrence, Byron Jones, Stormy Peters and I have together created a new “Add-ons” component under the “Tech Evangelism” product. The rationale for putting it under “Tech Evangelism” is that it nicely matches the existing meaning of that phrase — it’s a case where a non-Mozilla entity is writing defective code that interacts with Firefox and hurts users’ experiences with and perceptions of Firefox, and Mozilla can only inform, educate and encourage fixes in that defective code. This component is only intended for certain classes of common defects (such as leaks) that Mozilla contributors are tracking. It is not intended for vanilla add-on bugs; as now, they should be reported through whatever bug-reporting mechanism each add-on uses. I’ve updated the existing open bugs that track leaky add-ons to use this new component.

Leaks in the following add-ons were fixed: Video DownloadHelper (the 2nd most popular add-on on AMO!), Scrapbook Plus, Amazon Price Tracker.

Bug counts

This week’s bug counts:

- P1: 21 (-5/+0)

- P2: 137 (-1/+7)

- P3: 90 (-1/+3)

- Unprioritized: 1 (-1/+1)

Good progress on the P1 bugs!

A new reporting schedule

Many of the weekly MemShrink reports lately have been brief. From now on I plan to write a report every two weeks. This will make things easier for me and will also ensure each report is packed full of interesting things. See you again in two weeks!

36 replies on “MemShrink progress, week 42”

Oh! no.. i was an ardent follower of Memshrink report! but the two weeks could be spicy 😉 . Keep it up!

Great job! Thank you all!

Congrats on getting the site up! Sadly, it’s just saying “An error occured while loading the graph data (./data/areweslimyet.json)” for me…

Nooooooo… I waited the whole week for this report to learn that I will now need to wait twice as long from now on to get more awesomeness. D’oh!

I think we all feel a bit like liberforce, or at least I do too.

Thank you all for the excellent work!

This Connection is Untrusted

You have asked Firefox to connect securely to areweslimyet.com, but we can’t confirm that your connection is secure.

———

Nooooooooo! Fortnightly reports sound dangerously like an excuse to slow momentum. If weekly reports have been brief, time to speed up progress!? On the other hand, maybe everyone’s getting into the really medium/long haul bug fixes?

Aaah, it just gives that error on the old browser I’m stuck with at work. Never mind!

Would it be possible to enhance about:memory way further to track down memory usage down to addon-level?

It’s impossible to get any further information about addons interacting with each other in a mess like this:

604.24 MB (100.0%) — explicit

├──387.55 MB (64.14%) — js

│ ├──161.51 MB (26.73%) — gc-heap-decommitted

│ ├──157.27 MB (26.03%) — compartment([System Principal], 0x8978000)

│ │ ├───88.80 MB (14.70%) — gc-heap

│ │ │ ├──37.12 MB (06.14%) — objects

│ │ │ │ ├──27.32 MB (04.52%) — non-function

│ │ │ │ └───9.80 MB (01.62%) — function

│ │ │ ├──15.59 MB (02.58%) — arena

│ │ │ │ ├──14.78 MB (02.45%) — unused

│ │ │ │ └───0.82 MB (00.14%) — (2 omitted)

│ │ │ ├──14.38 MB (02.38%) — shapes

│ │ │ │ ├───6.74 MB (01.12%) — tree

│ │ │ │ ├───5.51 MB (00.91%) — dict

│ │ │ │ └───2.12 MB (00.35%) — (1 omitted)

│ │ │ ├──12.77 MB (02.11%) — scripts

│ │ │ ├───6.19 MB (01.02%) — strings

│ │ │ └───2.74 MB (00.45%) — (2 omitted)

│ │ ├───29.10 MB (04.82%) — script-data

│ │ ├───14.41 MB (02.39%) — shapes-extra

…

Great job thus far. Your work and the work of snappy is probably the biggest reason why Firefox of today is more awesome than the Firefox of 6 months ago.

I hope that MemShrink and Snappy will be built into the testing process going forward and that all additions to the code are made with those aspects taken into consideration. It really should be a mantra.

As the web matures and feature parity is reached among browsers, performance and stability will once again come to the fore.

My question for the team is this. I am a big player of Facebook Flash games and I have watched the memory usage during play. It is not uncommon to see 1.5 GB of memory usage while playing something like Cityville.

Now run the following scenario: a blank tab uses 55 MB. Open Cityville in that tab, and Firefox climbs to 250 MB and plugin container to 800 MB. Open another new tab and close the Cityville tab, and Firefox goes to 78 MB while plugin container goes to 32 MB and stays there. Why is the plugin container not closing?

One of the Tom’s Hardware tests is to see how much memory browsers release. Closing the plugin container when not in use can give you 32 MB back.

Just a thought…

From week 35:

It probably didn’t make the February 16 transition to Aurora, but it should be in Aurora since March the 13th.

See [1]. This was blocked forever on a really complex change [2], but maybe we’ll make some progress on it now.

[1] https://bugzilla.mozilla.org/show_bug.cgi?id=501485

[2] https://bugzilla.mozilla.org/show_bug.cgi?id=90268

Congratulations on getting areweslimyet.com out the door, so to speak… 🙂

Just one minor thing – is there a way to zoom out of the graphs once I’ve zoomed into them?

Not that I know of. Just reload the page 🙂

click zoom out in the top right corner

Just a small nit: Those abbreviations on the x-axis should be Fx, not FF

@Leak: There is a zoom out link at the top-right of the graph.

The heap-unclassified numbers after TP5 seem to be pretty high on Mac. Hopefully, more accurate reporters will be coming soon to bring them down.

I’ve been doing some experimenting on behavior that would seem to be a bit more difficult to track than the usual methods. Since I’ve moved to using the Nightly build for almost 100% of my browsing, I can leave another version (currently the release version) open and watch how it behaves when not interacting with anything else at all.

Basically, I open the browser up to some test page (mainly using front page of Ars Technica), and then don’t touch the browser at all for a few days; just check on what’s going on in memory periodically. I find that there tends to be a small gradual buildup of memory used if it’s run in safe mode (most of which is released if you run GC from about:memory), and a large gradual buildup of memory used when in normal mode with my addons (which is mostly not released if you run GC from about:memory).

One run increased from ~115 MB at start to 650 MB after 3 days of no use whatsoever. (note: those numbers were from Win7’s Resource Manager, and counting Private Bytes only, to avoid any potential memory/libraries shared with other instances of the browser)

Obviously this is difficult to test since it takes several days per sample, however it seems from an initial view to be an indicator of a common, annoying behavior issue — of memory buildup over time with no explicitly understandable cause (though clearly tied to addons rather than the browser itself, for the most part).

The Are We Slim Yet testing looks fine for finding localized and immediate issues, but its window of “long-term” events is only about 30 seconds. My 115 to 650 MB sample would only grow by ~0.06 MB in 30 seconds (assuming it was linear across the entire run), which is at the noise level in AWSY’s tests.
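As a rough check of that estimate, here is a minimal sketch of the arithmetic, assuming growth is perfectly linear over the 3-day run. The function name `growth_in_window` is just for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def growth_in_window(start_mb, end_mb, run_days, window_seconds):
    """Memory growth expected inside a short window, assuming linear growth."""
    rate_mb_per_second = (end_mb - start_mb) / (run_days * SECONDS_PER_DAY)
    return rate_mb_per_second * window_seconds


# 115 MB at start, 650 MB after 3 days, observed through a 30-second window.
growth = growth_in_window(115, 650, 3, 30)
print(f"{growth:.3f} MB")  # 0.062 MB
```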

For testing addon leaks, is consideration given to what happens over very long periods of time? What they’re doing that may seem inconsequential in a short test, but may be problematic after several days?

David

One reason for your steadily growing memory usage could be RSS subscriptions in your bookmarks or toolbars. I reported this as bug 692748, which is sitting at P2, apparently waiting for improvements to about:memory.

You could try to do some before and after comparison of the about:memory page, just to see where the memory disappears to. It might however just be going into heap-unclassified, which doesn’t really help all that much in determining why.

Slow leaks are definitely interesting, but they’re also really hard to find, test and diagnose. There are no easy answers there.

If you can selectively disable add-ons and identify one (or multiple) add-ons that are responsible for most of the excess memory build-up, that would be very useful. If you can see anything suspicious in about:memory at the same time, that would be even better!

awsy doesn’t match the memory usage I’m seeing on a daily basis.

Memory usage is highly dependent on what pages you’re loading and how you’re browsing. No benchmark is going to match everyone’s usage, but the hope is that it emulates enough of a real-world scenario that it can keep us from regressing memory usage in the average case.

Every Memshrink post, I always see leaks being plugged in addons. It’s like the memshrink team in addition to tackling the main firefox code is fighting a thousand small battles with the addons.

It’s the AMO reviewers who deserve the credit here. The AMO review policy was changed so that leaks are now explicitly looked for, and they’re finding plenty!

AMO reviewers did not look for leaks until recently? Oh joy of joys, the story of how poorly Mozilla has managed Firefox for many years just gets increasingly more amusing/dire.

A potted history of Mozilla’s approach to developing an efficient modern browser:

1) Open the majority of the browser’s innards to extension authors with no consideration for memory/performance impact

2) Deny there is any memory or performance problem. Or “it’s not a bug, it’s a feature” if I remember Ben Goodger’s blog entry correctly

3) Break the browser’s memory management (garbage collection in particular) whilst throwing in every HTML5 feature (whether the standard has settled or not) but the kitchen sink when developing version 4

4) Do nothing about the problem until competition (Chrome) knocks their socks off

5) Lose (or fail to gain) huge chunks of market share getting back to pre-version 4 memory performance 9 months after starting to take the memory usage/performance issue seriously (MemShrink)

6) Start developing new add-on APIs (JetPack) which actually have some small concept of memory management and limiting the ability of authors to take over each and every one of the browser’s functions

7) Two years after competition (Chrome) kicks their caboose finally start addressing UI performance issues (Snappy) instead of merely copying the competition’s (Chrome) UI left, right and centre.

8) Belatedly and slowly fix various memory leak issues with the new add-on API (JetPack) itself (not add-ons, the bloody underlying API itself!) …

9) Realise that the very frankenstein (Web 2/HTML5) they pimp vociferously actually doesn’t work very well in their own browser

10) Eight years into the product’s lifespan, decide that reviewing add-ons for memory leaks might just be a nifty idea instead of blaming add-on developers for breaking the product

Hindsight is bliss but the above sure seems like one way history can be interpreted and it’s not pretty! Undoubtedly I’ve forgotten some ‘highlights’ as well.

All power to MemShrink, Snappy, hard-working AMO reviewers, the Jetpack team and others. At least Mozilla seems to be on a good track (despite the ongoing cloning of the Chrome UI) through focusing on the underlying core problems of Firefox. The above should be taken with the old maxim that if we don’t learn from mistakes, we’re bound to repeat them, in mind.

Long live the ‘fox and all who contribute to creating him.

Lurv that super-fluffy tail!

pd: I’m sick of your whinging rants. You’ve been polluting my blog and countless other Mozilla blogs with them for too long. I’ve had a silent policy for a couple of months to not respond to your comments, but that hasn’t made any difference. From now on I’m going to delete comments of yours that I decide are unhelpful. Given your track record, that will be most of them.

Hmm, I touched a nerve! I guess you could take this post in more than one way. Seems you’ve taken it poorly. That’s a shame because although you don’t seem to give me the credit, amongst my critical posts, I think there’s been a lot of support for your work. In fact I have tended to have a policy of encouragement at the end of my posts regardless of how critical they may be.

Regardless, those who do not learn from the past are doomed to repeat it. There’s a clear lack of public analysis from, or of, Mozilla’s track record WRT snappy/perf/memshrink (call it what you like). It’s rather delusional to expect to get all positive and fluffy feedback from your posts. This particular post of mine was more colourful than scientific and dispassionate. Well, forgive me for having passion for Mozilla that might not agree with your particular set of politically correct guidelines!

Perhaps I should adopt the “meh, Firefox? Switched to Chrome years ago” slashdot troll style of commentary?

FWIW, I don’t seem to get many people replying to my comments in disagreement. Have a think about that!

AWSY looks really interesting. One thing that worries me about all memory statistics from the last few years is that Firefox 3.6 and earlier are permanently ignored and unmeasured, even though 3.0, 3.5 and 3.6 were the versions that inspired the MemShrink project and are often cited as the ‘good old days’. Is it that hard to include these older versions?

TBH even at the release of FF 4, nobody wanted to make a comparison. The only stats ever posted online were FF 4 vs latest trunk; for me this has also been a split up in FF’s memory usage pattern.

The older versions are harder to measure. John had to jump through a few hoops to get measurements back as far as he did. I once tried measuring 3.6 on MemBench (http://gregor-wagner.com/tmp/mem) and it crashed halfway through 🙁

Is there some way an end user can get a copy of the test sites used in the tp5 benchmark (or can I request additional older Fx builds are run through the tests)? Basically I just want to run some historical comparisons to plot Firefox’s changing memory usage since version 1.0, plus graph the speed differences on a few benchmarks. People seem to have forgotten that 1.0 really wasn’t much faster than Internet Explorer 6.

You’ll have to ask John Schoenick about the Tp5 sites.

Note that the older the version is, the less fair the comparison is, because modern pages will use lots of features that the older browsers don’t support.

An interesting comparison of JS performance is shown here:

http://www.page.ca/~wes/SpiderMonkey/Perf/sunspider_history.png

Here’s how the SpiderMonkey versions correlate to Firefox versions:

1.5 == FF 1

1.6 == FF 1.5

1.7 == FF 2

1.8 == FF 3

1.8.1 == FF 3.5

1.8.2 == FF 3.6

1.8.5 == FF 4

Sunspider is a crappy benchmark in a lot of ways, but it’s still an interesting graph 🙂 And performance is even better now than it was in FF4.

Performance of JS seems to be increasingly becoming a scale issue rather than a speed issue. I’m not an expert by any stretch of the imagination; however, whilst worker threads might be helping make computationally extreme tasks workable in JS, the more the word gets out about what JS can do, the more people use it and browsers in turn choke. As an example, if using Gmail and one Google Docs page, what chance is there that a third JS-heavy page will perform in a zippy manner? If that other page contains video, the odds become worse. Perhaps this is all a Snappy issue but it seems like a time-honoured issue with browsers. We’re all told to ‘bet on JS’; however, nobody suggests where a reasonable ceiling might be. Nobody discusses JS scaling. FWIW I’m not suggesting there is a great alternative. There may be but I wouldn’t know. I’m just wondering if a more balanced view of JavaScript’s capabilities is needed? I’m a webdev and I don’t know of *any* tools or guides that address this question.

I’ve noticed that heap-unclassified seems to go up quite a bit for every HTML5 YouTube video open. Closing the videos does seem to release it again (not entirely positive though), so I’m guessing this is the result of a missing memory reporter somewhere? Interestingly, it seems to be related to the player size (changing player size from small to large or vice versa results in heap-unclassified => 35,721,898 B (22.57%) -> 59,855,742 B (32.83%)).

Should I file a bug for this? Congrats on all the progress made so far BTW, you’ve come a long way.

Please do file a bug, and CC me. Clear steps to reproduce would be really helpful, too. Thanks!

Bug has been filed: https://bugzilla.mozilla.org/show_bug.cgi?id=744733