(“This Week in Glean” is a series of blog posts that the Glean Team at Mozilla is using to try to communicate better about our work. They could be release notes, documentation, hopes, dreams, or whatever: so long as it is inspired by Glean. You can find an index of all TWiG posts online.)

One of my favorite tasks that comes up in my day-to-day adventures at Mozilla is a chance to work with the data collected by this amazing Glean thing my team has developed. This chance often arises when an engineer needs to verify something, or a product manager needs a quick question answered. I am not a data scientist (and I always include that caveat when I provide a peek into the data), but I do understand how the data is collected, ingested, and organized, and I can often guide people to the correct tools and techniques to find what they are looking for.

In this regard, the challenges I encounter when reading or analyzing data are often related to another common task of mine: advising engineering teams on how we intend Glean to be used and what metric types would best suit their needs. A recent example of this was a quick Q&A for a group of mobile engineers who all had similar questions. My teammate chutten and I were asked to explain the differences between Counter Metrics and Event Metrics, and to help them understand the situations where each is the most appropriate to use. It was a great session and I felt like the group came away with a deeper understanding of the Glean principles. But, thinking about it afterwards, I realized that we do a lot of hand-wavy things when explaining why not to do things. Even in our documentation, we aren’t very specific about the overhead of things like Event Metrics. For example, from the Glean documentation section regarding “Choosing a Metric Type” in a warning about events:

“Important: events are the most expensive metric type to record, transmit, store and analyze, so they should be used sparingly, and only when none of the other metric types are sufficient for answering your question.”

This is sufficiently scary to make me think twice about using events! But what exactly do we mean by “they are the most expensive”? What about recording, transmitting, storing, and analyzing makes them “expensive”? Well, that’s what I hope to dive into a little deeper with some real numbers and examples, rather than using scary hand-wavy words like “expensive” and “should be used sparingly”. I’ll mostly be focusing on events here, since they contain the “scariest” warning. So, without further ado, let’s take a look at some real comparisons between metric types, and what challenges someone looking at that data may encounter when trying to answer questions about it or with it.

Our claim is that events are expensive to record, store and transmit, so let’s start by examining that a little closer. The primary API surface for the Event Metric Type in Glean is the record() function. This function also takes an optional collection of “extra” information in a key-value shape, which is supposed to be used to record additional state that is important to the event. The “extras”, along with the category, name, and (relative) timestamp, make up the data that gets recorded, stored, and eventually transmitted to the ingestion pipeline for storage in the data warehouse.
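As a rough illustration of what a single record() call captures, here is a minimal toy model in Python. It is not the Glean SDK: `ToyEventMetric`, its constructor arguments, and the millisecond helper parameters are hypothetical names I made up to mirror the category, name, relative timestamp, and extras described above.

```python
import json
import time


class ToyEventMetric:
    """Hypothetical stand-in for an event metric; NOT the Glean SDK API."""

    def __init__(self, category, name, store, start_ms=None):
        self.category = category
        self.name = name
        self.store = store  # shared list playing the role of event storage
        # Timestamps are kept relative to this starting point (milliseconds).
        self.start_ms = self._now_ms() if start_ms is None else start_ms

    @staticmethod
    def _now_ms():
        return time.monotonic_ns() // 1_000_000

    def record(self, extra=None, now_ms=None):
        now_ms = self._now_ms() if now_ms is None else now_ms
        event = {
            "category": self.category,
            "name": self.name,
            "timestamp": now_ms - self.start_ms,
        }
        if extra:
            # Extras are kept as string key-value pairs.
            event["extra"] = {key: str(value) for key, value in extra.items()}
        # Every call appends one more complete record.
        self.store.append(event)


store = []
entered_url = ToyEventMetric("events", "entered_url", store, start_ms=0)
entered_url.record({"autocomplete": "false"}, now_ms=33191)
entered_url.record({"autocomplete": "true"}, now_ms=40500)
print(json.dumps(store, indent=2, sort_keys=True))
```

The point of the sketch is only that each call to record() produces its own complete entry; nothing is aggregated at recording time.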

Since Glean is built with Rust and then provides SDKs in various target languages, one of the first things we have to do is serialize the data from the shiny target language object that Glean generates into something we can pass into the Rust that is at the heart of Glean. It is worth noting that the Glean JavaScript SDK does this a little differently, but the same ideas about events should apply. A similar structure is used to store the data and then transmit it to the telemetry endpoint when the Events Ping is assembled. A real-world example of this serialized event, taken from Fenix’s “Entered URL” event, looks like this JSON:

    {
      "category": "events",
      "extra": {
        "autocomplete": "false"
      },
      "name": "entered_url",
      "timestamp": 33191
    }

A similar amount of data would be generated every time the metric was recorded, stored and transmitted. So, if the user entered 10 URLs, then we would record this same thing 10 times, each with a different relative timestamp. To take a quick look at how this affects using this data for analysis: if I only needed to know how many users interacted with this feature and how often, I would have to count each event with this category and name for every user. To complicate the analysis a bit further, Glean doesn’t transmit events one at a time. Instead, it collects all events during a “session” (or until it hits 500 recorded events) and transmits them as an array within an Events Ping. This Events Ping then becomes a single row in the data, and nested in a column we find the array of events. In order to even count the events, I would need to “unnest” them and flatten out the data. This involves cross joining each event in the array back to the parent ping record in order to even get at the category, name, timestamp and extras. We end up with some SQL that looks like this (WARNING: this is just an example. Don’t try this, it could be expensive and shouldn’t work because I left out the filter on the submission date):

    SELECT *
    FROM fenix
    CROSS JOIN UNNEST (events) AS event

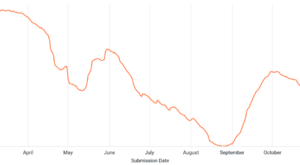

For an average day in Fenix we see 75-80 million Events Pings from clients on our release version, with an average of a little over 8 events per ping. That adds up to over 600 million events per day, and just for Fenix! So when we do this little bit of SQL flattening of the data structure, we end up manipulating over half a billion records for a single day, and that adds up really quickly if you start looking at more than one day at a time. This can take a lot of computer horsepower, both in processing the query and in trying to display the results in some visual representation. Now that I have the events flattened out, I can finally filter for the category and name of the event I am looking for and count how many of that specific event are present. Using the Fenix event “entered_url” from above, I end up with something like this to count the number of clients and events:

    SELECT
      COUNT(DISTINCT client_info.client_id) AS client_count,
      COUNT(*) AS event_count,
      DATE(submission_timestamp) AS event_date
    FROM
      fenix.events
    CROSS JOIN
      UNNEST(events.events) AS event -- Yup, events.events, naming=hard
    WHERE
      submission_timestamp >= '2021-08-12'
      AND event.category = 'events'
      AND event.name = 'entered_url'
    GROUP BY
      event_date
    ORDER BY
      event_date

Our query engine is pretty good: this only takes about 8 seconds to process, and it has narrowed down the data it needs to scan to a paltry 150 GB. But this is a very simple analysis of the data involved. I didn’t even dig into the “extra” information, which would require yet another level of flattening by UNNESTing the “extras” array stored in each individual event.
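For readers who think more easily in code than in SQL, the flattening can be sketched in plain Python. This is only a toy under stated assumptions: the pings below are hand-made, the field names (`client_id`, `submission_date`, the key/value layout of `extra`) are simplified guesses at the shape rather than the real table schema, and `search_bar_tapped` is a made-up event name used as filler.

```python
from collections import defaultdict

# Hypothetical pings: one row per ping, with the events nested in an array.
pings = [
    {
        "client_id": "client-a",
        "submission_date": "2021-08-12",
        "events": [
            {"category": "events", "name": "entered_url",
             "extra": [{"key": "autocomplete", "value": "false"}]},
            {"category": "events", "name": "search_bar_tapped", "extra": []},
            {"category": "events", "name": "entered_url",
             "extra": [{"key": "autocomplete", "value": "true"}]},
        ],
    },
    {
        "client_id": "client-b",
        "submission_date": "2021-08-12",
        "events": [
            {"category": "events", "name": "entered_url",
             "extra": [{"key": "autocomplete", "value": "false"}]},
        ],
    },
    {
        "client_id": "client-a",
        "submission_date": "2021-08-13",
        "events": [
            {"category": "events", "name": "entered_url",
             "extra": [{"key": "autocomplete", "value": "true"}]},
        ],
    },
]


def unnest(rows, column):
    """Yield one (row, element) pair per nested element, like CROSS JOIN UNNEST."""
    for row in rows:
        for element in row[column]:
            yield row, element


clients = defaultdict(set)            # distinct clients per day
event_counts = defaultdict(int)       # matching events per day
autocomplete_counts = defaultdict(int)

for ping, event in unnest(pings, "events"):
    if event["category"] == "events" and event["name"] == "entered_url":
        day = ping["submission_date"]
        clients[day].add(ping["client_id"])
        event_counts[day] += 1
        # Second level of flattening, needed only to reach the extras.
        for extra in event["extra"]:
            if extra["key"] == "autocomplete":
                autocomplete_counts[extra["value"]] += 1

for day in sorted(event_counts):
    print(day, len(clients[day]), event_counts[day])
```

Note that every nested event has to be visited and paired with its parent ping before a single filter can be applied, which is where the work multiplies.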

As you can see, this explodes pretty quickly into some big datasets for just counting things. Don’t get me wrong, this is all very useful if you need to know the sequence of events that led the client to entering a URL. That’s what events are for, after all. To be fair, our lovely Data Engineering folks have taken the time and trouble to create views where these events are already unnested, so I could have avoided doing it manually and instead used the automatically flattened dataset. I wanted to better illustrate the additional complexity that goes on downstream from events, and working with the “raw” data seemed the best way to do this.

If we really just need to know how many clients interact with a feature and how often, then a much lighter weight alternative recommended by the Glean team would be a Counter Metric. To return to what the data representation of this looks like, we can look at an internal Glean metric that counts the number of times Fenix enters the foreground per day (since the metrics ping is sent once per day). It looks like this:

    "counter": {
      "glean.validation.foreground_count": 1
    }

No matter how many times we add() to this metric, it will always take up that same amount of space right there; only the value changes. So, we don’t end up with one record per event, but a single value that represents the count of the interactions. When I go to query this and find out how many clients this involved and how many times the app moved to the foreground of the device, I can do something like this in SQL (without all the UNNESTing):

    SELECT
      COUNT(DISTINCT client_info.client_id) AS client_count,
      SUM(m.metrics.counter.glean_validation_foreground_count) AS foreground_count,
      DATE(submission_timestamp) AS event_date
    FROM
      org_mozilla_firefox.metrics AS m
    WHERE
      submission_timestamp >= '2021-08-12'
    GROUP BY
      event_date
    ORDER BY
      event_date

This runs in just under 7 seconds, but the query only has to scan about 5 GB of data instead of the 150 GB we saw with the event. And, for comparison, there were only about 8 million of those entered_url events per day compared to 80 million foreground occurrences per day. Even with ten times as many occurrences to count, the query that used the Counter Metric Type scanned 1/30th the amount of data. It is also fairly obvious which query is easier to understand. The foreground count is just a numeric counter value stored in a single row in the database along with all of the other metrics that are collected and sent on the daily metrics ping, and it ultimately results in selecting a single column value. Rather than having to unnest arrays and then count them, I can simply SUM the values stored in the column for the counter to get my result.
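The difference in how the two metric types grow can be sketched with a little Python. This only measures the length of the JSON text for the two shapes shown earlier; it is an illustration under that assumption, not a measurement of Glean's actual on-device storage or network usage. The helper names `events_payload` and `counter_payload` are made up for this example, as are the timestamps.

```python
import json


def events_payload(n):
    """JSON size in bytes of n recorded events: one full record per interaction."""
    events = [
        {
            "category": "events",
            "name": "entered_url",
            "extra": {"autocomplete": "false"},
            "timestamp": 1000 * i,  # made-up relative timestamps
        }
        for i in range(n)
    ]
    return len(json.dumps(events).encode("utf-8"))


def counter_payload(n):
    """JSON size in bytes of a counter after n increments: one key, one value."""
    counter = {"glean.validation.foreground_count": 0}
    for _ in range(n):
        counter["glean.validation.foreground_count"] += 1
    return len(json.dumps({"counter": counter}).encode("utf-8"))


# Columns: interactions, bytes as events, bytes as a counter.
for n in (1, 10, 100):
    print(n, events_payload(n), counter_payload(n))
```

The event column grows roughly in proportion to the number of interactions, while the counter column only grows when the value gains another digit.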

Events do serve a beautiful purpose, like building an onboarding funnel to determine how well we retain users and what onboarding path results in that. We can’t do that with counters because they don’t have the richness to be able to show the flow of interactions through the app. Counters also serve a purpose, and can answer questions about the usage of a feature with very little overhead. I just hope that as you read this, you will consider what questions you need to answer and remember that there is probably a well-suited Glean Metric Type just for your purpose, and if there isn’t, you can always request a new metric type! The Glean Team wants you to get the most out of your data while being true to our lean data practices, and we are always available to discuss which metric type is right for your situation if you have any questions.