MemShrink:P1 Bugs Fixed

Terrence Cole made a change that allows unused arenas in the JS heap to be decommitted, which means they more or less don’t cost anything. This helps reduce the cost of JS heap fragmentation, which is a good short-term step while we are waiting for a compacting garbage collector to be implemented. Terrence followed it up by making the JS garbage collector do more aggressive collections when many decommitted arenas are present.

Justin Lebar enabled jemalloc on MacOS 10.7. This means that jemalloc is finally used on all supported versions of our major OSes: Windows, Mac, Linux and Android. Having a common heap allocator across these platforms is great for consistency of testing and behaviour, and makes future improvements involving jemalloc easier.

Gabor Krizsanits created a new API in the add-on SDK that allows multiple sandboxes to be put into the same JS compartment.

Other Bugs Fixed

I registered jemalloc with SQLite’s pluggable allocator interface. This had two benefits. First, it means that SQLite no longer needs to store the size of each allocation next to the allocation itself, avoiding some clownshoes allocations that wasted space. This reduces SQLite’s total memory usage by a few percent. Second, it makes the SQLite numbers in about:memory 100% accurate; previously SQLite was under-reporting its memory usage, sometimes significantly.
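To make the “clownshoes” effect concrete, here is a minimal sketch of why a small size header can be so costly. It assumes a simplified allocator that rounds every request up to the next power of two (real jemalloc size classes are finer-grained than this) and a hypothetical 8-byte header; `round_up_pow2` and `wasted_bytes` are illustrative names, not SQLite or jemalloc functions.

```python
def round_up_pow2(n: int) -> int:
    """Round n up to the next power of two (a simplified size-class model)."""
    if n <= 1:
        return 1
    return 1 << (n - 1).bit_length()


def wasted_bytes(request: int, header: int) -> int:
    """Bytes lost to rounding when `header` bytes are added to `request`."""
    total = request + header
    return round_up_pow2(total) - total


if __name__ == "__main__":
    # Compare the slop with an assumed 8-byte header against no header at all.
    for size in (512, 1024, 4096):
        print(size, wasted_bytes(size, header=8), wasted_bytes(size, header=0))
```

Under these assumptions, a request that is already a power of two wastes nothing on its own, but adding a few header bytes pushes it into the next size class and wastes almost as much again.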

Relatedly, Marco Bonardo made three changes (here, here and here) that reduce the amount of memory used by the Places database.

Peter Van der Beken fixed a cycle collector leak.

I tweaked the JavaScript type inference memory reporters to provide better coverage.

Jiten increased the amount of stuff that is released on memory pressure events, which are triggered when Firefox on Android moves to the background.

Finally, I created a meta-bug for tracking add-ons that are known to have memory leaks.

Bug Counts

I accidentally deleted my record of the live bugs from last week, so I don’t have the +/- numbers for each priority this week.

- P1: 29 (last week: 35)

- P2: 126 (last week: 116)

- P3: 59 (last week: 55)

- Unprioritized: 0 (last week: 5)

The P1 result was great this week — six fewer than last week. Three of those were fixed, and three of those I downgraded to P2 because they’d been partially addressed.
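The net change per priority can be recomputed from the counts listed above. The sketch below only does that arithmetic; it gives the net difference between the two weeks, not how many bugs were closed versus newly filed, and the names `COUNTS`, `net_changes` and `totals` are made up for illustration.

```python
# (this week, last week) for each priority, copied from the list above.
COUNTS = {
    "P1": (29, 35),
    "P2": (126, 116),
    "P3": (59, 55),
    "Unprioritized": (0, 5),
}


def net_changes(counts):
    """Net week-over-week change for each priority."""
    return {name: now - prev for name, (now, prev) in counts.items()}


def totals(counts):
    """Total open bugs (this week, last week)."""
    this_week = sum(now for now, _ in counts.values())
    last_week = sum(prev for _, prev in counts.values())
    return this_week, last_week


if __name__ == "__main__":
    print(net_changes(COUNTS))
    print(totals(COUNTS))
```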

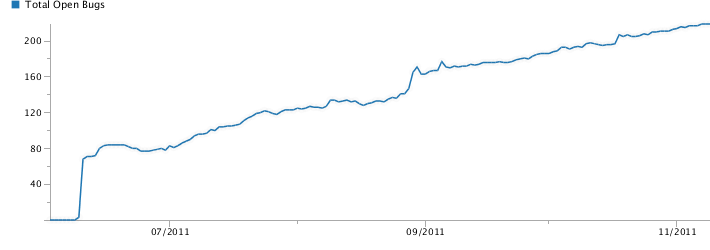

For a longer view of things, here is a graph showing the MemShrink bug count since the project started in early June.

There was an early spike as many existing bugs were tagged with “MemShrink”, and a smaller spike in the middle when Marco Castellucio tagged a big pile of older bugs. Other than that, the count has marched steadily upward at the rate of about six per week. Many bugs are being fixed and definite improvements are being made, but this upward trend has been concerning me.

Future Directions

So in today’s MemShrink meeting we spent some time discussing future directions of MemShrink. Should we continue as is? Should we change our focus, e.g. by concentrating more on mobile, or setting some specific targets?

The discussion was long and wide-ranging and not easy to summarize. One topic was “what is the purpose of MemShrink?” The point being that memory usage is really a secondary measure. By and large, people don’t really care how many MB of memory Firefox is using; they care how responsive it is, and it’s just assumed that reducing memory usage will help with that. With that in mind, I’ll attempt to paraphrase and extrapolate some goals (apologies if I’ve misrepresented people’s opinions).

- On 64-bit desktop, the primary goal is that Firefox’s performance should not degrade after using it heavily (e.g. many tabs) for a long time. This means it shouldn’t page excessively, and that operations like garbage collection and cycle collection shouldn’t get slower and slower.

- On mobile, the primary goal probably is to reduce actual memory usage. This is because usage on mobile tends to be lighter (e.g. not many tabs) so the longer term issues are less important. However, Firefox will typically be killed by the OS if it takes up too much memory.

- On 32-bit desktop, both goals are relevant.

As for how these goals would change our process, it’s not entirely clear. For desktop, it would be great to have a benchmark that simulates a lot of browsing (opening and closing many sites and interacting with them in non-trivial ways). At the end we could measure various things, such as memory usage and garbage and cycle collection time, and we could set targets to reduce those. For mobile, the current MemShrink process probably doesn’t need to change that much, though more profiling on mobile devices would be good.

Personally, I’ve been spreading myself thinly over a lot of MemShrink bugs. In particular, I try to push them along and not let them stall by doing things like trying to reproduce them, asking questions, etc. I’ve been feeling lately like it would be a better use of my time to do less of that and instead dig deeply into a particular area. I thought about working on making JS script compilation lazy, but now I’ve decided instead to focus primarily on improving the measurements in about:memory, in particular, reducing the size of “heap-unclassified” by improving existing memory reporters and adding new ones. I’ve decided this because it’s an area where I have expertise, clear ideas on how to make progress, and tools to help me. Plus it’s important; we can’t make improvements without measurements, and about:memory is the best memory measurement tool we have. Hopefully other people agree that this is important to work on 🙂

45 replies on “MemShrink progress, week 21”

Improving about:memory coverage is absolutely important to work on, and I agree that you’re in a really good position to do it!

I used to see Firefox go to 800MB memory usage and never go below that. These days it hovers over 400MB and clearly goes down when I close a tab. And I love that.

But increasingly I feel myself being drawn to the responsiveness of Chrome and Opera. Just moving a tab on the tab bar is choppy in Firefox, whereas Chrome and Opera remain smooth. Opening multiple background tabs slows Firefox down so much that after 5 tabs I have to wait for Firefox to process them before going on. I feel like these should just be fast.

I love the memshrink project and would like it to continue but you have to have a similar project for responsiveness. The fastresponse project should also have a weekly report like yours.

There is a sort of memory wall when active resources (mostly the constantly garbage-collected js heap, large or frequently rendered graphics) start to fill up the ram and get swapped in and out to the disk. That kills responsiveness.

“By and large, people don’t really care how many MB of memory Firefox is using; they care how responsive it is, and it’s just assumed that reducing memory usage will help with that.”

I think that is the fundamental problem. The above assumption is partly false.

You are correct that most people don’t read memory usage. They don’t care or they don’t know. But the media do, enthusiasts do, bloggers do, geeks do, and they read what we write and spread those words.

Using less memory means using less power on tablets and other devices. Whether or not that is true in most scenarios, the media will love to pick up on it.

Using less memory than the competition could also be your main selling point.

Slim, Light Customizable and Elegant.

Responsiveness has NOTHING to do with memory usage. NO one, not even geeks or enthusiasts, links the two together in any browser comparison. They are two different things. In a perfect world, it should be extremely memory efficient and extremely responsive all the time.

And since the latest meeting meant e10s is out or postponed, there should be a Responsiveness Progress like MemShrink as well.

Whilst the media may rarely link the two, as Nicholas mentions above, paging to disk affects responsiveness, so they still are related.

There will be 🙂 See…

https://groups.google.com/d/msg/mozilla.dev.planning/4q7SW89MjQY/7f1KConKZLEJ

Memory and responsiveness are related, because the more memory you use, the more difficult it is to find new available memory and to check used memory, and even to use it, because more data is moved back and forth to the CPU and the OS may even need to move the data to paging, with a big slowdown.

I would be very happy if firefox would use even 2gb of my 4gb ram if it was always incredibly snappier with everything in cache or similar, from sqlite to web page but it can set like that (I think).

I’d like to lend my support to Ed. Memory is very important to me, and to everyone I work with. Much of our work is done through the browser (email, testing, research, reading documentation), and most of us have older hardware, with 2GB of RAM, and when Firefox uses up 50% or more of that, it starts to limit what I can do without swapping. So yes, I care about responsiveness, but about the responsiveness of my whole system, not just Firefox. Firefox is just one part of the whole, and its memory usage alone can impinge on system performance.

Please do not go chasing “responsiveness” alone before memory issues are firmly in hand.

Memory leak from Hotmail.

Maybe you would be interested in a user’s SUMO post, https://support.mozilla.com/en-US/questions/892214, “Memory leak while using Hotmail”. Using fx7.01 on XP and leaving Hotmail running, the user notes (my edit):

I have restarted FF with plugins disabled and used about:memory and got these stats:

After running FF for 5 minutes:

¦ +--2.80 MB (03.96%) -- compartment(http://secure.shared.live.com/_D/F$Live….)

¦ +--108.97 MB (36.42%) -- after running 16 hours:

¦ +--144.42 MB (38.79%) -- 24 hours:

¦ +--181.51 MB (42.62%) -- 40 hours:

and in another instance

¦ +--695.60 MB (51.94%) -- over a weekend

Maybe you or someone knowledgeable about this could comment in the SUMO thread, or here, on whether it would be worth filing a bug for this behaviour. Or is this considered acceptable behaviour for Firefox running webmail over longer periods of time?

Thanks in advance for any advice.
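For anyone wanting to turn readings like these into a growth rate, a rough sketch follows. It assumes the first four figures come from one continuous session and the same compartment (the comment does not say so explicitly); `READINGS` and `growth_rates` are hypothetical names.

```python
# (hours since start, compartment size in MB), copied from the figures above.
# The first reading was taken after 5 minutes.
READINGS = [
    (5 / 60, 2.80),
    (16.0, 108.97),
    (24.0, 144.42),
    (40.0, 181.51),
]


def growth_rates(readings):
    """Average growth in MB per hour between consecutive readings."""
    return [
        (mb1 - mb0) / (t1 - t0)
        for (t0, mb0), (t1, mb1) in zip(readings, readings[1:])
    ]


if __name__ == "__main__":
    for rate in growth_rates(READINGS):
        print(f"{rate:.2f} MB/hour")
```

A rate that stays well above zero over many hours with the page idle is the kind of evidence that makes a bug report easier to act on.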

I think the amount of memory used is very important. The browser is one of the few programs I actually *need* to keep open, no matter what I do (e.g. to read documentation). Firefox’s memory usage will routinely go above 400 MB, and never recover (even though at startup it can use < 200 MB with 5+ tabs). This means that Firefox alone will tend to literally use a quarter of the physical memory I have available on this old 32-bit machine (2 GB).

It tends to be assumed that "normal" users (whatever that means) only write emails and browse the web: that they actually ONLY need a web browser. This is just not true. The browser is often not the main tool, so it shouldn't take too much resources from the main work tool, whatever that may be (what I see in my student environment: CAD, image editing, statistical data processing, all very memory sensitive tasks).

I wouldn't complain if I didn't often have to close the browser just to be able to process a bigger statistical dataset or create a bigger plot.

I will say though that Firefox still seems to have the lowest memory usage of the browsers I tried (definitely better than Chrome).

This post started out with great news yet again. More progress! Then it went to hell. It seems that some of the old Mozilla attitude that memory efficiency is not critical has started to push through again. Ignore it I say! Firefox memory efficiency has been such an under-prioritized focus for Mozilla for so long, they cannot possibly think that there is any merit to killing the MemShrink momentum until there is very little room for improvement!

The principle behind this idea is sound though. I agree it’s not specifically about memory usage per se but about efficiency and performance. However memory bloat has been such a huge issue for Mozilla for so long, it’s the perfect place to start when addressing efficiency and performance. There should be no stopping until there’s less than 10% improvement to be gained.

Congratulations on your hard work in processing what seems like needless noise to get the signal right: metrics are key. Can’t change anything without quantifying it over time. Perceptions are too varied and vague whereas judging through metrics is much more solid.

Define “memory efficiency”, please.

Great question! I would say, right off the top of my head, that memory efficiency is the ability of a program to manage its memory without interfering with the program’s user experience.

This would include not randomly thrashing the hard drive and blocking UI when a user types text into the location bar, which still happens to me frequently. That’s just one example I guess.

Another example might be that a program should never exceed a certain ratio of available physical memory relative to – in the case of a browser – amount of tabs open. For example it appears that on every Windows machine I’ve used, the total amount of physical memory available is pretty much irrelevant. Once a single program takes up a disproportionately large amount of physical memory (such as Firefox often taking up over half of my memory), that program seems to inevitably spend a lot of time thrashing the pagefile/hard disk until it eventually becomes unusable and requires a restart. This is the experience I still have with Firefox to this very day.

Therefore I don’t really care what it takes, but memory efficiency should take into account the realistic behaviour of an operating system for sure. Whilst an application may take up only 60% of available physical memory and the rest of the system takes up just another 10% – for argument’s sake – my real-world experience over the years suggests that despite the remaining 20% (before things get tight), that program occupying 60% of memory is already bogging down the system.

I would be more than happy to have a setting in Firefox that I could tune myself that would dump tab content from memory, leaving the tab open essentially as a glorified bookmark, until enough memory is freed up to keep the browser’s memory usage below X% of overall system memory. If dumping tab memory doesn’t do it, whatever other resource-releasing activity is possible should be undertaken.

Furthermore, memory efficiency should involve prioritizing certain core features of a program’s UI. As with my above example about the location bar, it is just not acceptable – for all the help the awesome bar provides – for it to randomly block UI and thrash the hard drive, simply to view history. A user should never be prevented from typing in a full url and hitting enter. If the awesome bar is not available, don’t show it until resources are available, rather than blocking the UI. Alternatively, show a super-low-resource consuming notification that the awesome bar isn’t available right now. If not those options, heavily prioritize the awesome bar’s memory allocation.

Additionally, it’s laughable that the people at the MemShrink meeting think that a focus on total memory usage (for mobiles) as well as memory efficiency, is worth pointing out. What have you got in the end? The same thing you started with: total memory usage AND memory efficiency are STILL the core objectives. How has that changed?

Finally, there is absolutely no way in hell they can realistically consider changing MemShrink’s focus or downgrading the effort when one of your announcements in this post is that Add-On memory leak has only barely been touched upon, as is indicated by the belated creation of a meta bug. Extensions are still the biggest single reason people I know stick with Firefox. Mozilla cannot seriously put the brakes on MemShrink, or send it in a different direction, when the investigation into Add-On memory issues has barely scratched the surface.

In Latin America there’s a feeling that memory is related to responsiveness. On “Army of Awesome” I always see many tweets saying “I hope they fix the memory leak problem” or “well I don’t see a big difference in memory usage, before 400MB now 399MB”. Users often compare the amount of memory Firefox uses, and MemShrink needs to take care of memory problems.

I can’t agree more with pd: “There should be no stopping until there’s less than 10% improvement to be gained.”

I use the Mozmill stress test, but it seems unrealistic to me because it just opens new tabs with the front page of, for example, Gmail and Facebook. I’m not logged in, so many things that are supposed to load don’t. Is there a way to run this test with my profile?

Add more memory reporters to SQLite and tweak the usage of SQLite. This causes most memory usage and UI hangs.

SQLite now has 100% memory reporting coverage.

Do you have data backing up this claim?

I already posted a Bugzilla entry some time ago saying that I very often have UI hangs, and the dumps always show that SQLite was the cause.

Also, if you run expire, vacuum and statistic you can see a huge amount of heap-unclassified memory, so SQLite is not fully covered by memory reporters.

Did you read my post? I said “Second, it makes the SQLite numbers in about:memory 100% accurate; previously SQLite was under-reporting its memory usage, sometimes significantly.”

SQLite runs the places DB that is the core of the AwesomeBar data store, right? In that case, I personally don’t need data; I agree that the hangs the AwesomeBar often presents are a huge UI blocker.

A long time ago, FireFox used to be my default browser.

Then I turned to hate FireFox because it was bloaty and I ran a 3GB 32-bit Windows machine with Visual Studio, Office etc., all memory hogs running. I have been using Chrome for a long time because it was lean and fast. I even deleted FireFox from my quick-launch.

Not any more recently.

Ever since MemShrink started, after a while I ended up using FireFox much more, to the point that it is now my favourite browser again. I switched from FireFox to Chrome back to FireFox, why?

Because in Chrome I cannot open 10-20 tabs at the same time without it hogging all the memory of the machine and grinding it to a slow death. It is still the same (though less of an issue) since I switched to a 64-bit Windows 7 setup. However, recent Aurora versions of FireFox have no problems with loading tons of pages simultaneously and memory usage for lots of tabs is only a fraction of Chrome’s. Now I don’t even run Chrome any more.

So, in the Windows world, I think memory usage does impact performance a great deal. Judging from the fact that I returned from Chrome to FF means that you guys are doing something right. I’d say just keep doing it!

Me too.

In the past 18 months or so, I’ve done a complete loop through the major browsers on my MacBook: Safari -> Firefox -> Chrome -> Firefox.

Each time, I left for a new browser because of perceived speed and memory issues. Having returned to Firefox Beta in August, I have to say I’m very happy. The new rapid release schedule, JS engine improvements, and MemShrink provide me with a weekly sense of progress — Firefox is better every time I check! NoScript allows me to deal with the creepy components of Google/Facebook in a way Chrome can’t (or won’t).

So as Stephen says, keep up the great work. Firefox and this blog are awesome!

I think the MemShrink project has reached a lot of goals. Now Firefox is the leaner browser (apart from mini-browsers, like Lynx :D), above all for hardcore browsing :).

The work is continuing, the fact that now we should focus on responsiveness doesn’t mean it has stopped. For example there are the improvements in GC or the improvements in JavaScript object shrinking (really really important). And, as you can see, the number of MemShrink bugs still open is really high.

I think now memory reporters are a more important work, as they give the opportunity to not regress in future (and to continue improving memory usage). Another area of improvement should be memory usage in the mobile browser, we should consider the fact that mobile browsing is a bit different. We should use a really low amount of memory from the start, and this would also improve startup time (fewer memory allocations).

So, next project: ResponseShrink?

Are the problems with the leaking add-ons things that could be forcibly cleaned up at the browser level if a big enough hammer was applied, or are they issues that must (as opposed to should be) fixed by the add-on authors? Even if it’s inelegant forcing a cleanup at the browser level might be worth doing since 99% of the time people are going to blame their problems on Firefox even if an add-on is to blame.

Unfortunately, add-ons are able to embed their claws too deeply into Firefox to be forcibly cleaned up, AIUI. Even distinguishing memory use caused by add-ons is extremely difficult. (Those statements are much less true for add-ons that use the new add-on SDK — part of the reason it was developed — but most add-ons don’t.)

First, if I haven’t mentioned it before, reading your blog has renewed my faith in the future of Firefox. From your post today, I think it’s time you were assigned a team to get this work done – you’ve proven its merit.

I wouldn’t be too quick to give up on memory usage on 64-bit desktops. My main desktop currently has Firefox 7 on Fedora 15 with a few popular extensions and with a couple dozen tabs open it’s using a gig and a half of committed memory (half is unclassified on this version). A desktop PC with 1GB of RAM isn’t rare, and a 2GB machine is probably just standard. Using up 75% of a typical desktop for an average use case isn’t a great goal. As others mentioned already, many people will be sent into swap for this. There’s also the electricity cost of keeping extra RAM fresh (IIRC some architectures will sleep unused RAM). Consider the green aspect (or black if you’re counting tons of coal) as well of this work.

While I’m watching with fascination I can’t do much to help at this point. I read the Firebug leak bug, and there’s a fellow on there who has a tool that’s very useful for finding the source of leaks, but as far as I know there’s no easy way for me to run that, or even for the Firebug dev to run it.

I’d like to be able to see how much memory each of my tabs is using, so I can weigh content vs. leaks. I’ve often wished for a ‘top’ inside Firefox so I could find the tab that’s using all my memory or CPU.

I’d like to be able to see how much memory each of my extensions is using, so I can point fingers and test appropriately (I’ve just disabled Firebug for my daily browsing after reading that bug).

If these things are possible, they may be ways to democratize the workload and get some volunteers onboard to help lighten the load and perhaps change the direction of that trendline.

https://bugzilla.mozilla.org/show_bug.cgi?id=400120 is open for the per-tab memory usage. See the dependent bug 687724 — there’s actually a good chance this will happen, at least for JS memory usage. The per-compartment JS reporters in about:memory get you part of the way currently.

I’d like to be able to see how much memory each extension is using too. Unfortunately, for add-ons that don’t use the add-on SDK (which is most of them) it’s extremely difficult to distinguish add-on memory use from core browser memory use.

In terms of spreading the load, it’s hard for newbies to help… most of the important bugs need someone who already understands firefox’s code :/

Thanks for the reply last week and the mod to the post! Much appreciated.

Keep working on building a good set of reliable tools. Once that’s out of the way you can apply your expertise in a more focused way. The measuring/analysing tools should come first. There was a time when FF was constantly taking more than 1 GB to watch videos in Flash and over 1.5 GB for Facebook. Now I can watch a video in Flash using less than 550 MB, and Facebook is usable. MemShrink is an important project and should NOT be abandoned!

Keep up the great work

Couldn’t Test Pilot be the way to get somewhat realistic data on memory usage over time? I don’t know how much information Test Pilot can garner, but even just taking the averages of everyone’s memory and putting it on a graph over time to put on areweslimyet.com would be nifty to show the real-world (if a bit unscientific) graph over time of the decreasing memory usage. Granted addons and whatnot might make it less useful for benchmark purposes, but I feel like that sort of a thing would be better than any automated test you could do.

We get various memory stats via telemetry. The hard part with doing comparisons over time is that it assumes that everyone’s workloads are consistent over time, which is not true at all.

I don’t see why that would matter. You’re getting data from a lot of people at once. There should still be some sort of decreasing trend if the memory usage is actually getting better.

Like I said, that may make it less useful for targeting specific things since the data needs to be en masse for it to have a meaning (at least how I see it), but I think it might be useful just for something like “Oh we did this fix, and were hoping it would give x improvement, but then you see 0 improvement at all”… idk, I just feel like it would be more useful than not having it, if only to have a tangible way to see improvement for areweslimyet

I love the work and attention Mozilla is giving to reducing Firefox’s memory usage.

I’m not really sure if I agree with you that people “don’t really care how many MB of memory Firefox is using”, I think a lot of tech users do really care. But more importantly I totally agree on the responsiveness part. Yesterday I used Chrome to test a website and was amazed it was running so damn fast. Almost right after I clicked a link the new page was rendered. Chrome on Windows in a VirtualBox on OS X was much faster than Firefox on OS X directly with the same website and link. The click event handling in Firefox always felt kind of laggy to me, and I think the total browsing experience suffers from it.

Keep up the good work and it’s nice to read you’re moving the focus to a lean browsing experience instead of memory usage alone.

I count 4 P1 bugs instead of 3: the one of Peter van Beken is also a P1 (beside the 3 on top).

Nick not only this week did we find out that Mozilla is starting to circle the MemShrink effort, looking for ways to corrupt it, but I just found out about Jetpack Features:

In essence, considering that – as you’ve admitted – Mozilla is clueless about measuring Add-On memory usage, doesn’t the idea of turning core Firefox features into Jetpacks essentially equate to potentially contradicting everything MemShrink has achieved to date?

Call me paranoid but this sounds like the week I’ve been dreading: the week when yet more strange strategic direction changes have emerged that appear to threaten MemShrink.

Am I wrong?

As Nick noted above, it’s addons that don’t use the addon SDK that cause problems for reporting memory usage. If the Jetpacks are all written using it I don’t think it should be a problem.

Yes, I think you’re wrong.

1) Memory reporters are a great feature to continue improving memory usage and to avoid regressions. As Nicholas says “we can’t make improvements without measurements”. There are still bugs which need memory reporters, where heap-unclassified is 90%.

2) MemShrink isn’t stopped. There are a lot of bugs still open (216, as you can see from the blog post), there are a lot of new bug reports and there are a lot of important things to be done (like GC improvements and JavaScript Object shrinking, above all) that will likely improve not only memory usage, but also responsiveness.

3) The aim to improve responsiveness will likely improve also memory usage, because they are somewhat tied. For example if we stop using SQLite databases where not needed, we will also use less memory.

Mozilla has done a lot in this field, now Firefox is the leanest browser. The next goal is to improve responsiveness, that is more important for users.

“not only this week did we find out that Mozilla is starting to circle the MemShrink effort, looking for ways to corrupt it”

You’re sounding paranoid and silly now.

Based on Mozilla’s track record, I don’t think that’s fair.

Anyway, as they say, just because I’m paranoid doesn’t mean I’m wrong 🙂

I may have expressed myself poorly but essentially what I was trying to say is that any threat to MemShrink’s focus and momentum – especially before someone at Mozilla finally understood my perspective regarding add-on memory issues – was very worrying to say the least.

Justin (?) Lebar needs to don the lycra, wear his undies on the outside and start learning his trapeze so he can take over the superhero chief lizard wrangler’s role.

It’s incredible that it took 7 years of Firefox, plus the preceding gestation period of the product, for someone to sit back and realize that blindly opening up browser chrome to extensions – without an appropriate plan for the memory issues this could generate – was not such a good approach. That this realization seemingly has not come from the leadership at Mozilla indicates that there’s a problem ‘upstairs’. Mozilla has the resources and made the initial oversight so it is Mozilla that needs to take responsibility for handling the problem.

Today is a great day, Vote Lebar for Prime Minister! 🙂

I like the plot of open-and-memshrink-tagged bugs over time. It might be more useful to plot total-memshrink-tagged bugs over time and closed-memshrink-tagged bugs over time.

I care a ton about how much memory Firefox is using. Partly that’s because of its leak history; I have to keep an eye on it to make sure it’s functioning well. But because I multitask to a ridiculous degree, it’s something I’m always gonna care about regardless.

Just to go off on a rant here, I just upgraded to the 9 beta and it disabled my theme without asking me. I use Add-On Compatibility Checker, but apparently I didn’t even need it for this because my theme (Qute 3++ (custom mod) 1.53) is already marked as compatible. It’s reenabled now, but that was just an annoyance I wasn’t prepared to deal with. I’m already kinda pissed at the appearance department for how badly personas are implemented (no local storage, ability to work with other themes disabled), something they haven’t bothered to fix since 3.6. I’m glad the Memshrink team is on the ball, but somebody needs to give the appearance team a good kick in the head. Good gads.

I should mention on the positive side, memory use doesn’t seem to have been much impacted by type inference as far as I can tell yet (though this is still early in the session), but responsiveness is noticeably better.

Update on FF9: I noticed that while memory growth seems to be a little less than it is in 8.0, virtual memory (as reported by the task manager) seems to be growing at the rate it did in 8. In 8 they stayed more or less in sync; in 7 the virtual memory could grow a little faster. The situation is still an incremental improvement, but it’s weird.

I’m a huge fan of both the MemShrink project, and these regular updates—it creates a sense of progress but also accountability; the fact that you provide updates means that we users can feel more confident that our needs aren’t being ignored. So thank you!

But further on that note, would it be possible/feasible to add a graph of measured progress, e.g. total memory consumption for one or a few typical workloads? Bug counts are interesting, and specific details extremely so, but I think it would be truly valuable to have these reports regularly chart the overall progress of the memory-shrinking task. With 21 weeks and so 21 relevant data points (or 21 per tested workload), I’d be curious to see it.

I’m not sure there’s a reasonable metric they could come up with aside from a benchmark, but a memory benchmark won’t provide the whole picture because some bugs are exacerbated or caused based on the pages being visited or add-ons in use. I’d love a graph too just for the sake of a quick look, but if it existed it probably wouldn’t tell me a thing about what I could expect from my own usage pattern.

pd:

“Extensions are still the biggest single reason people I know stick with Firefox. Mozilla cannot seriously put the brakes on MemShrink, or send it in a different direction, when the investigation into Add-On memory issues has barely scratched the surface.”

—

This is my thought _exactly_ !! I’m starting to use Chrome a little bit more, it’s faster but I really, really like Firefox’s selection of addons.

My favorite is Tab Mix Plus, I use it everyday, I love being able to use the session manager with it. It’s much, much better than the Chrome’s Session Manager 3.3.0

Even with my Win7 x64 and 4GB ram, I do run into memory problems from time to time.

Eventually addons for Chrome will get better; if Firefox doesn’t keep going down the MemShrink and responsiveness path you will lose a lot more marketshare. It’s really as simple as that.

I love Firefox though, I’m committed to FF 8.0 Final, but it would have been much easier if I had just stuck to FF 3.6 through these last months. I am going to continue to install the FF Beta and Aurora ones (FF Aurora 10.0a2 tonight) though in Sandboxie to test out like I usually do.

Thanks for keeping us updated on the progress.