When I first joined the webdev group at Mozilla I was a Mercurial refugee who had never used git or GitHub. I had always been daunted by git, and suddenly I had to learn it really fast! Fast forward to today and I can’t imagine working on a highly collaborative project without git or GitHub. Here is the workflow we use for the addons.mozilla.org project. I highly recommend it, and I’ll summarize exactly why at the end. It’s pretty similar to how I’ve heard a lot of teams work, but it has some subtle differences.

Using topic branches

The first thing I do is sync up with master and create a topic branch for my new feature or bug fix:

```shell
git checkout master
git pull
git checkout -b add-email-to-install
```

Now I have a branch I can commit code into without affecting master. The git checkout command makes it super easy to switch between branches in the same repository clone if I’m multi-tasking or applying hot fixes. If I have uncommitted work when I need to switch, git stash sets it aside and git stash pop brings it back.
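Here is a minimal sketch of that stash-based task switch. It runs in a throwaway repository created with mktemp so it can’t touch real work; the hotfix-typo branch, the file names, and the committer identity are made up for illustration, and git stash push needs git 2.13 or newer.

```shell
set -eu
# Made-up identity and a throwaway repository for the example commits
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
REPO=$(mktemp -d)
cd "$REPO"
git init -q
git symbolic-ref HEAD refs/heads/master

echo "v1" > app.txt
git add app.txt
git commit -q -m "Initial commit"

# Start the feature and leave some work uncommitted
git checkout -q -b add-email-to-install
echo "half-finished feature" >> app.txt

# An urgent fix comes in: set the unfinished work aside
git stash push -q
git checkout -q master
git checkout -q -b hotfix-typo
echo "typo fixed" > hotfix.txt
git add hotfix.txt
git commit -q -m "Fix typo (bug #NNNNNN)"

# Back to the feature: restore the stashed changes
git checkout -q add-email-to-install
git stash pop -q
git status --short
```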

Commit messages

It’s really important to write a well-formed git commit message. We always include the bug number from Bugzilla, our tracker, so that anyone can get the full back story behind a change.

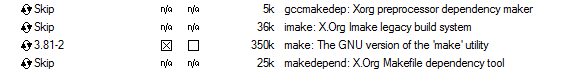
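As a sketch of a message in that shape (the bug number stays the NNNNNN placeholder used throughout this post, and the body line is a stand-in), repeated -m flags each become their own paragraph, so the first one is the one-line summary that git log --pretty=oneline shows. The repository, file, and identity are throwaway assumptions:

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
REPO=$(mktemp -d)
cd "$REPO"
git init -q
git symbolic-ref HEAD refs/heads/master
git commit -q --allow-empty -m "Initial commit"

git checkout -q -b add-email-to-install
echo "ALTER TABLE installs ADD COLUMN email VARCHAR(255);" > migration.sql
git add migration.sql
# First -m: summary line with the tracker reference; second -m: the longer story
git commit -q \
  -m "Adds email to the install record (bug #NNNNNN)" \
  -m "Longer explanation of why the change was made goes here."
git log -1 --format=%B
```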

Ask for a code review

Once I’ve added my feature with passing tests, I commit my changes, push to my personal fork of the repository, and ask someone on my team to review the code. On addons.mozilla.org we just ping each other in IRC with a link to the commit or to the compare view. If no one is around, we submit a pull request.

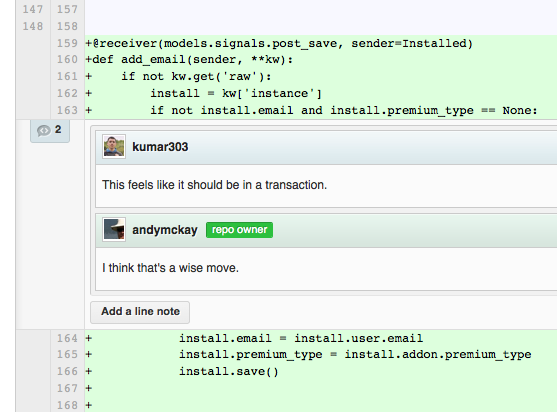

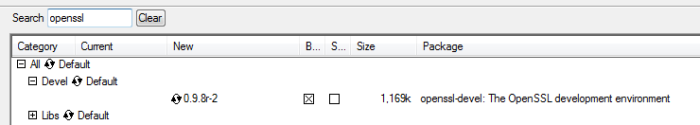

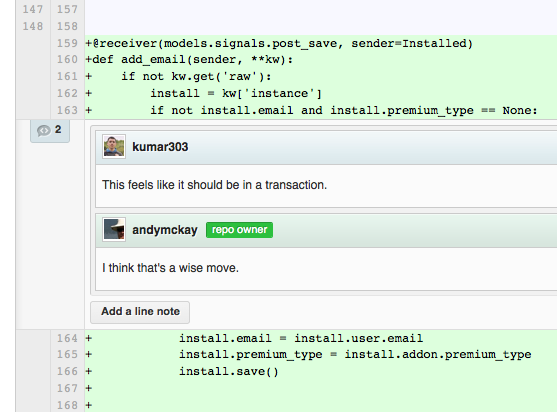
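A minimal sketch of the push-for-review step described above, with a local bare repository standing in for the personal fork on GitHub. The remote name fork is an assumption (your fork might well be origin), and -u records the fork’s branch as this branch’s upstream:

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
FORK=$(mktemp -d)
WORK=$(mktemp -d)
# A bare repository plays the role of the personal fork
git init -q --bare "$FORK"

cd "$WORK"
git init -q
git symbolic-ref HEAD refs/heads/master
git commit -q --allow-empty -m "Initial commit"
git checkout -q -b add-email-to-install
echo "email = None" > install.py
git add install.py
git commit -q -m "Adds email to the install record (bug #NNNNNN)"

# Hypothetical remote name "fork"; -u sets it as the branch's upstream
git remote add fork "$FORK"
git push -q -u fork add-email-to-install
```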

GitHub has a sweet interface where you can write comments directly on the diff, like this:

Whoops, another change is needed based on feedback from the code review.

Fixing up the topic branch

The nice thing about working in a topic branch is that it’s isolated from master and no one else is tracking it, so I can use git rebase to craft the best commit before merging into master. Let’s say I have some commits on my branch like this:

```text
$ git log --pretty=oneline -2
825d662cc69774e412119e1eb7ae0900c29d89a0 Fix: put code in a transaction
31378788f321b46f5e27f9fb51bdd19365636871 Adds email to the install record (bug #NNNNNN)
```

What I really want is to combine those two commits into one. I can do that with git rebase --interactive (or -i for short). I type:

```shell
git rebase -i HEAD~2
```

Then I’ll get a prompt for rebasing my last two changes:

```text
pick 3137878 Adds email to the install record (bug #NNNNNN)
pick 825d662 Fix: put code in a transaction

# Rebase 194b59d..825d662 onto 194b59d
#
# Commands:
# p, pick = use commit
# r, reword = use commit, but edit the commit message
# e, edit = use commit, but stop for amending
# s, squash = use commit, but meld into previous commit
# f, fixup = like "squash", but discard this commit's log message
# x, exec = run command (the rest of the line) using shell
#
# If you remove a line here THAT COMMIT WILL BE LOST.
# However, if you remove everything, the rebase will be aborted.
#
```

If I change pick to fixup on the second commit, git folds it into the first and discards its commit message:

```text
pick 3137878 Adds email to the install record (bug #NNNNNN)
fixup 825d662 Fix: put code in a transaction
```

Now I have one commit (it’s actually a new commit) that contains all of my changes:

```text
$ git log --pretty=oneline -1
c7846808c8296dd49d49612101aaed7cdfd6d220 Adds email to the install record (bug #NNNNNN)
```

Pretty slick, right?
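To try the fixup without an editor, here is a sketch that recreates two commits like the ones above in a throwaway repository and sets GIT_SEQUENCE_EDITOR to a sed command that rewrites the second pick line of the todo list to fixup. git appends the todo file’s path to that command; the file contents and identity are made up:

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
REPO=$(mktemp -d)
cd "$REPO"
git init -q
git symbolic-ref HEAD refs/heads/master
git commit -q --allow-empty -m "Initial commit"

git checkout -q -b add-email-to-install
echo "ALTER TABLE installs ADD COLUMN email VARCHAR(255);" > migration.sql
git add migration.sql
git commit -q -m "Adds email to the install record (bug #NNNNNN)"
echo "-- now wrapped in a transaction" >> migration.sql
git commit -q -a -m "Fix: put code in a transaction"

# sed edits line 2 of the todo list in place ("-i.bak" works with GNU and BSD sed)
GIT_SEQUENCE_EDITOR="sed -i.bak -e '2s/^pick/fixup/'" git rebase -q -i HEAD~2
git log --pretty=oneline -2
```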

Typically you’d want to wait until everyone has had a chance to review your code before you start rebasing. However, GitHub pull requests do handle rebased changes: you can force-push (git push -f) to your own fork, and the pull request will remove the old commits from the conversation and add the new ones at the bottom.
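A sketch of that force-push, with a local bare repository standing in for the fork (the remote name fork and the files are assumptions). After the squash, a plain push is rejected as a non-fast-forward, while -f replaces the branch on the fork. git push --force-with-lease is a more cautious alternative: it refuses if the fork’s branch has moved since you last fetched it.

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
FORK=$(mktemp -d)
WORK=$(mktemp -d)
git init -q --bare "$FORK"
cd "$WORK"
git init -q
git symbolic-ref HEAD refs/heads/master
git commit -q --allow-empty -m "Initial commit"
git remote add fork "$FORK"

# Two commits pushed for review
git checkout -q -b add-email-to-install
echo "email = None" > install.py
git add install.py
git commit -q -m "Adds email to the install record (bug #NNNNNN)"
echo "# now in a transaction" >> install.py
git commit -q -a -m "Fix: put code in a transaction"
git push -q fork add-email-to-install

# Squash them locally; sed marks the second todo line as fixup
GIT_SEQUENCE_EDITOR="sed -i.bak -e '2s/^pick/fixup/'" git rebase -q -i HEAD~2

# A normal push no longer fast-forwards, so it is rejected...
if git push -q fork add-email-to-install 2>/dev/null; then
  PLAIN_PUSH=accepted
else
  PLAIN_PUSH=rejected
fi
# ...and a force-push replaces the branch on the fork
git push -q -f fork add-email-to-install
```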

Merge into master

When my changes are ready, I can merge my branch back into master. If there’s only one commit to merge in, though, I don’t need a merge commit; it would just clutter up the logs. Instead I use a fast-forward merge:

```shell
git checkout master
git merge --ff add-email-to-install
```

Now I can close the ticket in our tracker with a direct link to my changes.
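A sketch of that fast-forward in a throwaway repository (files and identity made up). Note that --ff is also git’s default behavior: if master has moved on since the branch was created, it quietly falls back to a merge commit, whereas git merge --ff-only refuses and leaves master alone.

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
REPO=$(mktemp -d)
cd "$REPO"
git init -q
git symbolic-ref HEAD refs/heads/master
git commit -q --allow-empty -m "Initial commit"

git checkout -q -b add-email-to-install
echo "email = None" > install.py
git add install.py
git commit -q -m "Adds email to the install record (bug #NNNNNN)"

# master hasn't moved, so the merge just advances master to the branch tip
git checkout -q master
git merge -q --ff add-email-to-install
git log --oneline --graph
```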

Sometimes I actually make multiple commits on a single topic branch. In that case I want a merge commit that groups them together, so instead of a fast-forward merge I force one with --no-ff:

```shell
git merge --no-ff add-email-to-install
```

I can then close the ticket with a link to the single merge commit that shows all changes introduced by the branch.
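The same kind of throwaway sketch with two commits on the branch (files made up): --no-ff creates a merge commit even though a fast-forward was possible, and --no-edit accepts git’s default merge message instead of opening an editor. The merge commit’s first parent is the old master and its second parent is the branch tip.

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
REPO=$(mktemp -d)
cd "$REPO"
git init -q
git symbolic-ref HEAD refs/heads/master
git commit -q --allow-empty -m "Initial commit"

git checkout -q -b add-email-to-install
echo "email = None" > install.py
git add install.py
git commit -q -m "Adds email to the install record (bug #NNNNNN)"
echo "# now in a transaction" >> install.py
git commit -q -a -m "Put the install update in a transaction (bug #NNNNNN)"

# Force a merge commit that ties both branch commits together
git checkout -q master
git merge -q --no-ff --no-edit add-email-to-install
git log --oneline --graph
```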

Fixups, for ninjas

If you follow this pattern you’ll become accustomed to frequently fixing up your topic branch. I created a ninja alias for it in ~/.gitconfig like this:

```ini
[alias]
    ...
    fix = "!f() { git commit -a -m \"fixup! $(git log -1 --pretty=format:%s)\" && git rebase -i --autosquash HEAD~4; }; f"
```

When on a topic branch with uncommitted changes I can then type:

```shell
git fix
```

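That commits all of my tracked changes as a fixup! commit and opens the rebase prompt with that commit already marked fixup under the last commit (that’s what --autosquash does), so I just save and exit to fold it in.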
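Here is a sketch that installs that alias in a throwaway repository’s local config and runs it, with GIT_SEQUENCE_EDITOR=true standing in for saving the pre-filled rebase prompt unchanged. Because the alias rebases HEAD~4, the history needs at least five commits, so this example (with made-up files) puts three on master before the topic commit. On newer git, git commit --fixup=HEAD writes the same fixup! subject without the git log call.

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
REPO=$(mktemp -d)
cd "$REPO"
git init -q
git symbolic-ref HEAD refs/heads/master
# The alias from the post, stored in this repository's config only
git config alias.fix '!f() { git commit -a -m "fixup! $(git log -1 --pretty=format:%s)" && git rebase -i --autosquash HEAD~4; }; f'

for n in 1 2 3; do
  echo "line $n" >> app.txt
  git add app.txt
  git commit -q -m "Master commit $n"
done

git checkout -q -b add-email-to-install
echo "email = None" > install.py
git add install.py
git commit -q -m "Adds email to the install record (bug #NNNNNN)"

# A review comment asks for one more change, left uncommitted
echo "# now in a transaction" >> install.py

# "true" accepts the todo list that --autosquash already arranged
GIT_SEQUENCE_EDITOR=true GIT_EDITOR=true git fix
git log --oneline
```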

UPDATE: As pointed out in the comments, since this alias only ever folds changes into the last commit, a quicker and simpler way to do the same thing is to amend that commit:

```ini
[alias]
    ...
    fix = "commit -a --amend -C HEAD"
```
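A sketch of the amend-based alias in a throwaway repository (files made up). -C HEAD reuses the current commit’s message, so no editor opens, and the branch still has a single commit afterwards, just with a new SHA. Extra arguments are appended to the alias, so git fix -q runs the commit quietly.

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
REPO=$(mktemp -d)
cd "$REPO"
git init -q
git symbolic-ref HEAD refs/heads/master
git config alias.fix 'commit -a --amend -C HEAD'
git commit -q --allow-empty -m "Initial commit"

git checkout -q -b add-email-to-install
echo "email = None" > install.py
git add install.py
git commit -q -m "Adds email to the install record (bug #NNNNNN)"
BEFORE=$(git rev-parse HEAD)

# Fold the follow-up change straight into the last commit
echo "# now in a transaction" >> install.py
git fix -q
```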

Synchronization with master, for ninjas

If you’re on a project that has a lot of commit activity you’ll probably want to rebase your feature branch on top of master often. I added a ninja alias to ~/.gitconfig for that too:

```ini
[alias]
    ...
    sync = "!f() { echo Syncing $1 with master && git checkout master && git pull && git checkout $1 && git rebase master; }; f"
```

When I’m on my feature branch and I want to synchronize it with all the latest changes on master, I type:

```shell
git sync add-email-to-install
```
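A sketch of the sync alias end to end: a local bare repository plays the shared upstream, a second repository plays a teammate who lands a change on master, and the alias then replays the topic branch on top of it. All paths, files, and names besides the alias itself are made up.

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
UPSTREAM=$(mktemp -d)
TEAMMATE=$(mktemp -d)
WORK=$(mktemp -d)

# Shared repository with a single commit on master
git init -q --bare "$UPSTREAM"
git --git-dir="$UPSTREAM" symbolic-ref HEAD refs/heads/master
git init -q "$TEAMMATE"
cd "$TEAMMATE"
git symbolic-ref HEAD refs/heads/master
echo "v1" > app.txt
git add app.txt
git commit -q -m "Initial commit"
git push -q "$UPSTREAM" master

# My clone, with the alias from the post and a topic branch
git clone -q "$UPSTREAM" "$WORK"
cd "$WORK"
git config alias.sync '!f() { echo Syncing $1 with master && git checkout master && git pull && git checkout $1 && git rebase master; }; f'
git checkout -q -b add-email-to-install
echo "email = None" > install.py
git add install.py
git commit -q -m "Adds email to the install record (bug #NNNNNN)"

# Meanwhile, the teammate lands a change on master
cd "$TEAMMATE"
echo "v2" >> app.txt
git commit -q -a -m "Teammate change"
git push -q "$UPSTREAM" master

# Replay my branch on top of the updated master
cd "$WORK"
git sync add-email-to-install
```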

The main benefit of syncing a branch before merging into master is that the merge can then be a fast-forward, so it won’t create a new merge commit. This also makes it safe to delete work branches later on, since they won’t look like they have unmerged changes. Syncing is also a good moment for a last-minute spot check before merging into master: Do the tests still pass? Do I need to adjust my SQL migration script?

UPDATE: Fernando Takai posted a simpler version of this in the comments using git checkout - to go back to the last branch you were on. You can then simply type git sync from the branch. Thanks!
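The exact alias from that comment isn’t reproduced here; what follows is a hypothetical reconstruction of the idea in the same kind of throwaway setup (paths, files, and names made up). git checkout - returns to the previously checked-out branch, so the alias takes no argument and must be run from the topic branch.

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
UPSTREAM=$(mktemp -d)
TEAMMATE=$(mktemp -d)
WORK=$(mktemp -d)
git init -q --bare "$UPSTREAM"
git --git-dir="$UPSTREAM" symbolic-ref HEAD refs/heads/master

# Seed master through the teammate's repository
git init -q "$TEAMMATE"
cd "$TEAMMATE"
git symbolic-ref HEAD refs/heads/master
git commit -q --allow-empty -m "Initial commit"
git push -q "$UPSTREAM" master

git clone -q "$UPSTREAM" "$WORK"
cd "$WORK"
# Hypothetical argument-free variant: "git checkout -" goes back to the topic branch
git config alias.sync '!f() { git checkout master && git pull && git checkout - && git rebase master; }; f'
git checkout -q -b add-email-to-install
echo "email = None" > install.py
git add install.py
git commit -q -m "Adds email to the install record (bug #NNNNNN)"

# The teammate lands a change on master
cd "$TEAMMATE"
git commit -q --allow-empty -m "Teammate change"
git push -q "$UPSTREAM" master

# Run from the topic branch, with no argument
cd "$WORK"
git sync
```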

Why resort to all these ninja-like git strategies?

- Using git blame on a single line of code is more likely to give your team a full picture of all the reasons why that line of code was introduced. For this same reason, we at addons.mozilla.org always link to our bug tracker in each commit.

- Your commit log will have a high signal-to-noise ratio, making it easier to skim when looking at a compare view between releases.

- Ninjas don’t make mistakes. Ever.

Random Notes

- Kernel hackers frown on rebasing, but that’s probably because many people commit to the same files, and it’s important to see what the original starting tree was when work on a new feature started. For web development, if two members of your team are working on the same line in the same file, your team isn’t communicating well enough. I rarely see conflicts on my team that aren’t resolved automatically by a three-way merge.

- After committing to master you might discover a mistake. That’s fine; make a new commit. Never fix up a commit that’s already on master, because rewriting it leaves everyone tracking master with history that no longer matches yours, and they’ll be sad!

- Where do your fixed-up commits go? They’re still there, and git reflog can show you where they went, but they’re detached from any branch, so git’s garbage collector eventually deletes them once their reflog entries expire.
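A sketch of finding a rewritten commit again, in a throwaway repository with made-up files: after a fixup rebase the old tip is no longer on any branch, but the branch’s reflog still records it as add-email-to-install@{1}, so a new branch (the name pre-rebase-backup is hypothetical) can rescue it before garbage collection does.

```shell
set -eu
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="Example Dev" GIT_COMMITTER_EMAIL="dev@example.com"
REPO=$(mktemp -d)
cd "$REPO"
git init -q
git symbolic-ref HEAD refs/heads/master
git commit -q --allow-empty -m "Initial commit"

git checkout -q -b add-email-to-install
echo "email = None" > install.py
git add install.py
git commit -q -m "Adds email to the install record (bug #NNNNNN)"
echo "# now in a transaction" >> install.py
git commit -q -a -m "Fix: put code in a transaction"
OLD_TIP=$(git rev-parse HEAD)

# Fold the second commit into the first; the old commits lose their branch
GIT_SEQUENCE_EDITOR="sed -i.bak -e '2s/^pick/fixup/'" git rebase -q -i HEAD~2
CONTAINING=$(git branch --contains "$OLD_TIP")

# The branch reflog still remembers where the branch pointed before the rebase
git reflog show -n 2 add-email-to-install
git branch pre-rebase-backup 'add-email-to-install@{1}'
```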