We want Firefox to load pages as fast as possible, so that everything a page has to offer appears on your screen as soon as it can.

The JavaScript Startup Bytecode Cache (JSBC) is an optimization made to improve the time to fully load a page. As with many optimizations, this is a trade-off between speed and memory. In exchange for faster page-load, we store extra data in a cache.

The Context

When Firefox loads a web page, it is likely that this web page will need to run some JavaScript code. This code is transferred to the browser from the network, from the network cache, or from a service worker.

JavaScript is a general purpose programming language that is used to improve web pages. It makes them more interactive, it can request dynamically loaded content, and it can improve the way web pages are programmed with libraries.

JavaScript libraries are collections of code with a wide scope and range of uses. Most of the code in these libraries (~70%) is not used while the web page is starting up. A web page’s startup lasts beyond the first paint and beyond the point when all resources are loaded, and it can even continue for a few seconds after the page feels ready to be used.

When all the bytes of one JavaScript source are received, we run a syntax parser. The goal of the syntax parser is to raise JavaScript syntax errors as soon as possible. If the source is large enough, it is syntax parsed on a different thread to avoid blocking the rest of the web page.

As soon as we know that there are no syntax errors, we can start the execution by doing a full parse of the executed functions to generate bytecode. The bytecode is a format that simplifies the execution of the JavaScript code by an interpreter, and then by the Just-In-Time compilers (JITs). The bytecode is much larger than the source code, so Firefox only generates the bytecode of executed functions.

The Design

The JSBC aims at improving the startup of web pages by saving the bytecode of used functions in the network cache.

Saving the bytecode in the cache removes the need for the syntax-parser and replaces the full parser with a decoder. A decoder has the advantages of being smaller and faster than a parser. Therefore, when the cache is present and valid, we can run less code and use less memory to get the result of the full parser.

Having a bytecode cache, however, causes two problems. The first problem concerns the cache. As JavaScript can be updated on the server, we have to ensure that the bytecode is up to date with the current source code. The second problem concerns the serialization and deserialization of JavaScript. As we have to render a page at the same time, we have to ensure that we never block the main loop used to render web pages.

Alternate Data Type

While designing the JSBC, it became clear that we should not re-implement a cache.

At first sight a cache sounds like something that maps a URL to a set of bytes. In reality, due to invalidation rules, disk space, the mirroring of the disk in RAM, and user actions, handling a cache can become a full time job.

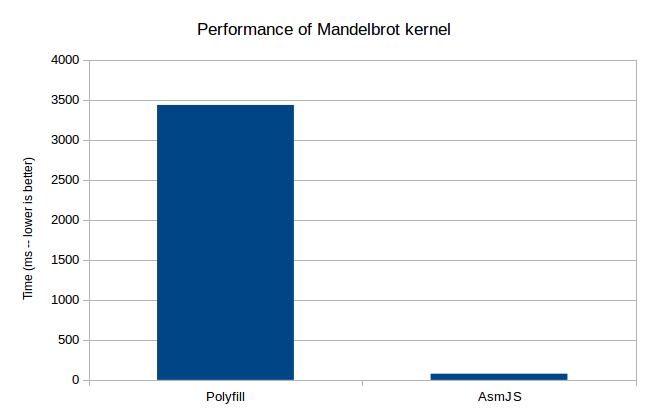

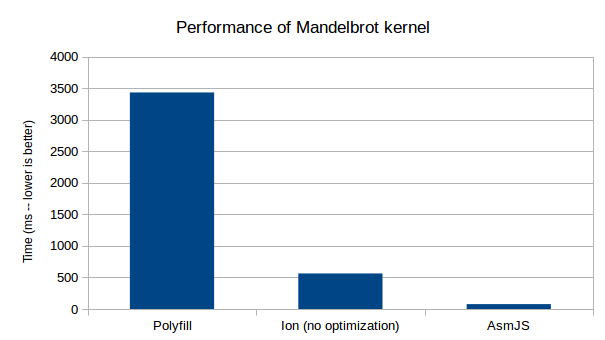

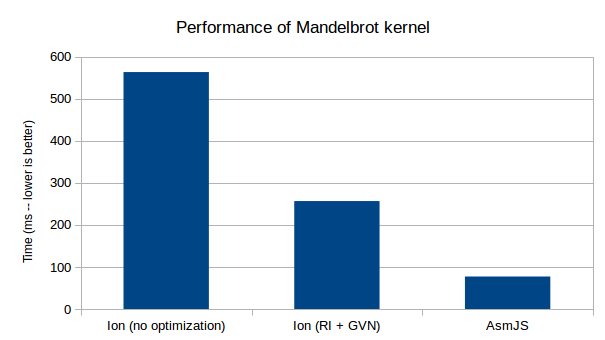

Another way to implement a cache, as we currently do for Asm.js and WebAssembly, is to map the source content to the decoded / compiled version of the source. This is impractical for the JSBC for two reasons: invalidation and user actions would have to be propagated from the first cache to this other one; and we need the full source before we can get the bytecode, so this would race between parsing and a disk load, which due to Firefox’s sandbox rules will need to deal with interprocess communication (IPC).

The approach chosen for the JSBC wasn’t to implement any new caching mechanism, but to reuse the one already available in the network cache. The network cache usually handles all URLs except those handled by a service worker or those using Firefox-internal privileged protocols.

The bytecode is stored in the network cache alongside the source code as “alternate content”; the user of the cache can request either one.

To request a resource, a page that is sandboxed in a content process creates a channel. This channel is then mirrored through IPC in the parent process, which resolves the protocol and dispatches it to the network. If the resource is already available in the cache, the cached version is used after verifying the validity of the resource using the ETag provided by the server. The cached content is transferred through IPC back to the content process.

To request bytecode, the content process annotates the channel with a preferred data type. When this annotation is present, the parent process, which has access to the cache, will look for an alternate content with the same data type. If there is no alternate content or if the data type differs, then the original content (the JavaScript source) is transferred. Otherwise, the alternate content (the bytecode) is transferred back to the content process with an extra flag repeating the data type.

To save the bytecode, the content process has to keep the channel alive after having requested an alternate data type. When the bytecode is ready to be encoded, it opens a stream to the parent process. The parent process will save the given stream as the alternate data for the resource that was initially requested.
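The request and save paths described above can be pictured with a toy model. This is only a sketch of the idea, not Firefox's network-stack interface: the `NetworkCache` class, its method names, and the `"js-bytecode/v1"` type string are all hypothetical, and IPC, ETag validation, and channels are left out entirely.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class CacheEntry:
    source: bytes                     # original content (the JavaScript source)
    alt_type: Optional[str] = None    # data type of the alternate content, if any
    alt_data: Optional[bytes] = None  # alternate content (for the JSBC, bytecode)


class NetworkCache:
    """Toy stand-in for the parent-process cache (hypothetical interface)."""

    def __init__(self) -> None:
        self._entries: Dict[str, CacheEntry] = {}

    def store_source(self, url: str, source: bytes) -> None:
        # In this toy model, storing new source replaces the whole entry,
        # which also drops any alternate content saved for the old source.
        self._entries[url] = CacheEntry(source)

    def open(self, url: str, preferred_type: Optional[str] = None
             ) -> Tuple[bytes, Optional[str]]:
        """Return (content, data type); the type is None for original content."""
        entry = self._entries[url]
        if preferred_type is not None and entry.alt_type == preferred_type:
            # Alternate content exists with the requested type: send it back,
            # repeating the data type so the caller knows what it received.
            return entry.alt_data, entry.alt_type
        # No alternate content, or a different type: fall back to the source.
        return entry.source, None

    def save_alternate(self, url: str, data_type: str, data: bytes) -> None:
        # Attach alternate content to the resource that was initially requested.
        entry = self._entries[url]
        entry.alt_type, entry.alt_data = data_type, data


BYTECODE_TYPE = "js-bytecode/v1"  # hypothetical type string
URL = "https://example.org/app.js"

cache = NetworkCache()
cache.store_source(URL, b"function f(){}")
first = cache.open(URL, BYTECODE_TYPE)    # source comes back, type is None
cache.save_alternate(URL, BYTECODE_TYPE, b"\x00bytecode")
second = cache.open(URL, BYTECODE_TYPE)   # bytecode comes back with its type
```

Because the alternate content lives inside the same entry as the source, whatever invalidates the source in this model also invalidates the bytecode, which is the property that motivated reusing the network cache.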

This API was implemented in Firefox’s network stack by Valentin Gosu and Michal Novotny, and their work was necessary to make the JSBC possible. The first advantage of this interface is that it can also be implemented by Service Workers; this support is currently being added in Firefox 59 by Eden Chuang and the service worker team. The second advantage of this interface is that it is not specific to JavaScript at all, and we could also save other forms of cached content, such as decoded images or precompiled WebAssembly scripts.

Serialization & Deserialization

SpiderMonkey already had a serialization and deserialization mechanism named XDR. This part of the code was used in the past to encode and decode Firefox’s internal JavaScript files, to improve Firefox startup. Unfortunately, XDR serialization and deserialization cannot handle lazily-parsed JavaScript functions and block the main thread of execution.

Saving Lazy Functions

Since 2012, Firefox parses functions lazily. XDR was meant to encode fully parsed files. With the JSBC, we need to avoid parsing unused functions. Most of the functions that are shipped to users are either never used, or not used during page startup. We therefore added support for encoding functions the way they are represented when they are unused, which is with start and end offsets in the source. As a result, unused functions consume only a minimal amount of space in addition to that taken by the source code.
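To see why lazy functions are cheap to store, here is a minimal sketch of the idea. The record layout (a tag byte followed by fixed-width offsets) is invented for illustration and is not XDR's actual format; `FunctionRecord`, `encode_function`, and `delazify_source` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FunctionRecord:
    start: int                        # offset of the function's first character
    end: int                          # offset one past its last character
    bytecode: Optional[bytes] = None  # present only if the function was executed


def encode_function(rec: FunctionRecord) -> bytes:
    """Encode one function: tag byte, two 4-byte offsets, then bytecode if any."""
    header = rec.start.to_bytes(4, "little") + rec.end.to_bytes(4, "little")
    if rec.bytecode is None:
        # Lazy form: 9 bytes, however long the function's source text is.
        return b"L" + header
    return b"B" + header + len(rec.bytecode).to_bytes(4, "little") + rec.bytecode


def delazify_source(source: str, rec: FunctionRecord) -> str:
    """Recover the text to parse when a lazy function is finally called."""
    return source[rec.start:rec.end]
```

The lazy record only makes sense if the source it points into is still reachable later, since the offsets are all that was kept for that function.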

Once a web page has started up, or in case of a different execution path, the source might be needed to delazify functions that were not cached. As such, the source must be available without blocking. The solution is to embed the source within the bytecode cache content. Instead of storing the raw source, the same way it is served by the network cache, it is stored in UCS2 encoding as compressed chunks¹, the same way we represent it in memory.

Non-Blocking Transcoding

XDR is a main-thread only blocking process that can serialize and deserialize bytecode. Blocking the main thread is problematic on the web, as it hangs the browser, making it unresponsive until the operation finishes. Without rewriting XDR, we managed to make this work such that it does not block the event loop. Unfortunately, deserialization and serialization could not both be handled the same way.

Deserialization was the easier of the two. As we already support parsing JavaScript sources off the main thread, decoding is just a special case of parsing, which produces the same output with a different input. So if the decoded content is large enough, we will transfer it to another thread in order to be decoded without blocking the main thread.
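The dispatch decision can be sketched as follows. This only illustrates the "large enough goes to another thread" rule: the cutoff value is hypothetical rather than Firefox's real threshold, the `decode` function is a placeholder, and Python threads stand in for the engine's helper threads.

```python
from concurrent.futures import Future, ThreadPoolExecutor

OFF_THREAD_MIN_BYTES = 1024  # hypothetical cutoff, not Firefox's actual value
_pool = ThreadPoolExecutor(max_workers=1)


def decode(buf: bytes) -> str:
    # Placeholder for the real decoder; here the "bytecode" is just UTF-8 text.
    return buf.decode("utf-8")


def decode_async(buf: bytes) -> Future:
    """Decode small buffers immediately and large ones on a helper thread."""
    if len(buf) >= OFF_THREAD_MIN_BYTES:
        # Large input: hand it to the pool so the caller is not blocked.
        return _pool.submit(decode, buf)
    # Small input: the thread hop would cost more than decoding in place.
    done: Future = Future()
    done.set_result(decode(buf))
    return done
```

Returning a `Future` in both cases lets the caller treat the two paths the same way, much as the decoder and the parser produce the same output from different inputs.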

Serialization was more difficult. As it uses resources handled by the garbage collector, it must remain on the main thread. Thus, we cannot use another thread as with deserialization. In addition, the garbage collector might reclaim the bytecode of unused functions, and some objects attached to the bytecode might be mutated after execution, such as the object held by the JSOP_OBJECT opcode. To work around these issues, we are incrementally encoding the bytecode as soon as it is created, before its first execution.

To incrementally encode with XDR without rewriting it, we encode each function separately, along with location markers where the encoded function should be stored. Before the first execution we encode the JavaScript file with all functions encoded as lazy functions. When the function is requested, we generate the bytecode and replace the encoded lazy functions with the version that contains the bytecode. Before saving the serialization in the cache, we replace all location markers with the actual content, thus linearizing the content as if we had produced it in a single pass.
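The marker-and-replace scheme can be sketched in a few lines. This is a simplified model under stated assumptions, not SpiderMonkey's encoder: the `IncrementalEncoder` class is hypothetical, integers play the role of location markers, and the byte strings are readable stand-ins for real encoded data.

```python
from typing import Dict, List, Union

Piece = Union[bytes, int]  # raw encoded bytes, or a marker naming a function slot


class IncrementalEncoder:
    """Keeps each function's encoding in its own slot until linearization."""

    def __init__(self) -> None:
        self._root: List[Piece] = []
        self._slots: Dict[int, List[Piece]] = {}

    def set_root(self, pieces: List[Piece]) -> None:
        # Encoding of the file itself, with markers where functions belong.
        self._root = list(pieces)

    def set_function(self, func_id: int, pieces: List[Piece]) -> None:
        # First call stores the lazy form; a later call replaces it with the
        # bytecode form, which may contain markers for inner functions.
        self._slots[func_id] = list(pieces)

    def linearize(self) -> bytes:
        """Replace every marker with its slot's content, recursively."""
        out = bytearray()

        def emit(pieces: List[Piece]) -> None:
            for piece in pieces:
                if isinstance(piece, int):
                    emit(self._slots[piece])
                else:
                    out.extend(piece)

        emit(self._root)
        return bytes(out)


enc = IncrementalEncoder()
# Before first execution: the file is encoded with every function lazy.
enc.set_root([b"script{", 1, b",", 2, b"}"])
enc.set_function(1, [b"lazy(0,40)"])
enc.set_function(2, [b"lazy(41,90)"])
# Function 1 is requested: its slot now holds the version with bytecode.
enc.set_function(1, [b"code(0,40,<bytecode>)"])
linear = enc.linearize()
```

Only the slot of the function being compiled is touched at each step, so the work done on the main thread stays proportional to that one function rather than to the whole file.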

The Outcome

The Threshold

The JSBC is a trade-off between encoding/decoding time and memory usage, where the right balance is determined by the number of times the page is visited. As this is a trade-off, we have the ability to choose where to set the cursor, based on heuristics.

To find the threshold, we measured the time needed to encode, the time gained by decoding, and the distribution of cache hits. The best threshold is the value that minimizes the cost function over all page loads. Thus we are comparing the cost of loading a page without any optimization (x1), the cost of loading a page and encoding the bytecode (x1.02 — x1.1), and the cost of decoding the bytecode (x0.52 — x0.7). In the best/worst case, the cache hit threshold would be 1 or 2, if we only considered the time aspect of the equation.
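As a rough worked example of the time side of this trade-off, the sketch below uses only the relative costs quoted above and ignores the measured distribution of cache hits, so it is not the analysis that produced the real threshold. The function names are hypothetical.

```python
def total_cost(loads: int, threshold: int, encode: float, decode: float) -> float:
    """Cost, in units of one plain page load, of `loads` visits when bytecode
    is encoded on visit number `threshold` and decoded on every later visit."""
    if loads < threshold:
        return float(loads)  # never reached the encoding visit
    plain_visits = threshold - 1
    decoded_visits = loads - threshold
    return plain_visits + encode + decoded_visits * decode


def break_even_loads(threshold: int, encode: float, decode: float) -> int:
    """Smallest number of visits at which caching is no slower than not caching.
    Assumes decode < 1, otherwise the cache never pays off."""
    loads = threshold
    while total_cost(loads, threshold, encode, decode) > loads:
        loads += 1
    return loads


best = break_even_loads(1, encode=1.02, decode=0.52)   # 2
worst = break_even_loads(1, encode=1.1, decode=0.7)    # 2
```

With these figures, encoding costs at most 0.1 of a page load extra, while each decoded load saves at least 0.3, so a single cache hit already repays the encoding in terms of time.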

From a user’s point of view, however, it seems that we should neither penalize the first visit to a website nor save content to disk that may never be reused. You might read a one-time article on a page that you will never visit again, and saving the cached bytecode to disk for future visits sounds like a net loss. For this reason, the current threshold is set to encode the bytecode only on the 4th visit, thus making it available on the 5th and subsequent visits.
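The resulting policy can be written down as a small decision function. This is a sketch only: the names are hypothetical, and the choice to keep trying to encode on later visits when no bytecode was saved is an assumption, not something stated in this post.

```python
ENCODE_ON_VISIT = 4  # from the text: encode the bytecode on the 4th visit


def plan_for_visit(visit_number: int, has_cached_bytecode: bool) -> str:
    """Decide how a script is loaded on a given visit (visits start at 1)."""
    if has_cached_bytecode:
        return "decode"
    if visit_number >= ENCODE_ON_VISIT:
        # Assumption: if no bytecode was saved earlier, try again.
        return "parse+encode"
    return "parse"


def simulate(visits: int) -> list:
    """Replay consecutive visits, assuming every encoding is saved."""
    plans, cached = [], False
    for n in range(1, visits + 1):
        plan = plan_for_visit(n, cached)
        plans.append(plan)
        if plan == "parse+encode":
            cached = True
    return plans
```

Calling `simulate(6)` yields three plain parses, one parse with encoding, and then decodes from the 5th visit onward.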

The Results

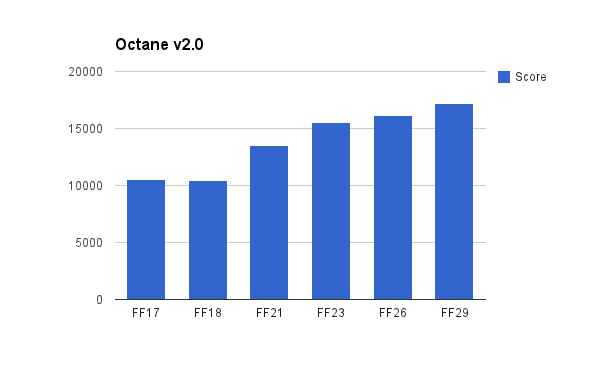

The JSBC is surprisingly effective, and instead of going deeper into explanations, let’s see how it behaves on real websites that frequently load JavaScript, such as Wikipedia, Google Search results, Twitter, Amazon and the Facebook Timeline.

This graph represents the average time between the start of navigation and when the onload event for each website is fired, with and without the JavaScript Startup Bytecode Cache (JSBC). The error bars are the first quartile, median and third quartile values, over the set of approximately 30 page loads for each configuration. These results were collected on a new profile with the apptelemetry addon and with tracking protection enabled.

While this graph shows the improvement for all pages (wikipedia: 7.8%, google: 4.9%, twitter: 5.4%, amazon: 4.9% and facebook: 12%), this does not account for the fact that these pages continue to load even after the onload event. The JSBC is configured to capture the execution of scripts until the page becomes idle.

Telemetry results gathered during an experiment on Firefox Nightly’s users reported that when the JSBC is enabled, page loads are on average 43ms faster, while being effective on only half of the page loads.

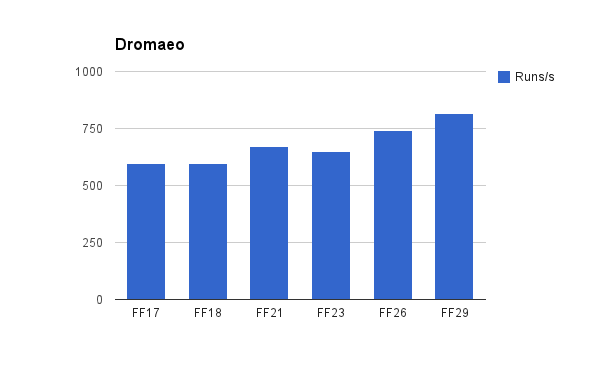

The JSBC neither improves benchmarks nor regresses them. Benchmarks’ behaviour does not represent what users actually do when visiting a website: users would not reload a page 15 times to check the number of CPU cycles. The JSBC is tuned to capture everything until the page becomes idle. Benchmarks are tuned to avoid having an impatient developer watching a blank screen for ages, and thus they do not wait for the bytecode to be saved before starting over.

Thanks to Benjamin Bouvier and Valentin Gosu for proofreading this blog post and suggesting improvements, and a special thank you to Steve Fink and Jérémie Patonnier for improving this blog post.

¹ compressed chunk: Due to a recent regression this is no longer the case, and it might be interesting for a new contributor to fix.