(“This Week in Glean” is a series of blog posts that the Glean Team at Mozilla is using to try to communicate better about our work. They could be release notes, documentation, hopes, dreams, or whatever: so long as it is inspired by Glean. You can find an index of all TWiG posts online.)

I’ve written before about data, but never tackled the business perspective. To a business, what is data? It could be considered an asset, I suppose: a tool, like a printer, to make your business more efficient.

But like that printer and other assets, data has a cost. We can quite easily look up how much it costs to store arbitrary data on AWS (less than 2.3 cents USD per GB per month) but that only provides the cost of the data at rest. It doesn’t consider what it took for the data to get there or how much it costs to be useful once it’s stored.

So let’s imagine that you come across a decision that can only be made with data. You’ve tried your best to do without it, but you really do need to know how many Live Bookmarks there are per Firefox profile… maybe it’s in wide use and we should assign someone to spruce it up. Maybe almost no one uses it and so Live Bookmarks should be removed and instead become a feature provided by extensions.

This should be easy, right? Slap the number into an HTTP payload and send it to a Mozilla-controlled server. Then just count them all up!

As one of the Data Organization’s unofficial mottos puts it: Counting is Harder Than It Looks.

Let’s look at the full lifecycle of the metric from ideation and instrumentation to expiry and deletion. I’ll measure money and time costs, being clear about the assumptions guiding my estimates and linking to sources where available.

For a rule of thumb, time costs are $50 per hour. Developers and Managers and PMs cost more than $100k per year in total compensation in many jurisdictions, and less in many others. Let’s go with this because why not. I considered ignoring labour costs altogether because these people are doing their jobs whether they’re performing their part in this collection or not… but that’s assuming they have the spare capacity and would otherwise be doing nothing. Everyone I talk to is busy, so everyone’s doing this data collection work instead of something else they could be doing: so there is an opportunity cost.
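As a rough sketch of how that rule of thumb turns time into dollars: the snippet below assumes about 2,000 working hours in a year (an assumption added for this sketch, not a figure from the text), which makes $100k a year come out to $50 an hour. The helper name `labour_cost` is made up for illustration.

```python
# Rule of thumb from the text: time costs $50 per hour.
# Assumption added for this sketch: about 2,000 working hours per year.
ANNUAL_COMPENSATION_USD = 100_000
WORKING_HOURS_PER_YEAR = 2_000  # assumed: 50 weeks x 40 hours
HOURLY_RATE_USD = ANNUAL_COMPENSATION_USD / WORKING_HOURS_PER_YEAR


def labour_cost(minutes, hourly_rate=HOURLY_RATE_USD):
    """Dollar cost of `minutes` of one person's time."""
    return minutes / 60 * hourly_rate


for minutes in (30, 60):
    print(f"{minutes} min -> ${labour_cost(minutes):.2f}")
```

Every labour figure later in the post is either a half hour or a full hour of one person's time run through this conversion.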

Fixed costs, like the cost of building and maintaining a data collection library, data collection pipeline, bug trackers, code review tooling, and dev computers, are all ignored. We could amortize that per data collection… but it’d probably work out to $0 anyway.

Also, for the purposes of measuring data we’re counting only the size of the data itself (the count of the number of Live Bookmarks). To be more complete we’d need to amortize the cost of sending the data point (HTTP headers, payload metadata, the data point’s identifier, etc.) and factor in additional complexity (transfer encoding, compression, etc.). This would require a lot of words, and in the present Firefox Telemetry system this amortizes to 0 because the “main” ping has many data points in it and gzip compression is pretty good.

Also, this is a Best Case Estimate. I keep every assumption small so that the result is a lower bound: the cost if everything goes according to plan and everyone acts the way they should.

Ideation – Time: 30min, Cost: $25

How long does it take you to figure out how to measure something? You need to know the feature you’re measuring, the capabilities of the data collection library you’re using to do the measuring, and some idea of how you’ll analyse it at the other end. If you’re trying to send something clever like the state of a customizable UI element or do something that requires custom analysis, this will take longer and take more people which will cost more money.

But for our example we know what we’re collecting: numbers of things. The data collection library is old and well understood. The analysis is straightforward. This takes one person a half hour to think through.

Instrumentation – Time: 60min, Cost: $50

Knowing the feature is not the same as knowing the code. You need a subject matter expert (developer who knows the feature and the code as well as the data collection library’s API) to figure out on exactly which line of code we should call exactly what method with exactly which count. If it’s complicated, several people may need to meet in order to figure out what to do here: are the input event timestamps the same format on Windows and Mac? Does time when the computer is asleep count or not?

For our example we have questions: Should we count the number of Live Bookmarks in the database? The number in the Bookmark Menu? The Bookmark Toolbar? What if the user deletes one, should we count before or after the delete?

This is small enough that we can find a single subject matter expert who knows it all. They read some documentation, make some decisions, write some code, and take an hour to do this themselves.

Review – Time: 30min, Cost: $25

Both the code and the data collection need review. The simplicity of the data collection and the code make this quick. Mozilla’s code review tooling helps a lot here, too. Though it takes a day or two for the Module Peer and the Data Steward to find time to get to the reviews, it only takes a combination of a half hour for them to okay it to ship.

Storage (user) – Cost: $0

Data takes up space. Its definition takes up some bytes in the Firefox binary that you installed. It takes up bytes in your computer’s memory. It takes up bytes on disk while it waits to be sent and afterwards so you can look at it if you type about:telemetry into your address bar. (Try it yourself!)

The marginal cost to the user of the tens of bytes of memory and disk from our single number of Live Bookmarks is most accurately represented as a zero not only because memory and disk are excitingly cheap these days but also because there was likely some spare capacity in those systems.

Bandwidth (user) – Cost: $0.00 (but not zero)

Data requires network bandwidth to be reported, and network bandwidth costs money. Many consumer plans are flat-rate and so the marginal cost of the extra bytes is not felt at all (we’re using a little of the slack), so we can flatten this to zero.

But hey, let’s do some recreational math for fun! (We all do this in our spare time, right? It’s not just me?)

If we were paying per-byte and sending this from a smartphone, the first GB in Canada (where service providers make more money from mobile data than anywhere else in the world) costs $30 per month. That’s about 3 thousandths of a cent per kilobyte.

The data collection is a number, which is about 4 bytes of data. We send it about three times per day and individual profiles are in use by Firefox on average 12 days a month (engagement ratio of 0.4). (If you’re interested, this is due to a bunch of factors including users having multiple profiles at school, work, and home… but that’s another blog post).

4 bytes x 3 per day x 12 days in a month ~= 144 bytes per month

Thus a more accurate cost estimate of user bandwidth for this data would be 4 ten-thousandths of a cent (in Canadian dollars). It would take over 200 years of reporting this figure to cost the user a single penny. So let’s call it 0 for our purposes here.

Though… however close the cost is to 0, it isn’t 0. This means that, over time and over enough data points and over our full Firefox population, there is a measurable cost. Though its weight is light when it is but a single data point sent infrequently by each of our users, put together it is still hefty enough that we shouldn’t ignore it.
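The recreational math above can be sketched in a few lines. This is only a sketch: it assumes binary units (1 GB = 1024 × 1024 kB), which is a guess on my part about how the figures were rounded, and all the variable names are made up for illustration.

```python
# Figures from the text. Binary units are an assumption of this sketch.
PRICE_PER_GB_CENTS = 30 * 100     # first GB of mobile data: $30
KB_PER_GB = 1024 * 1024           # assumed binary units
BYTES_PER_KB = 1024
BYTES_PER_REPORT = 4              # one integer count
REPORTS_PER_DAY = 3
ACTIVE_DAYS_PER_MONTH = 12        # engagement ratio of 0.4

cents_per_kb = PRICE_PER_GB_CENTS / KB_PER_GB
bytes_per_month = BYTES_PER_REPORT * REPORTS_PER_DAY * ACTIVE_DAYS_PER_MONTH
cents_per_month = bytes_per_month / BYTES_PER_KB * cents_per_kb
years_to_one_cent = 1 / cents_per_month / 12

print(f"{cents_per_kb:.4f} cents per kB")
print(f"{bytes_per_month} bytes per profile per month")
print(f"{cents_per_month:.6f} cents per profile per month")
print(f"{years_to_one_cent:.0f} years to cost one cent")
```

With decimal units instead, the answer comes out a little under 200 years rather than a little over, which doesn't change the conclusion.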

Bandwidth (Mozilla) – Cost: $0

Internet Service Providers have a nice gig: they can charge the user when the bytes leave their machine and charge Mozilla when the bytes enter their machine. However, cloud data platform providers (Amazon’s AWS, Google’s GCP, Microsoft’s Azure, etc) don’t charge for bandwidth for the data coming into their services.

You do get charged for bandwidth _leaving_ their systems. And for anything you do _on_ their systems. If I were feeling uncharitable I guess I’d call this a vendor lock-in data roach motel.

At any rate, the cost for this step is 0.

Pipeline Processing – Cost: $15.12

Once our Live Bookmarks data reaches the pipeline, there are a few steps the data needs to go through. It needs to be decompressed, examined for adherence to the data schema (malformed data gets thrown out), and a response written to the client to tell it that we received it all okay. It needs to be processed, examined, and funneled to the correct storage locations while also being made available for realtime analysis if we need it.

For our little 4-byte number that shouldn’t be too bad, right?

Well, now that we’re on Mozilla’s side of the operation we need to consider the scale. Just how many Firefox profiles are sending how many of these numbers at us? About 250M of them each month. (At time of writing this isn’t up-to-date beyond EOY2019. Sorry about that. We’re working on it). With an engagement ratio of about 0.4, data being sent about thrice a day, and each count of Live Bookmarks taking up 4 bytes of space, we’re looking at 12GB of data per month.

At our present levels, ingestion and processing costs about $90 per TB. This comes out to $1.08 of cost for this step, each month. Multiplied by 14 “months”, that’s $15.12.
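Spelled out as a minimal sketch, taking the 12GB monthly volume, the $90 per TB rate, and the 14 “months” straight from the text (the variable names are made up for illustration):

```python
GB_PER_TB = 1000
GB_PER_MONTH = 12           # monthly volume quoted in the text
COST_PER_TB_USD = 90        # ingestion and processing
COLLECTION_MONTHS = 14      # the 14 "months" discussed under "About Months"

monthly_cost = GB_PER_MONTH / GB_PER_TB * COST_PER_TB_USD
lifetime_cost = monthly_cost * COLLECTION_MONTHS

print(f"per month: ${monthly_cost:.2f}")
print(f"lifetime:  ${lifetime_cost:.2f}")
```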

About Months

In saying “14 months” for how long the pipeline needs to put up with the collection coming from the entire Firefox population I glossed over quite a lot of detail. The main piece of information is that the default expiry for new data collections in Firefox is five or six Firefox versions (which should come out to about six months).

However, as I’ve mentioned before, updates don’t all happen at once. Though we have about 90% of the Firefox population within 3 versions of up-to-date at any one time, there’s a long tail of Firefox profiles from ancient versions sending us data.

To calculate 14 months I looked at the total data collection volumes for five versions of Firefox: Firefox 69-73 (inclusive). This avoids Firefox ESR 68 gumming up the works (its support lifetime is much longer than a normal release, and we’re aiming for a best-case cost estimate). It is also far enough in the past that Firefox 69 ought to be winding down around now, recent enough that we’ll not have thrown out the data yet (more on retention periods later), and close in behaviour to the releases we’re doing this year.

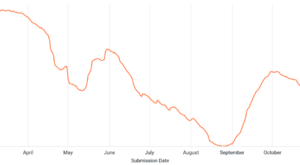

Here’s what that looks like:

So I said this was far enough in the past that Firefox 69 ought to be winding down around now? Well, if you look really closely at the bottom-right you might be able to see that we’re still receiving data from users still on that Firefox version. Lots of them.

But this is where we are in history, and I’m not running this query again (it only cost 15 cents, but it took half an hour), so let’s do the math. The total amount of data received from these five releases so far divided by the amount of data I said above that the user population would be sending each month (12GB) comes out to about 13.7 months.

To account for the seriously-annoying number of pings from those five versions that we presumably will continue receiving into the future, I rounded up to 14.

Storage (Mozilla) – Cost: $84

Once the data has been processed it needs to live somewhere. This costs us 2 cents per gigabyte stored, per month we decide to store it. 12GB per month means $0.24, right?

Well, no. We don’t have a way to only store this data for a period of time, so we need to store it for as long as the other stuff we store. For year-over-year forecasting we retain data for two years plus one month: 25 months. (Well, we presently retain data a bit longer than that, but we’re getting there.) So we need to take the 12GB we get each month and store it for 25 months. When we do that for each of the 14 “months” of data we get:

12GB/“month” x 14 “months” x $0.02 per GB per month x 25 months retention = $84

Now if you think this “2 cents per GB” figure is a little high: it is! We should be able to take advantage of lower storage costs for data we don’t write to any more. Unfortunately, we do write to it all the time servicing Deletion Requests (which I’ll get to in a little bit).
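The storage formula above, as a minimal sketch using only the figures from the text (variable names are made up for illustration):

```python
GB_PER_MONTH = 12               # monthly volume quoted in the text
COLLECTION_MONTHS = 14          # how long the collection keeps arriving
STORAGE_USD_PER_GB_MONTH = 0.02
RETENTION_MONTHS = 25           # two years plus one month

# What it would cost if each month's data were only stored for one month.
one_month_only = GB_PER_MONTH * STORAGE_USD_PER_GB_MONTH

# What it costs when every month's data is retained for the full period.
lifetime_storage = (
    GB_PER_MONTH * COLLECTION_MONTHS * STORAGE_USD_PER_GB_MONTH * RETENTION_MONTHS
)

print(f"one month, stored for one month: ${one_month_only:.2f}")
print(f"lifetime storage:                ${lifetime_storage:.2f}")
```

The retention period is the biggest multiplier in that product, which is why it comes up again in the lessons at the end.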

Analysis (Mozilla) – Time: 30min, Cost: $25.55

Data stored on some server someplace is of no use. Its value is derived through interrogating it, faceting its aggregations across interesting dimensions, picking it apart and putting it back together.

If this sounds like processing time Mozilla needs to pay for, you are correct!

On-demand analyses in Google’s BigQuery cost $5 per TB of data scanned. Mozilla’s spent some decent time thinking about query patterns to arrange data in a way that minimizes the amount of data we need to look at in any given analysis… but it isn’t perfect. To deliver us a count of the number of Live Bookmarks across our user base we’re going to have to scan more than the 12GB per month.

But this is a Best Case Estimate so let’s figure out how much a perfect query (one that only had to scan the data we wanted to get out of it) would cost:

12GB / 1000GB/TB * 5 $/TB = $0.06

That gives you back a sum of all the Live Bookmarks reported from all the Firefox profiles in a month. The number might be 5, or 5 million, or 5 trillion.

In other words, the number is useless. The real question you want to answer is “How much is this feature used?” which is less about the number of Live Bookmarks reported than it is Live Bookmarks stored per Firefox profile. If the 5 million Live Bookmarks are five thousand reports of 1000 Live Bookmarks all from one fellow named Nick, then we shouldn’t be investing in a feature used by one person, however much that one person uses it.

If the 5 million Live Bookmarks come from one hundred thousand profiles, each reporting a moderate number of bookmarks a handful of times, then Live Bookmarks is more likely a broadly-used feature and might just need a little kick to be used even more.

So we need to aggregate the counts per-client and then look at the distribution. We can ask, over all the reports of Live Bookmarks from this one Firefox profile, give us the maximum number reported. Then show us a graph (like this). A perfect query of a month’s data will not only need to look at the 12GB of the month’s Live Bookmarks count, but also the profile identifier (client_id) so we can deduplicate reports. That id is a UUID and is represented as a 36-byte string. This adds another 8x data to scan compared to the 4B Live Bookmarks count we were previously looking at, ballooning our query to 108GB and our cost to $0.54.

But wait! We’re doing two steps: one to crunch these down to the 250M profiles that reported data that month and then a second to count the counts (to make our graph). That second step needs to scan the 250M 4B “maximum counts”, which adds another half a cent.
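Here is a minimal sketch of those two steps in plain Python. The reports are invented for illustration (real ones would carry a UUID `client_id` and live in the data warehouse), and an actual analysis would be a query over the stored data rather than a loop like this.

```python
from collections import Counter

# Hypothetical (client_id, live_bookmarks_count) reports.
reports = [
    ("client-a", 0), ("client-a", 2), ("client-a", 1),
    ("client-b", 0), ("client-b", 0),
    ("client-c", 1000), ("client-c", 1000),
    ("client-d", 2),
]

# Step 1: collapse each profile's reports to the maximum count it sent.
max_per_client = {}
for client_id, count in reports:
    max_per_client[client_id] = max(count, max_per_client.get(client_id, 0))

# Step 2: count the counts, i.e. how many profiles peaked at each value.
distribution = Counter(max_per_client.values())

print("naive sum of all reports:", sum(count for _, count in reports))
for count, profiles in sorted(distribution.items()):
    print(f"{count:>5} Live Bookmarks: {profiles} profile(s)")
```

The naive sum is dominated by the one heavy user, while the distribution shows that most profiles have few or no Live Bookmarks at all.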

So our Best Case Estimate for querying the data to get the answer to our question is: $0.55 (I rounded up the half cent).

But don’t forget you need an analyst to perform this analysis! Assuming you have a mature suite of data analysis tooling, some rigorous documentation, and a well-entrenched culture of everyone helping everyone, this shouldn’t take longer than a half-hour of a single person’s time. Which is another $25, coming to a grand total of $25.55.
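The analysis figures can be traced step by step in a short sketch. It takes the text's numbers as given, including the “another 8x” multiplier for the `client_id`; the function and variable names are made up for illustration.

```python
GB_PER_TB = 1000
SCAN_USD_PER_TB = 5          # on-demand rate quoted in the text
COUNTS_GB = 12               # one month of 4-byte counts
CLIENT_ID_FACTOR = 8         # the text's "another 8x" for the client_id
PROFILES = 250_000_000
BYTES_PER_COUNT = 4
ANALYST_USD = 25             # half an hour at $50 per hour


def scan_cost(gigabytes):
    """Cost in dollars of scanning `gigabytes` of data."""
    return gigabytes / GB_PER_TB * SCAN_USD_PER_TB


counts_only = scan_cost(COUNTS_GB)
step_one_gb = COUNTS_GB * (1 + CLIENT_ID_FACTOR)
step_one = scan_cost(step_one_gb)
step_two_gb = PROFILES * BYTES_PER_COUNT / 1e9
step_two = scan_cost(step_two_gb)
total = step_one + step_two + ANALYST_USD

print(f"counts only:          ${counts_only:.2f}")
print(f"step one ({step_one_gb} GB):  ${step_one:.2f}")
print(f"step two ({step_two_gb:.0f} GB):    ${step_two:.3f}")
print(f"total before rounding the half cent up: ${total:.3f}")
```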

Deletion – Cost: $21

The data’s journey is not complete because any time a user opts their Firefox profile out of data collection we receive an order to delete what data we’ve previously received from that profile. To delete we need to copy out all the not-deleted data into new partitions and drop the old ones. This is a processing cost that is currently using the ad hoc $5/TB rate every time we process a batch of deletions (monthly).

Our Live Bookmarks count is adding 4 bytes of data per row that needs to be copied over. Each of those counts (excepting the ones that are deleted) needs to be copied over 25 times (retention period of 25 months). The amount of deleted data is small (Firefox’s data collection is very specifically designed to only collect what is necessary, so you shouldn’t ever feel as though you need to opt out and trigger deletion) so we’ll ignore its effect on the numbers for the purposes of making this easier to calculate.

12 GB/“month” x 14 “months” x 25 deletions / 1000GB/TB x 5 $/TB = $21

The total lifetime cost of all the deletion batches we process for the Live Bookmarks counts we record is $21. We’re hoping to knock this down a few pegs in cost, but it’ll probably remain in the “some dollars” order of magnitude.
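The same deletion arithmetic as a minimal sketch, using only figures from the text and ignoring the (small) amount of data that actually gets deleted, as the text does. Variable names are made up for illustration.

```python
GB_PER_TB = 1000
GB_PER_MONTH = 12            # monthly volume quoted in the text
COLLECTION_MONTHS = 14
DELETION_BATCHES = 25        # one per month of the 25-month retention period
SCAN_USD_PER_TB = 5          # ad hoc processing rate

# Every stored byte gets copied once per deletion batch while it is retained.
gb_copied = GB_PER_MONTH * COLLECTION_MONTHS * DELETION_BATCHES
deletion_cost = gb_copied / GB_PER_TB * SCAN_USD_PER_TB

print(f"data copied over the lifetime: {gb_copied} GB")
print(f"deletion cost: ${deletion_cost:.2f}")
```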

The bigger share of this cost is actually in Storage, above. If we didn’t have to delete our data then, after 90 days, the monthly storage cost would drop by half. This means that, if you want to assign the dollars a little more like blame, Storage costs are “only” $47.04 (full price for 3 months, half for 22) and Deletion costs are $57.96.

Grand Total: $245.67

In the best case, a collection of a single number from the wide Firefox user base will cost Mozilla almost $246 over the collection’s lifetime, split about 50% between labour and data computing platform costs.
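To check the addition, here is a minimal sketch that totals the line items from each section and splits them into labour and platform costs. The dictionary keys are labels made up for this sketch; the dollar amounts are the ones given in the sections above.

```python
labour = {
    "ideation": 25.00,
    "instrumentation": 50.00,
    "review": 25.00,
    "analysis (analyst time)": 25.00,
}
platform = {
    "pipeline processing": 15.12,
    "storage": 84.00,
    "analysis (queries)": 0.55,
    "deletion": 21.00,
}

labour_total = sum(labour.values())
platform_total = sum(platform.values())
grand_total = labour_total + platform_total
labour_share = labour_total / grand_total

print(f"labour:   ${labour_total:.2f}")
print(f"platform: ${platform_total:.2f}")
print(f"total:    ${grand_total:.2f} ({labour_share:.0%} labour)")
```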

So that’s it? Call it a wrap? Well… no. There are some cautionary tales to be learned here.

Lessons

0) Lean Data Practices save money. Our Data Collection Review Request form ensures that we aren’t adding these costs to Mozilla and our users without justifying that the collection is necessary. These practices were put into place to protect our users’ privacy, but they do an equally good job of reducing costs.

1) The simplest permanent data collection costs $228 its first year and $103 every year afterwards even if you never look at it again. It costs $25 (30min) to expire a collection, which pays for itself in a maximum of 2.9 months. (The payback period is much shorter if the data collection is bigger than 4B, like a Histogram, because the yearly costs are higher.) The best time to have expired that collection was ages ago: the second-best time is now.

2) Spending extra time thinking about a data collection saves you time and money. Even if you uplift a quick expiry patch for a mis-measured collection, the nature of Firefox releases is such that you would still end up paying nearly all of the same $245.67 for a useless collection as you would for a correct one. Spend the time ahead of time to save the expense. Especially for permanent collections.

3) Even small improvements in documentation, process, and tooling will result in large savings. Half of this cost is labour, and lesson #2 is recommending you spend more time on it. Good documentation enables good decisions to be made confidently. Process protects you from collecting the wrong thing. Tooling catches mistakes before they make their way out into the wild. Even small things like consistent naming and language will save time and protect you from mistakes. These are your force multipliers.

4) To reduce costs, efficient data representations matter, and quickly-expiring data collections matter more.

5) Retention periods should be set as short as possible. You shouldn’t have to store Live Bookmarks counts from 2+ years ago.
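The yearly figures in lesson 1 aren't derived in the text, but one way to reproduce them is to rerun the pipeline, storage, and deletion formulas with 12 months of collection instead of 14 “months”. The sketch below does that; treat the derivation as an assumption about how the numbers were reached, and the variable names as made up for illustration.

```python
GB_PER_TB = 1000
GB_PER_MONTH = 12
MONTHS_PER_YEAR = 12
RETENTION_MONTHS = 25
PIPELINE_USD_PER_TB = 90
STORAGE_USD_PER_GB_MONTH = 0.02
SCAN_USD_PER_TB = 5
LABOUR_USD = 125             # ideation, instrumentation, review, analysis
EXPIRY_PATCH_USD = 25        # half an hour to expire the collection

gb_per_year = GB_PER_MONTH * MONTHS_PER_YEAR
pipeline = gb_per_year / GB_PER_TB * PIPELINE_USD_PER_TB
storage = gb_per_year * STORAGE_USD_PER_GB_MONTH * RETENTION_MONTHS
deletion = gb_per_year * RETENTION_MONTHS / GB_PER_TB * SCAN_USD_PER_TB

yearly = pipeline + storage + deletion
first_year = yearly + LABOUR_USD
payback_months = EXPIRY_PATCH_USD / (yearly / MONTHS_PER_YEAR)

print(f"every year:     ${yearly:.2f}")
print(f"first year:     ${first_year:.2f}")
print(f"expiry payback: {payback_months:.1f} months")
```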

Where Does Glean Fit In

Glean’s focus on high-level metric types, end-to-end-testable data collections, and consistent naming makes mistakes in instrumentation easier to find during development. Rather than waiting for instrumentation code to reach release before realizing it isn’t correct, Glean is designed to help you catch those errors earlier.

Also, Glean’s use of per-application identifiers and emphasis on custom pings allows for data segregation, which in turn allows different retention periods per-application or per-feature and reduces the data scanned per analysis. For example, the “metrics” ping might not need to be retained for 25 months even if the “baseline” ping does, and Firefox Desktop’s retention periods could be configured to be of a different length than Firefox Lockwise’s. And a consistent ping format and continued involvement of Data Science through design and development reduce analyst labour costs.

Basically the only thing we didn’t address was efficient data transfer encodings, and since Glean controls its ping format as an internal detail (unlike Telemetry) we could decide to address that later on without troubling Product Developers or Data Science.

There’s no doubt more we could do (and if you come up with something, do let us know!), but already I’m confident Glean will be worth its weight in Canadian Dollars.

:chutten

(( Special thanks to :jason and :mreid for helping me nail down costs for the pipeline pieces, and to the broader audience of Data Engineers, Data Scientists, Telemetry Engineers, and other folks who reviewed the draft. ))

(( This is a syndicated copy of the original post. ))