(“This Week in Glean” is a series of blog posts that the Glean Team at Mozilla uses to communicate better about our work. They could be release notes, documentation, hopes, dreams, or whatever: so long as Glean inspires it. You can find an index of all TWiG posts online.)

Data ingestion is a process that involves decompressing, validating, and transforming millions of documents every hour. The schemas of data coming into our systems are ever-evolving, sometimes causing partial outages of data availability when the conditions are ripe. Once the outage has been resolved, we run a backfill to fill in the gaps for all the missing data. In this post, I’ll walk through our error discovery and recovery processes using a recent bug as an example.

Catching and fixing the error

Every Monday, a group of data engineers pores over a set of dashboards and plots indicating data ingestion health. On 2020-08-04, we filed a bug after observing an elevated rate of schema validation errors coming from environment/system/gfx/adapters/N/GPUActive. For errors like these, which make up a small fraction of our overall volume, partial outages are typically not urgent (as in not “we need to drop everything right now and resolve this stat!” critical). We consulted subject-matter experts and found that the code responsible for reporting multiple GPUs in the environment had changed.

A few weeks after we filed the bug about GPUActive, an intern reached out to me about a DNS study that was running. I helped figure out that his external monitor setup with his MacBook was causing rejections like the ones we had seen weeks before. One PR and one deploy later, I watched the error rates for the GPUActive field abruptly drop to zero.

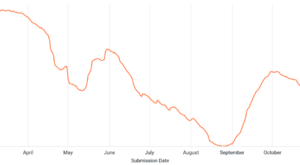

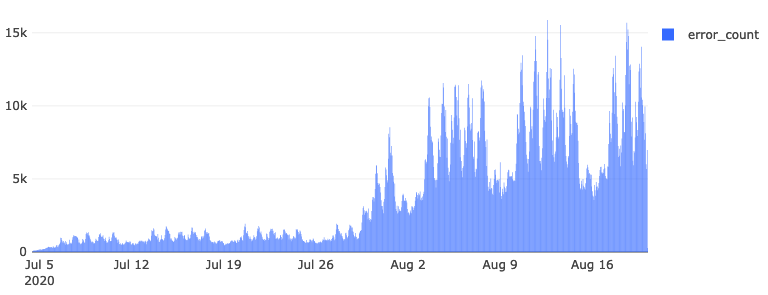

Figure: Error counts for environment/system/gfx/adapters/N/GPUActive

The schema’s misspecification caused 4.1 million documents between 2020-07-04 and 2020-08-20 to be sent to our error stream, where they awaited reprocessing.

Running a backfill

In January of 2021, we ran the backfill of the GPUActive rejects. First, we determined the backfill range by querying the relevant error table:

```sql
SELECT
  DATE(submission_timestamp) AS dt,
  COUNT(*)
FROM
  `moz-fx-data-shared-prod.payload_bytes_error.telemetry`
WHERE
  submission_timestamp < '2020-08-21'
  AND submission_timestamp > '2020-07-03'
  AND exception_class = 'org.everit.json.schema.ValidationException'
  AND error_message LIKE '%GPUActive%'
GROUP BY 1
ORDER BY 1
```

The query helped verify the date range of the errors and their counts: 2020-07-04 through 2020-08-20. The following tables were affected:

```
crash
dnssec-study-v1
event
first-shutdown
heartbeat
main
modules
new-profile
update
voice
```
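Before settling on a range, it can help to confirm that the per-day counts returned by the query above contain no unexpected gaps. The sketch below assumes the results have been fetched as `(date, count)` pairs. The helper name `summarize_error_range` and the sample rows are hypothetical and are not the incident's real numbers. The helper reports the first and last day, the total count, and any days missing in between.

```python
from datetime import date, timedelta


def summarize_error_range(rows):
    """Summarize (day, count) rows such as those returned by the daily error query.

    `rows` is assumed to be an iterable of (datetime.date, int) pairs, one per day.
    Returns (first_day, last_day, total_count, missing_days).
    """
    counts = dict(rows)
    if not counts:
        raise ValueError("no error rows to summarize")
    first, last = min(counts), max(counts)
    total = sum(counts.values())
    # Days between the first and last error that have no row at all; a gap
    # could indicate several separate ranges rather than one contiguous outage.
    span = (last - first).days + 1
    missing = [
        first + timedelta(days=i)
        for i in range(span)
        if first + timedelta(days=i) not in counts
    ]
    return first, last, total, missing


# Hypothetical sample rows, not the real counts from this incident.
sample = [
    (date(2020, 7, 4), 120),
    (date(2020, 7, 5), 98),
    (date(2020, 7, 7), 105),
]
first, last, total, missing = summarize_error_range(sample)
print(first, last, total, missing)
```

With real query output, an empty `missing` list supports treating the range as a single contiguous backfill window.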

We isolated the error documents into a backfill project named moz-fx-data-backfill-7 and mirrored our production BigQuery datasets and tables into it.

```sql
SELECT
  *
FROM
  `moz-fx-data-shared-prod.payload_bytes_error.telemetry`
WHERE
  DATE(submission_timestamp) BETWEEN "2020-07-04" AND "2020-08-20"
  AND exception_class = 'org.everit.json.schema.ValidationException'
  AND error_message LIKE '%GPUActive%'
```

Then we ran a suitable Dataflow job to populate our tables using the same ingestion code as the production jobs. It took about 31 minutes to run to completion. We copied and deduplicated the data into a dataset that mirrored our production environment.

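```bash
# Point gcloud at the backfill project.
gcloud config set project moz-fx-data-backfill-7
# Space-separated list of dates from 2020-07-04 through 2020-08-20 (the end date 2020-08-21 is exclusive).
dates=$(python3 -c 'from datetime import datetime as dt, timedelta; start=dt.fromisoformat("2020-07-04"); end=dt.fromisoformat("2020-08-21"); days=(end-start).days; print(" ".join([(start + timedelta(i)).isoformat()[:10] for i in range(days)]))')
# Copy and deduplicate each date's data within the backfill project.
./script/copy_deduplicate --project-id moz-fx-data-backfill-7 --dates $(echo $dates)
```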
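The inline Python one-liner above builds every date in the half-open range [2020-07-04, 2020-08-21). The sketch below is a more readable equivalent, paired with an in-memory illustration of deduplication. The dedup rule it uses is an assumption for illustration, not a description of how copy_deduplicate is actually implemented: keep one row per `document_id`, preferring the earliest `submission_timestamp`. Both `backfill_dates` and `deduplicate` are hypothetical names.

```python
from datetime import date, timedelta


def backfill_dates(start: date, end_exclusive: date) -> list[str]:
    """Return ISO dates from start up to, but not including, end_exclusive."""
    days = (end_exclusive - start).days
    return [(start + timedelta(days=i)).isoformat() for i in range(days)]


def deduplicate(rows: list[dict]) -> list[dict]:
    """Keep one row per document_id, preferring the earliest submission.

    Assumes each row has 'document_id' and 'submission_timestamp' keys whose
    values compare correctly (e.g. ISO 8601 strings in the same timezone).
    """
    earliest = {}
    for row in rows:
        key = row["document_id"]
        current = earliest.get(key)
        if current is None or row["submission_timestamp"] < current["submission_timestamp"]:
            earliest[key] = row
    return list(earliest.values())


dates = backfill_dates(date(2020, 7, 4), date(2020, 8, 21))
print(len(dates), dates[0], dates[-1])  # 48 2020-07-04 2020-08-20

# Hypothetical rows: document "a" was submitted twice.
rows = [
    {"document_id": "a", "submission_timestamp": "2020-07-04T10:00:00"},
    {"document_id": "a", "submission_timestamp": "2020-07-04T09:00:00"},
    {"document_id": "b", "submission_timestamp": "2020-07-04T11:00:00"},
]
print(deduplicate(rows))
```

Treating the end date as exclusive mirrors the one-liner, which is why 2020-08-21 yields a last date of 2020-08-20.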

This script took hours because it iterated over all tables for each of the ~50 days, regardless of whether they contained data. Future backfills should probably remove empty tables before kicking off this script.
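One way to act on that suggestion is to build the work list only from partitions that actually hold rows. The sketch below assumes per-table, per-date row counts are already available, for example from a metadata source such as BigQuery's INFORMATION_SCHEMA.PARTITIONS view. It only does the filtering. The helper `build_work_list` and the sample counts are hypothetical, and how such a list would be passed to copy_deduplicate is left open.

```python
from collections import defaultdict


def build_work_list(partitions):
    """Map each date to the tables that have rows on that date.

    `partitions` is an iterable of (table_id, date_string, row_count) tuples.
    Partitions with zero rows are skipped, so a copy/deduplicate loop driven by
    this mapping never visits empty tables or empty days.
    """
    work = defaultdict(list)
    for table_id, day, row_count in partitions:
        if row_count > 0:
            work[day].append(table_id)
    # Sort dates and table names so runs are reproducible.
    return {day: sorted(tables) for day, tables in sorted(work.items())}


# Hypothetical partition metadata; real counts would come from BigQuery.
partitions = [
    ("main_v4", "2020-07-04", 1500),
    ("voice_v4", "2020-07-04", 0),
    ("main_v4", "2020-07-05", 1320),
    ("crash_v4", "2020-07-05", 12),
    ("voice_v4", "2020-07-06", 0),
]
print(build_work_list(partitions))
# {'2020-07-04': ['main_v4'], '2020-07-05': ['crash_v4', 'main_v4']}
```

Days where every table is empty (2020-07-06 here) drop out entirely.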

Now that the tables were populated, we handled the data deletion requests received since the time of the initial error. A module named Shredder serves self-service deletion requests in BigQuery ETL; we ran it from the bigquery-etl root.

```bash
script/shredder_delete \
  --billing-projects moz-fx-data-backfill-7 \
  --source-project moz-fx-data-shared-prod \
  --target-project moz-fx-data-backfill-7 \
  --start_date 2020-06-01 \
  --only 'telemetry_stable.*' \
  --dry_run
```

This removed relevant rows from our final tables.

```
INFO:root:Scanned 515495784 bytes and deleted 1280 rows from moz-fx-data-backfill-7.telemetry_stable.crash_v4
INFO:root:Scanned 35301644397 bytes and deleted 45159 rows from moz-fx-data-backfill-7.telemetry_stable.event_v4
INFO:root:Scanned 1059770786 bytes and deleted 169 rows from moz-fx-data-backfill-7.telemetry_stable.first_shutdown_v4
INFO:root:Scanned 286322673 bytes and deleted 2 rows from moz-fx-data-backfill-7.telemetry_stable.heartbeat_v4
INFO:root:Scanned 134028021311 bytes and deleted 13872 rows from moz-fx-data-backfill-7.telemetry_stable.main_v4
INFO:root:Scanned 2795691020 bytes and deleted 1071 rows from moz-fx-data-backfill-7.telemetry_stable.modules_v4
INFO:root:Scanned 302643221 bytes and deleted 163 rows from moz-fx-data-backfill-7.telemetry_stable.new_profile_v4
INFO:root:Scanned 1245911143 bytes and deleted 6477 rows from moz-fx-data-backfill-7.telemetry_stable.update_v4
INFO:root:Scanned 286924248 bytes and deleted 10 rows from moz-fx-data-backfill-7.telemetry_stable.voice_v4
INFO:root:Scanned 175822424583 and deleted 68203 rows in total
```
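Because these log lines are plain text, the per-table numbers can be cross-checked against the summary line mechanically. The sketch below parses the lines shown above and confirms that the scanned bytes and deleted rows add up to the reported totals. For this run they do: 175822424583 bytes and 68203 rows. The regular expressions were written against these sample lines only; treating them as Shredder's general log format is an assumption. `check_shredder_totals` is a hypothetical helper.

```python
import re

# Per-table line, e.g. "INFO:root:Scanned 515495784 bytes and deleted 1280 rows from <table>".
TABLE_LINE = re.compile(r"Scanned (\d+) bytes and deleted (\d+) rows from (\S+)")
# Summary line; in this log it omits the word "bytes", so it is optional here.
TOTAL_LINE = re.compile(r"Scanned (\d+)(?: bytes)? and deleted (\d+) rows in total")


def check_shredder_totals(log_text: str) -> dict:
    """Sum the per-table lines and compare them with the summary line."""
    per_table = {}
    reported = None
    for line in log_text.splitlines():
        total = TOTAL_LINE.search(line)
        if total:
            reported = (int(total.group(1)), int(total.group(2)))
            continue
        table = TABLE_LINE.search(line)
        if table:
            per_table[table.group(3)] = (int(table.group(1)), int(table.group(2)))
    summed = (
        sum(scanned for scanned, _ in per_table.values()),
        sum(deleted for _, deleted in per_table.values()),
    )
    return {
        "tables": len(per_table),
        "summed_bytes": summed[0],
        "summed_rows": summed[1],
        "consistent": reported == summed,
    }


LOG = """\
INFO:root:Scanned 515495784 bytes and deleted 1280 rows from moz-fx-data-backfill-7.telemetry_stable.crash_v4
INFO:root:Scanned 35301644397 bytes and deleted 45159 rows from moz-fx-data-backfill-7.telemetry_stable.event_v4
INFO:root:Scanned 1059770786 bytes and deleted 169 rows from moz-fx-data-backfill-7.telemetry_stable.first_shutdown_v4
INFO:root:Scanned 286322673 bytes and deleted 2 rows from moz-fx-data-backfill-7.telemetry_stable.heartbeat_v4
INFO:root:Scanned 134028021311 bytes and deleted 13872 rows from moz-fx-data-backfill-7.telemetry_stable.main_v4
INFO:root:Scanned 2795691020 bytes and deleted 1071 rows from moz-fx-data-backfill-7.telemetry_stable.modules_v4
INFO:root:Scanned 302643221 bytes and deleted 163 rows from moz-fx-data-backfill-7.telemetry_stable.new_profile_v4
INFO:root:Scanned 1245911143 bytes and deleted 6477 rows from moz-fx-data-backfill-7.telemetry_stable.update_v4
INFO:root:Scanned 286924248 bytes and deleted 10 rows from moz-fx-data-backfill-7.telemetry_stable.voice_v4
INFO:root:Scanned 175822424583 and deleted 68203 rows in total
"""

print(check_shredder_totals(LOG))
# {'tables': 9, 'summed_bytes': 175822424583, 'summed_rows': 68203, 'consistent': True}
```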

Once this was done, we appended each of these tables to the corresponding production tables. Appends require superuser permissions, so another engineer finalized the deed. Afterward, we deleted the rows in the production error table that corresponded to the pings backfilled in the backfill-7 project.

```sql
DELETE
FROM
  `moz-fx-data-shared-prod.payload_bytes_error.telemetry`
WHERE
  DATE(submission_timestamp) BETWEEN "2020-07-04" AND "2020-08-20"
  AND exception_class = 'org.everit.json.schema.ValidationException'
  AND error_message LIKE '%GPUActive%'
```
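The isolation query earlier and this DELETE share the same WHERE clause. If the two ever drifted apart, the cleanup would either leave rejects behind or remove rows that were never backfilled. One way to keep them in sync is to render both statements from a single predicate, as in the hypothetical Python helpers below (`error_predicate` and `statements` are illustrative names). Values are interpolated as plain strings, which is only reasonable for hard-coded, trusted inputs like these. For anything else, BigQuery query parameters would be the safer choice.

```python
from datetime import date

ERROR_TABLE = "moz-fx-data-shared-prod.payload_bytes_error.telemetry"


def error_predicate(start: date, end: date, field: str) -> str:
    """WHERE clause selecting schema-validation rejects that mention `field`."""
    return (
        f'DATE(submission_timestamp) BETWEEN "{start.isoformat()}" AND "{end.isoformat()}"\n'
        "  AND exception_class = 'org.everit.json.schema.ValidationException'\n"
        f"  AND error_message LIKE '%{field}%'"
    )


def statements(start: date, end: date, field: str) -> tuple[str, str]:
    """Render the isolation SELECT and cleanup DELETE from one shared predicate."""
    where = error_predicate(start, end, field)
    select_stmt = f"SELECT *\nFROM `{ERROR_TABLE}`\nWHERE {where}"
    delete_stmt = f"DELETE\nFROM `{ERROR_TABLE}`\nWHERE {where}"
    return select_stmt, delete_stmt


select_stmt, delete_stmt = statements(date(2020, 7, 4), date(2020, 8, 20), "GPUActive")
print(select_stmt)
print()
print(delete_stmt)
```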

Finally, we appended the new errors generated by the backfill process to the production error table.

```bash
bq cp --append_table moz-fx-data-backfill-7:payload_bytes_error.telemetry moz-fx-data-shared-prod:payload_bytes_error.telemetry
```
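The stable-table appends described earlier could take the same `bq cp --append_table` form. The sketch below only prints one such command per table that appears in the Shredder log above. It assumes the backfill project mirrors production dataset and table names, as described earlier. The `append_commands` helper is hypothetical, and the commands the other engineer actually ran are not recorded here. Running them would still require the elevated permissions mentioned above.

```python
import shlex

BACKFILL_PROJECT = "moz-fx-data-backfill-7"
PROD_PROJECT = "moz-fx-data-shared-prod"

# Tables listed in the Shredder log above (assumed to share names with production).
STABLE_TABLES = [
    "crash_v4", "event_v4", "first_shutdown_v4", "heartbeat_v4", "main_v4",
    "modules_v4", "new_profile_v4", "update_v4", "voice_v4",
]


def append_commands(dataset: str, tables: list[str]) -> list[str]:
    """Build `bq cp --append_table SRC DST` commands from backfill to production."""
    commands = []
    for table in tables:
        src = f"{BACKFILL_PROJECT}:{dataset}.{table}"
        dst = f"{PROD_PROJECT}:{dataset}.{table}"
        # shlex.join quotes arguments only if they contain shell-special characters.
        commands.append(shlex.join(["bq", "cp", "--append_table", src, dst]))
    return commands


for cmd in append_commands("telemetry_stable", STABLE_TABLES):
    print(cmd)
```

Printing the commands rather than executing them leaves a reviewable record for whoever holds the append permissions.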

Now those rejected pings are available for analysis down the line. For the unadulterated backfill logs, see this PR to bigquery-backfill.

Conclusions

No system is perfect, but the processes we have in place allow us to systematically understand the surface area of issues and address failures. Our health check meeting improves our situational awareness of changes upstream in applications like Firefox, while our backfill logs in bigquery-backfill allow us to practice dealing with the complexities of recovering from partial outages. These underlying processes and systems are the same ones that facilitate the broader Glean ecosystem at Mozilla and will continue to exist as long as the data flows.