While it’s seemingly been quiet on the website optimization front, we’ve been very busy behind the scenes. Over the last few months, Laura Mesa has coordinated the design of 5 A/B tests that we’re now in the process of implementing (3 on the First Run page and 2 on the IE download page).

Additionally, we’ve expanded the scope of our testing efforts. Look for tests to go live on support.mozilla.com and addons.mozilla.org this week!

In the remainder of today’s post, I’ll discuss a few experiment results and share our website optimization plans.

Experiment Results

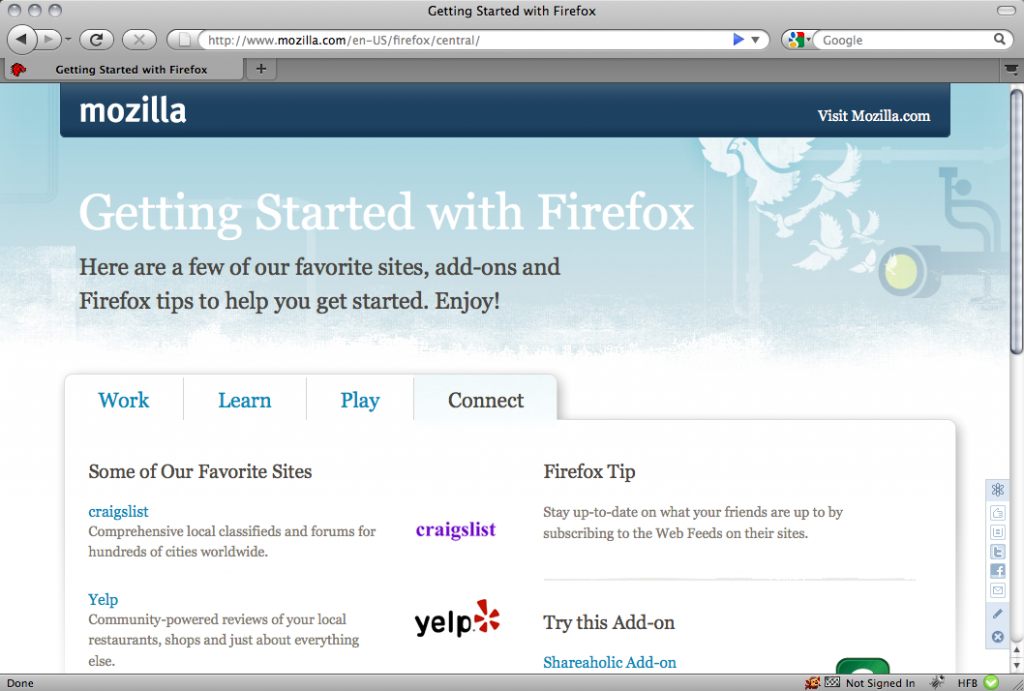

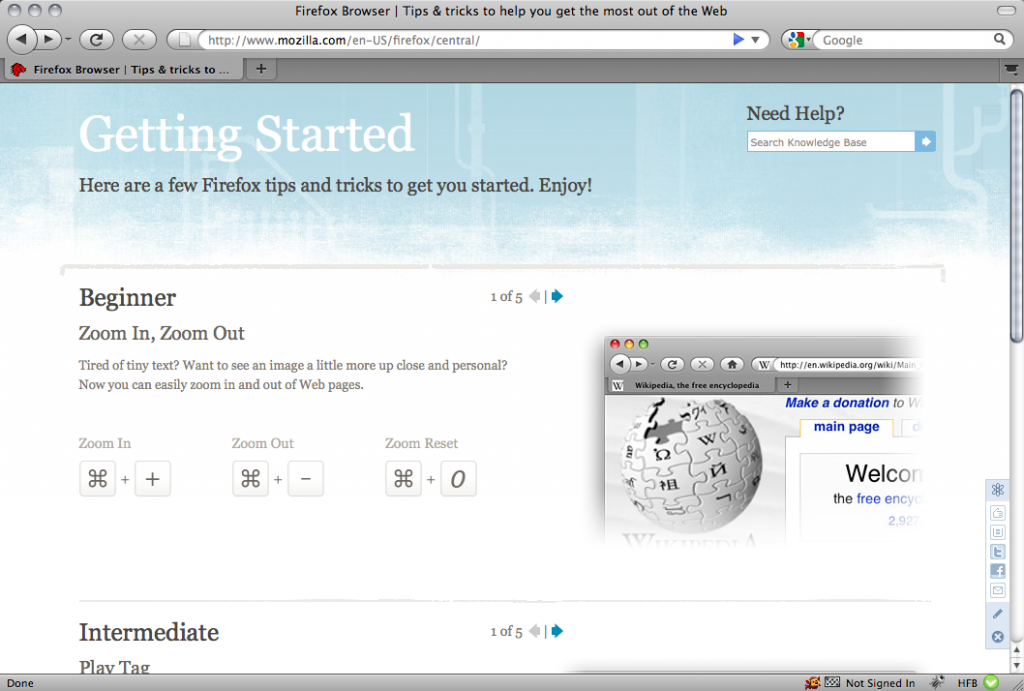

Getting Started

In this test, the Marketing team wanted to determine how adding Firefox tips and tricks to the Getting Started page would affect user behavior.

Unfortunately, after running an A/B test, we didn’t see any improvement in visits per user, pageviews per visit, average time per visit, or bounce rate. For the most part, the differences were minor. The only statistically significant change (at the 99% confidence level) was a decrease in visits per user.

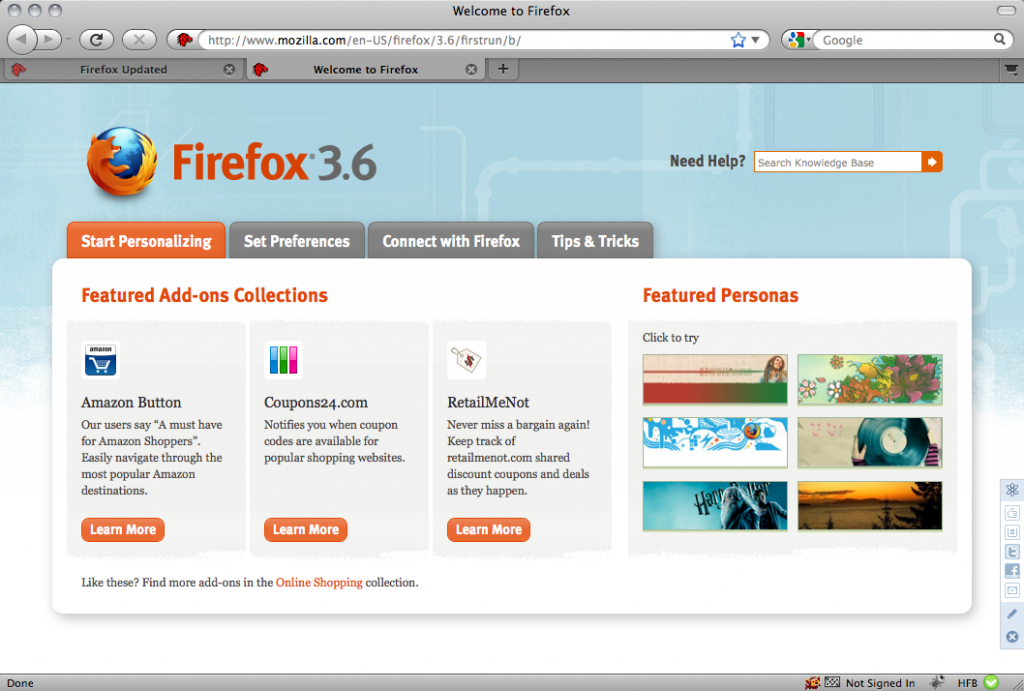

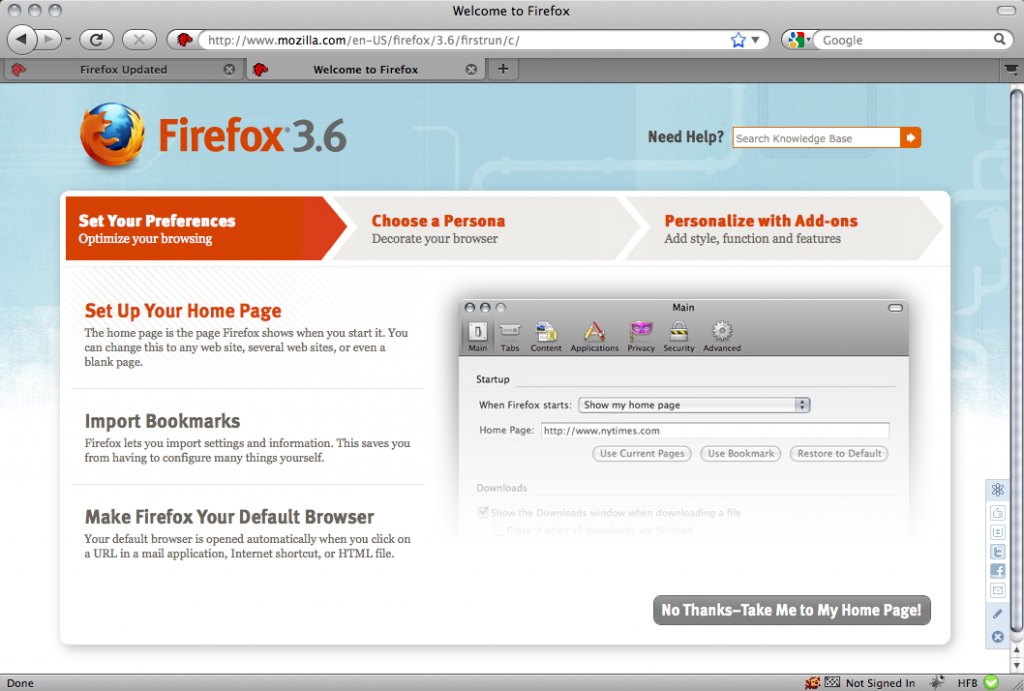

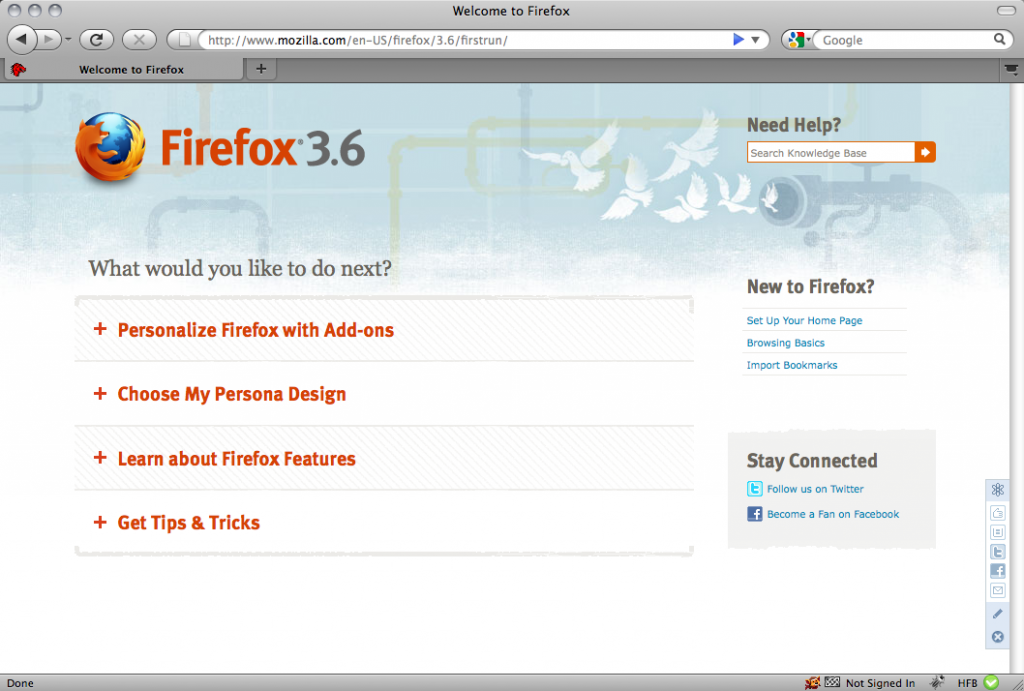

First Run Design #1

In the first of 3 A/B tests on the First Run page (bottom image), 6.3% more users interacted with the page and total interactions increased 82.4%. The early results are encouraging, but we need to run the test longer to achieve statistical significance.

Note that the design with more interactions per user isn’t necessarily better. Our final analysis will focus on specific outcomes (i.e. Personas installed and clicks on the “stay connected” buttons).
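To make the significance caveat concrete, here is a minimal sketch of a two-proportion z-test, one common way to check whether a difference in the share of users who interact with a page could be due to chance. The function name and the visitor counts are hypothetical; they are chosen only so that the variation shows roughly a 6.3% relative lift, and they are not the real experiment data or necessarily the method our testing tool uses.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two proportions."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled proportion under the null hypothesis that both rates are equal.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution,
    # computed with the complementary error function.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts (assumption): 1,000 users per design,
# 300 interacting on the control and 319 on the variation.
z, p_value = two_proportion_z_test(300, 1000, 319, 1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

With these made-up counts the p-value comes out well above 0.05, which is the kind of situation where a lift looks promising but the test needs more traffic before we can call it.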

StumbleUpon Promotion

For this test, we hypothesized that promoting a specific Add-on would be more effective than promoting Add-ons generally. Currently, both the experimental variation and the control promotion have a 0.7% click-through rate.
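With a baseline click-through rate this low, small differences take a lot of traffic to detect. The sketch below uses the standard sample-size approximation for comparing two proportions. Only the 0.7% baseline comes from this post; the 20% relative lift, the 5% significance level, the 80% power, and the function name are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate impressions needed per variation to detect a relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    # Critical values of the standard normal distribution.
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# 0.7% baseline from the post; a 20% relative lift is an assumed target.
needed = sample_size_per_variation(0.007, 0.20)
print(f"Impressions needed per variation: {needed}")
```

Under these assumptions the answer lands in the tens of thousands of impressions per variation, which is why a promotion test like this one can take a while to settle.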

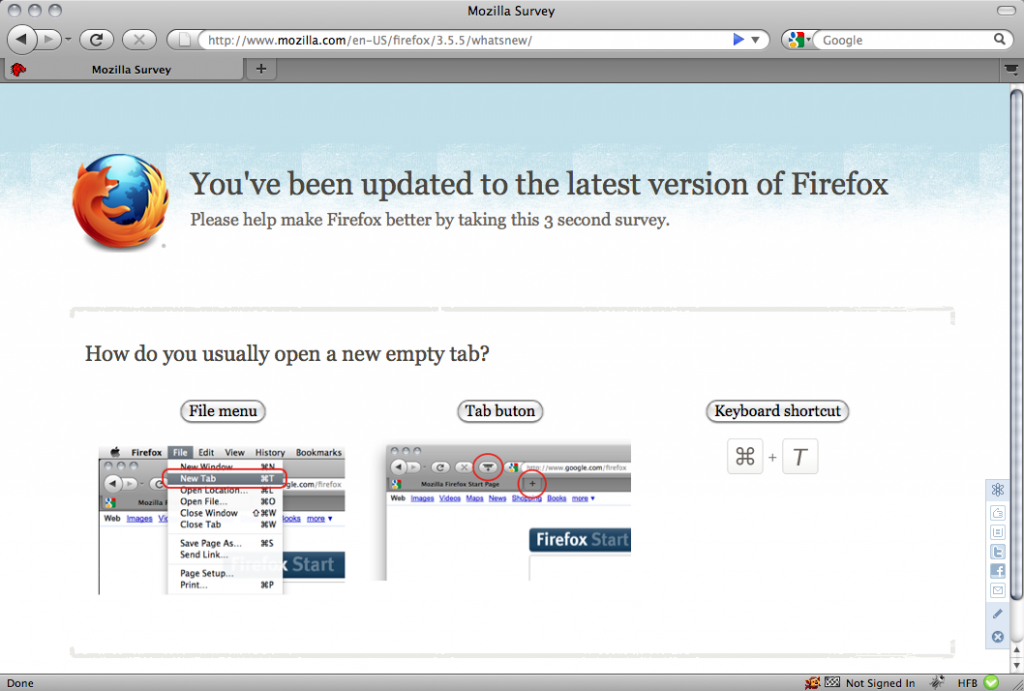

Surveys

In addition to running tests, we used our (highly recommended) website optimization tool to run two simple surveys. With the first, we learned that 47.8% of users open new empty tabs from the new tab button, 30.4% open from the file menu, and 21.8% open from a keyboard shortcut.

With the second, we learned that 46.9% of First Run visitors are first-time users, 8.5% have used Firefox for under 1 year, and 44.6% have used Firefox for over 1 year. The response rate for both surveys exceeded 15%.

Have an idea for a survey you’d like to run on mozilla.com? Let us know in the comments!

Upcoming Tests

Two Additional First Run Designs

We will launch 2 additional A/B tests on the First Run page. Both use tab-oriented designs.

IE Download Page

We will test at least 4 new designs for the IE download page. We are still in the design phase, but expect to push the first experiments live next week.

IE Multivariate Test

In addition to testing entirely new designs, we want to understand which current design elements are most effective. Accordingly, we will run a multivariate test, switching in and out 4 page elements. Look for a blog post discussing the results soon.
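As a rough illustration of what switching 4 page elements in and out means, the sketch below enumerates every on/off combination (a full factorial design, 2^4 = 16 variations) and assigns each visitor to one of them deterministically. The element names, the visitor ID format, and the hashing scheme are all hypothetical; the actual test may use a different set of combinations or a different assignment method.

```python
import hashlib
from itertools import product

# Hypothetical element names (assumption); the post does not list the real ones.
ELEMENTS = ["headline", "download_button", "feature_list", "screenshot"]


def all_variations(elements):
    """Return every on/off combination of the given page elements."""
    return [
        dict(zip(elements, flags))
        for flags in product([False, True], repeat=len(elements))
    ]


def assign_variation(visitor_id, variations):
    """Map a visitor ID to one variation, stable across repeat visits."""
    digest = hashlib.sha256(visitor_id.encode("utf-8")).hexdigest()
    return variations[int(digest, 16) % len(variations)]


VARIATIONS = all_variations(ELEMENTS)
print(len(VARIATIONS), "variations")
print(assign_variation("visitor-42", VARIATIONS))
```

Hashing the visitor ID, rather than picking at random on each request, keeps a returning visitor on the same page variation for the length of the test.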

AMO Landing Page

In our first addons.mozilla.org test, we will measure how the featured Add-ons promotion affects bounce rate and Add-ons installed per visit.

SUMO Landing Page

The SUMO team wants to know whether we should reword article titles as questions. Our first SUMO test will do just that.

In addition to running these tests, we plan to test the download confirmation page and run 3 A/B tests on the Update page.

Many thanks go out to Laura, John Slater, Steven Garrity, Royal Order, and everyone else involved in this process! Next up, I’ll suggest a streamlined process for proposing and running tests.
