mozilla-inbound is currently approval-only due to issues with Windows PGO builds. The short explanation is that we turn on aggressive code optimization for our Windows builds, and this aggressive optimization causes the linker that comes with Visual Studio to run out of virtual memory. The current situation is especially problematic because we can’t increase the amount of virtual memory the linker can access (unlike last time, when we “just” moved the builds to 64-bit machines).

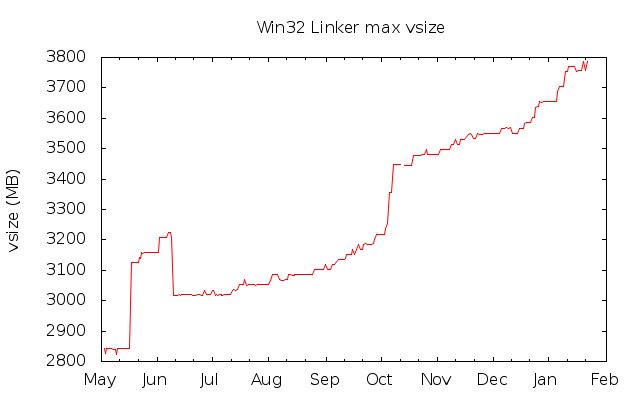

We don’t really have a good handle on what causes these issues (other than the obvious “more code”), but at least we are tracking the linker’s vsize and we’ll soon have pretty pictures of the same. We hadn’t expected to have to deal with this problem for several more months. The graph below helps explain why we’re hitting this problem a little sooner than before. The data for this graph was taken from the Windows nightly build logs.

Notice the massive spike in October, as well as the ~100MB worth of growth in early January. While the data is not especially fine-grained (nightly builds can include tens of changesets, and we’d really like information on the vsize growth on a per-changeset basis), looking at the biggest increases over the last ten months might prove helpful. There have been ~300 nightly builds since we started recording data; below is a list of the top 20 daily increases in linker max vsize. The date in the table is the date the nightly build was done; the newly included changeset range is linked to for your perusal.

| Nightly build date | Increase in linker max vsize (MB) |
|---|---|
| 2012-05-18 | 282.363281 |
| 2012-10-06 | 103.609375 |
| 2012-10-08 | 90.769531 |
| 2013-01-10 | 49.699219 |
| 2012-06-02 | 49.199219 |
| 2012-10-19 | 32.976562 |
| 2012-12-25 | 32.332031 |
| 2013-01-06 | 32.015625 |
| 2013-01-20 | 30.144531 |
| 2013-01-22 | 27.222656 |
| 2012-10-04 | 19.273438 |
| 2012-05-10 | 18.234375 |
| 2012-11-23 | 17.937500 |
| 2012-08-03 | 17.738281 |
| 2013-01-07 | 17.671875 |
| 2012-09-08 | 17.386719 |
| 2012-12-23 | 17.269531 |
| 2012-12-27 | 17.156250 |
| 2012-11-11 | 17.085938 |
| 2012-12-06 | 17.003906 |
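Producing a ranking like this comes down to sorting build-over-build differences. What follows is a minimal Python sketch, not the script actually used: it assumes the linker's max vsize for each nightly has already been pulled out of the build logs into (date, MB) pairs (log parsing is not shown), and `top_daily_increases` is a hypothetical name. Each difference is credited to the later nightly, matching the convention that a row's date is the build that newly included the changesets.

```python
from typing import List, Tuple


def top_daily_increases(
    samples: List[Tuple[str, float]], n: int = 20
) -> List[Tuple[str, float]]:
    """Return the n largest build-over-build increases in linker max vsize.

    samples holds one (build_date, max_vsize_mb) pair per nightly, with dates
    as ISO-8601 strings ("YYYY-MM-DD") so string order is chronological.
    """
    ordered = sorted(samples)  # chronological order by date string
    # Credit each difference to the later nightly, which pulled in the changes.
    deltas = [
        (date, vsize - prev_vsize)
        for (_, prev_vsize), (date, vsize) in zip(ordered, ordered[1:])
    ]
    increases = [d for d in deltas if d[1] > 0]  # drops are ignored here
    increases.sort(key=lambda d: d[1], reverse=True)  # largest growth first
    return increases[:n]


if __name__ == "__main__":
    # Hypothetical toy values, not the real nightly data.
    samples = [
        ("2012-05-17", 1000.0),
        ("2012-05-18", 1250.0),
        ("2012-05-19", 1240.0),
        ("2012-05-20", 1260.0),
    ]
    print(top_daily_increases(samples, n=2))
    # [('2012-05-18', 250.0), ('2012-05-20', 20.0)]
```

Changing the filter to `d[1] < 0` (and sorting ascending) would surface drops instead of growth.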

Mike Hommey suggested that trying to divine the whys and hows of extra memory usage would be a fruitless endeavor. Looking at the above pushlogs, I am inclined to agree with him. There’s nothing in any of them that jumps out. I didn’t try clicking through to individual changesets to figure out what might have added large chunks of code, though.

What’s interesting is the drop in June.

The drop in June corresponds to http://hg.mozilla.org/mozilla-central/pushloghtml?fromchange=95d1bb200f4e15f340abb59a883713a2edc55861&tochange=dc410944aabcab8ff2825d7e8d3f3391e3fe5876

That is not very helpful, though, because nothing in it jumps out as shouting “I made vsize go down by 200MB!”

I wouldn’t be surprised if http://hg.mozilla.org/mozilla-central/rev/ae0b2ba1e47e participates greatly in the 2012-05-18 increase.

What’s the rationale for not splitting the catch-all xul.dll into multiple libraries (since, as I understand it, linking xul.dll is what causes the linker to run out of address space)?

It’s faster to have as much stuff as you can packed into a single library; inter-library calls are relatively more expensive than intra-library calls. I think there might also be some disk I/O wins from having all the code in a single file (don’t have to seek around the disk to get to multiple files at startup).

We have done exactly that in the past to get the linker’s memory requirements down to something manageable. We can now turn on aggressive optimizations on a per-directory basis in the source tree, which is somewhat easier than moving things out of libxul. There may come another point where we have to move code out of libxul again, though.

> There’s nothing in any of them that jumps out.

Wouldn’t this be something for a bisect?

You could bisect it, sure. It’d take a couple of days to do so, though.
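A bisection needs only about log2(n) builds for a nightly that pulled in n changesets, so even a range of tens of changesets comes down to a handful of probes; the slow part is presumably that every probe is a full Windows PGO build. Below is a minimal Python sketch of the search. It assumes the changesets are listed in push order, the previous nightly's changeset comes first and is known-good, the last one is known-bad, and vsize stays above the threshold once it has crossed it. `measure_vsize` is a hypothetical callback standing in for "do a PGO build of this changeset and report the linker's max vsize".

```python
from typing import Callable, Sequence, Tuple


def first_bad_changeset(
    changesets: Sequence[str],
    measure_vsize: Callable[[str], float],
    threshold_mb: float,
) -> Tuple[str, int]:
    """Find the first changeset whose linker max vsize exceeds that of
    changesets[0] by more than threshold_mb.

    Assumes at least two changesets, changesets[0] good, changesets[-1] bad,
    and that vsize stays above the threshold once it has crossed it.
    Returns the first bad changeset and the number of builds performed.
    """
    baseline = measure_vsize(changesets[0])  # one build for the baseline
    builds = 1
    # The last changeset is not rebuilt: the nightly already showed it is bad.
    lo, hi = 0, len(changesets) - 1  # invariant: lo is good, hi is bad
    while hi - lo > 1:
        mid = (lo + hi) // 2
        builds += 1
        if measure_vsize(changesets[mid]) - baseline > threshold_mb:
            hi = mid  # the jump happened at or before mid
        else:
            lo = mid  # the jump happened after mid
    return changesets[hi], builds


if __name__ == "__main__":
    # Simulated run: 33 hypothetical changesets with a 100MB jump at cs20.
    fake = [f"cs{i}" for i in range(33)]

    def simulated_vsize(cs: str) -> float:
        return 1000.0 if int(cs[2:]) < 20 else 1100.0

    print(first_bad_changeset(fake, simulated_vsize, threshold_mb=50.0))
    # ('cs20', 6)
```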

The MozillaZine threads for these days are:

- http://forums.mozillazine.org/viewtopic.php?f=23&t=2564537 (10-05)
- http://forums.mozillazine.org/viewtopic.php?f=23&t=2565929 (10-06)
- http://forums.mozillazine.org/viewtopic.php?f=23&t=2566595 (10-07)
- http://forums.mozillazine.org/viewtopic.php?f=23&t=2567657 (10-08)

Maybe the bug titles listed there can help identify the ones that added a lot of code?

The early October jump was the remainder of WebRTC landing in m-c from alder: signaling in particular (>200K lines), plus mtransport, datachannels I think, and other pieces.

Is the absence of a WebRTC merge from the pushlog merely an instance of pushlog brokenness, then? I see the strip commits, which I remember from the WebRTC landing, but I don’t see anything associated with the landing itself.