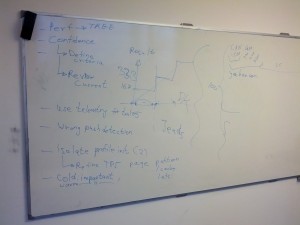

Inspired by Avi’s recent work on the Talos tscoll test, we had an extended discussion about things to address in Talos tests. I took a picture of the whiteboard for posterity:

Concerns driving our discussion:

- Lack of confidence in Talos. Whether through one too many dev.tree-management emails or inconsistent results on developer machines vs. Try, people don’t have confidence in Talos providing consistent, understandable results.

- Wrong pushes identified as the cause of performance regressions. We’ve covered this topic before. Some people had a hard time believing this happens. It shouldn’t, and we’d like to fix it.

Some ways to address those concerns:

- Cold cases are just as important as warm cases. On some tests, we might discount the numbers from the first few runs because they aren’t as smooth as the numbers from subsequent runs; we do this for our pageloader tests, for instance. The differences come from things being initialized lazily, caches of various kinds filling up, and so forth. But those “cold” cases are just as important as “warm” cases: In scenarios where the user restarts the web browser repeatedly throughout the day or memory pressure causes the browser to be killed on mobile devices, necessitating restarts when switching to it, the cold cases are the only ones that matter. We shouldn’t be discounting those numbers. Maybe the first N runs should constitute an entirely separate test: Tp5cold or similar. Then we can compare numbers across cold tests, which should provide more insight than comparing cold vs. warm tests.

- Criteria for performance tests. We have extensive documentation on the tests themselves. What is in short supply is documentation on what each test is measuring, how the test goes about measuring it, and why one should believe the measurement actually reflects that goal. As shown above, tscroll doesn’t measure the right things. We don’t know if tscroll is an isolated incident or symptomatic of a larger problem. We’d like to find out.

- In-tree performance tests. Whether recording frames during an animation and comparing them to some known quantity, measuring overdraws on the UI, or verifying telemetry numbers collected during a test, this would help alleviate difficulties with running the tests. Presumably, it would also make adding new, focused tests easier. And the tools for comparing performance tests would also exist in the tree so that comparing test results would be a snap.

- Better real-world testing. Tp5 was held up as a reasonable performance test. Tp5 iterates over “the top 100 pages of the internet”, loading each one 25 times and measuring page load times before moving on to the next one. Hurrah for testing the browser on real-world data rather than synthetic benchmarks. But is this necessarily the best way to conduct a test with real-world data? It doesn’t sound very reflective of real-world usage. What about loading 10 pages simultaneously, as one might do clicking through morning news links? Why load each page 25 times? Why not hold some pages open while browsing to other ones (though admittedly without network access this may not matter very much)? Tp5 is always run on a clean profile; clean profiles are not indicative of real-world performance (similarly for disk caches). And so on.
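To make the Tp5cold idea from the list above a little more concrete, here is a rough sketch of reporting the first N runs of each page as their own series instead of throwing them away. Everything in it is an assumption for illustration: the function names, the choice of the median as the summary, and the load times are all made up, and none of it reflects how Talos actually stores or processes results.

```python
from statistics import median


def split_cold_warm(samples, cold_runs):
    """Split one page's load times into (cold, warm) lists.

    `cold_runs` is the hypothetical N from the text: how many leading
    runs are treated as "cold".
    """
    if not 0 < cold_runs < len(samples):
        raise ValueError("cold_runs must leave at least one cold and one warm run")
    return samples[:cold_runs], samples[cold_runs:]


def summarize(page_samples, cold_runs=1):
    """Report cold and warm medians per page as two separate series."""
    report = {}
    for page, samples in page_samples.items():
        cold, warm = split_cold_warm(samples, cold_runs)
        report[page] = {"cold": median(cold), "warm": median(warm)}
    return report


# Made-up load times in milliseconds, in the order the runs happened.
page_samples = {
    "example.com": [480.0, 310.0, 305.0, 300.0, 302.0],
    "example.org": [920.0, 610.0, 600.0, 605.0, 598.0],
}

report = summarize(page_samples, cold_runs=1)
for page, result in report.items():
    print(page, result)
```

With the two series kept apart, a push would be judged cold against cold and warm against warm, rather than having the cold runs silently dropped or averaged into the warm ones.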

Any one of these things is a decent-sized project on its own. Avi and I are tackling the criteria for performance tests bit first by reviewing all the existing tests, trying to figure out what they’re measuring, and comparing that with what they purport to measure.

“Wrong pushes identified as the cause of performance regressions. We’ve covered this topic before. Some people had a hard time believing this happens. It shouldn’t, and we’d like to fix it.”

Just to clarify – the script that sends the emails to dev.tree-management isn’t actually part of Talos itself & is due for EOL soon, once Datazilla is rolled out.

And by rolled out, I mean has the last few bugs fixed.

What’s the replacement for that script, then?

AIUI, tp5 excludes all the external stuff like Google/Facebook/Twitter widgets and buttons, so it’s not as representative of those top 100 sites as you might think. It’s still definitely better than synthetic benchmarks, though.

Hi Nathan,

“Cold cases are just as important as warm cases.”

I think that’s not the best way to look at it. Cold startup is important to measure because actual users experience it and it’s a pain point. In the case of the tp tests, measuring the performance of a page load *after* it’s been loaded several times correlates with almost no real-world user situation. Basically it’s a test of browser performance in the somewhat rare “fully-cached” state. It’s not warm, it’s red-hot. It’ll still catch some regressions in code that doesn’t rely on caching to mitigate performance.

Still sounds okay, right? We have a test that catches regressions, that sounds good. However, there’s a big catch here. Due to the use of repeated page loads, the test relies on situations where cache hit rates are relatively high compared to normal usage. Anything that speeds up the uncached case but ever so slightly decreases the cache hit ratio will cause the tp numbers to increase. So what would have been a win for actual users can’t land because it causes the tp values to regress. Conversely, I can check in a change in low-level code for which the result is cached that will double page load time in some cases but it will have *no* impact on the tp numbers because the use of the cached result is all that’s measured by this test.

See bug 834609 for more details of the sort of problem to which I’m referring.
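To put some made-up numbers on the cache hit ratio trade-off described above, here is a small sketch using a deliberately simple model: expected load time is the hit ratio times the cached time plus the miss ratio times the uncached time. The model, the timings, and the hit ratios are all assumptions chosen for illustration, not measurements from tp or from the bug.

```python
def expected_load_ms(hit_ratio, cached_ms, uncached_ms):
    """Expected load time under a simple two-outcome cache model."""
    return hit_ratio * cached_ms + (1.0 - hit_ratio) * uncached_ms


# Hypothetical change: the uncached path gets 20% faster, but the
# cache hit ratio drops by two percentage points.
CACHED_MS = 100.0
UNCACHED_BEFORE_MS = 1000.0
UNCACHED_AFTER_MS = 800.0
HIT_RATIO_COST = 0.02


def compare(hit_ratio_before):
    """Return (before, after) expected load times for a given workload."""
    before = expected_load_ms(hit_ratio_before, CACHED_MS, UNCACHED_BEFORE_MS)
    after = expected_load_ms(
        hit_ratio_before - HIT_RATIO_COST, CACHED_MS, UNCACHED_AFTER_MS
    )
    return before, after


# A tp-style run with repeated loads: assume a very high hit ratio.
tp_before, tp_after = compare(0.95)
# Ordinary browsing: assume a much lower hit ratio.
real_before, real_after = compare(0.40)

print(f"tp-style:   {tp_before:.0f} ms -> {tp_after:.0f} ms")
print(f"real-world: {real_before:.0f} ms -> {real_after:.0f} ms")
```

Under these assumed numbers the tp-style figure gets slightly worse (about 145 ms to about 149 ms) while the lower-hit-ratio workload gets clearly better (about 640 ms to about 534 ms), which is the shape of the problem: the same change looks like a regression to the test and a win to the user.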