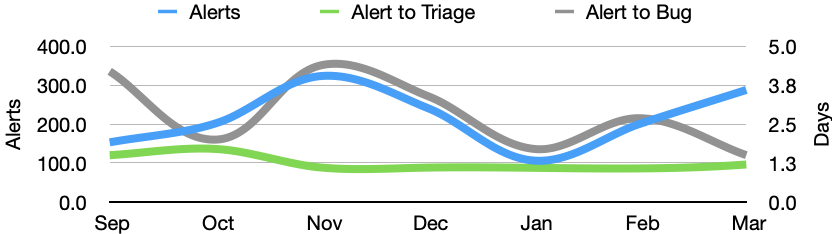

In March there were 288 alerts generated, resulting in 28 regression bugs being filed on average 4 days after the regressing change landed.

Welcome to the March 2021 edition of the performance sheriffing newsletter. Here you’ll find the usual summary of our sheriffing efficiency metrics, followed by an update on what’s new in Perfherder. If you’re interested (and if you have access) you can view the full dashboard.

Sheriffing efficiency

- All alerts were triaged in an average of 1.2 days

- 92% of alerts were triaged within 3 days

- Valid regressions were associated with bugs in an average of 1.5 days

- 96% of valid regressions were associated with bugs within 5 days

Interestingly, the close correlation we’ve seen between alerts and time to bug did not continue into March. It’s not clear why this might be, although there were some temporary adjustments to the sheriffing team during this time. We also saw an increase in the percentage of alert summaries that were marked as invalid, which might have an impact on our sheriffing efficiency.
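For readers curious how figures like the ones above can be derived, here is a minimal sketch. It assumes each alert is represented by a hypothetical pair of timestamps (created, triaged); the sample data is made up, and this is not the query behind the dashboard.

```python
from datetime import datetime, timedelta


def triage_stats(alerts, threshold_days):
    """Return (average days to triage, share of alerts triaged within threshold_days).

    `alerts` is a list of (created, triaged) datetime pairs. This shape is an
    assumption made for illustration only.
    """
    durations = [
        (triaged - created) / timedelta(days=1)  # elapsed time in fractional days
        for created, triaged in alerts
    ]
    average = sum(durations) / len(durations)
    within = sum(d <= threshold_days for d in durations) / len(durations)
    return average, within


# Made-up sample data, not taken from the March report.
sample = [
    (datetime(2021, 3, 1), datetime(2021, 3, 2)),       # 1 day
    (datetime(2021, 3, 3), datetime(2021, 3, 3, 12)),   # 0.5 days
    (datetime(2021, 3, 5), datetime(2021, 3, 9)),       # 4 days
    (datetime(2021, 3, 8), datetime(2021, 3, 10)),      # 2 days
]

average, within = triage_stats(sample, threshold_days=3)
print(f"average: {average:.2f} days, within 3 days: {within:.0%}")
```

The same function covers the time-to-bug metrics if the second timestamp is the time a bug was associated with the alert.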

What’s new in Perfherder?

I last provided an update on Perfherder in July 2020 so felt it was about time to revisit.

Compact Bugzilla summaries & descriptions

Until recently, Perfherder would simply try to include all affected tests and platforms in the summary and description for all regression bugs. Not only did this make the bugs difficult to read, it also meant we hit the maximum field size for regressions that impacted a large number of tests.

Bug 1697112 is an example of how this looked before the recent change. The description contained 24 regression alerts, and 22 improvement alerts. The summary was edited by a performance sheriff to fit within the maximum field size:

4.55 – 18.83% apple ContentfulSpeedIndex … tumblr SpeedIndex (windows10-64-shippable) regression on push 6ea4d69aa5c6c7064d3b4a195bf96617baa3aebf (Thu March 4 2021)

With the recent improvements we limit how many tests are named in the summary and show a count of the omitted tests. We now list common names for the affected platforms, and no longer include the suspected commit hash. For the description, when we have many alerts we now show the most and least affected and indicate that one or more have been omitted for display purposes. Bug 1706333 is an example of the improved description and summary:

122.22 – 2.73% cnn-ampstories FirstVisualChange / ebay ContentfulSpeedIndex + 22 more (Windows) regression on Fri April 16 2021

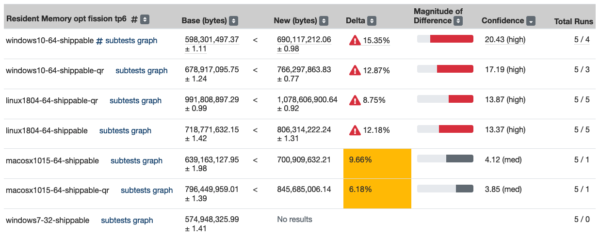
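To illustrate the idea of naming only a few tests and counting the rest, here is a rough sketch. The function name, the `max_named` parameter, the choice to name the first tests in the list, and the sample data are all assumptions for illustration; this is not Perfherder’s actual implementation.

```python
def compact_summary(alerts, platform, date, max_named=2):
    """Build a short bug summary from a list of (test_name, percent_change) pairs.

    Only the first `max_named` tests are named; the rest are reported as a count.
    Which tests get named is an assumption here, not Perfherder's actual rule.
    """
    changes = [pct for _, pct in alerts]
    names = [name for name, _ in alerts]

    named = names[:max_named]
    omitted = len(names) - len(named)

    tests = " / ".join(named)
    if omitted > 0:
        tests += f" + {omitted} more"

    # Largest change first, smallest last, followed by tests, platform and date.
    return (
        f"{max(changes):.2f} – {min(changes):.2f}% {tests} "
        f"({platform}) regression on {date}"
    )


# Hypothetical alerts, made up for this example.
alerts = [
    ("site-a Metric", 30.0),
    ("site-b Metric", 4.5),
    ("site-c Metric", 12.25),
    ("site-d Metric", 8.0),
]

summary = compact_summary(alerts, platform="Windows", date="Fri April 16 2021")
print(summary)
```

Capping the number of named tests keeps the summary length roughly constant no matter how many alerts are involved, which is what avoids hitting the maximum field size.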

Compare view sorting

We’ve added the ability to sort columns in compare view. This is useful when you’re comparing many tests and you’d like to quickly sort the results by confidence, delta, or magnitude.

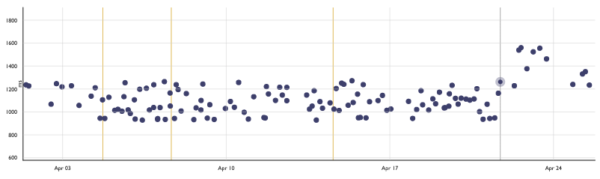
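Conceptually, column sorting comes down to ordering the result rows by a chosen key. The sketch below shows this with made-up rows and hypothetical field names; the compare view itself is part of the Perfherder web UI, so this only illustrates the idea.

```python
def sort_rows(rows, column, descending=True):
    """Return the rows ordered by the given column (field names are hypothetical)."""
    return sorted(rows, key=lambda row: row[column], reverse=descending)


# Made-up comparison results.
rows = [
    {"test": "test-a", "delta": 3.2, "magnitude": 40, "confidence": 1.4},
    {"test": "test-b", "delta": 12.5, "magnitude": 90, "confidence": 6.8},
    {"test": "test-c", "delta": 0.4, "magnitude": 5, "confidence": 0.3},
]

by_confidence = sort_rows(rows, "confidence")
by_delta = sort_rows(rows, "delta", descending=False)
print([row["test"] for row in by_confidence])
```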

Infrastructure changelog

Last year we created a unified changelog consolidating commits from repositories related to our automation infrastructure. Changes to infrastructure can impact our performance results, and time can be wasted investigating regressions in our products that aren’t there. To help with this, we now annotate Perfherder graphs with data from the infrastructure changelog. When one of these markers correlates with an alert it can provide a valuable clue for our sheriffs. The repositories monitored for changes can be found here.
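As a rough illustration of how a changelog marker might be matched to an alert, the sketch below looks for infrastructure changes that landed shortly before an alert. The entry fields, the repository names, and the 24 hour window are all assumptions; sheriffs make this correlation visually on the graph rather than with code like this.

```python
from datetime import datetime, timedelta


def nearby_changes(alert_time, changelog, window=timedelta(hours=24)):
    """Return changelog entries that landed within `window` before the alert.

    Each entry is a dict with hypothetical "repo" and "date" keys.
    """
    return [
        entry
        for entry in changelog
        if timedelta(0) <= alert_time - entry["date"] <= window
    ]


# Made-up changelog entries.
changelog = [
    {"repo": "infra-repo-a", "date": datetime(2021, 3, 10, 6, 0)},
    {"repo": "infra-repo-b", "date": datetime(2021, 3, 5, 9, 0)},
    {"repo": "infra-repo-c", "date": datetime(2021, 3, 11, 8, 0)},
]

matches = nearby_changes(datetime(2021, 3, 10, 12, 0), changelog)
print([entry["repo"] for entry in matches])
```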

Stop alerting on tier 3 jobs

After updating our Performance Regressions Policy to explicitly mention that the sheriffs do not monitor tier 3 jobs, we fixed Perfherder to prevent these from alerting. Anything running below tier 2 is considered unstable, and not a valuable performance indicator.
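The rule itself is simple enough to sketch in a few lines. The `Job` class, its fields, and the job names below are hypothetical and only illustrate the tier check, not Perfherder’s actual code.

```python
from dataclasses import dataclass

# Tier 3 jobs are not monitored by the sheriffs, so tier 2 is the lowest tier that alerts.
MAX_ALERTING_TIER = 2


@dataclass
class Job:
    name: str  # hypothetical job name
    tier: int  # 1 is the most stable tier, 3 the least


def should_alert(job):
    """Return True if performance data from this job may generate alerts."""
    return job.tier <= MAX_ALERTING_TIER


# Made-up jobs.
jobs = [Job("job-a", 1), Job("job-b", 2), Job("job-c", 3)]
alerting = [job for job in jobs if should_alert(job)]
print([job.name for job in alerting])
```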

Reduced data footprint

We have also spent a lot of effort reducing the data footprint of our performance data by updating and enforcing our data retention policy. You can read more about our data footprint in last month’s newsletter.

Email reports

When working on our data retention policy we wanted some way of reporting the signatures that were being deleted, and so we introduced email reports. We’re also now sending reports for automated backfills, and in the future we’d like to generate more reports. If you’re curious, these are being sent to perftest-alerts.

Bug fixes

The following bug fixes are also worth highlighting:

Acknowledgements

These updates would not have been possible without Ionuț Goldan, Alexandru Irimovici, Alexandru Ionescu, Andra Esanu, Beatrice Acasandrei and Florin Strugariu. Thanks also to the Treeherder team for reviewing patches and supporting these contributions to the project. Finally, thank you to all of the Firefox engineers for all of your bug reports and feedback on Perfherder and the performance workflow. Keep it coming, and we look forward to sharing more updates with you all soon.

Summary of alerts

Each month I’ll highlight the regressions and improvements found.

- 😍 20 bugs were associated with improvements

- 🤐 6 regressions were accepted

- 🤩 9 regressions were fixed (or backed out)

- 🤥 0 regressions were invalid

- 🤗 0 regressions are assigned

- 😨 3 regressions are still open

- 😵 1 regression was reopened

Note that whilst I usually allow one week to pass before generating the report, there are still alerts under investigation for the period covered in this article. This means that whilst I believe these metrics to be accurate at the time of writing, some of them may change over time.

I would love to hear your feedback on this article, the queries, the dashboard, or anything else related to performance sheriffing or performance testing. You can comment here, or find the team on Matrix in #perftest or #perfsheriffs.

The dashboard for March can be found here (for those with access).
