I had a brilliant idea! How do I get stakeholders to understand whether the market sees it in the same way?

People in startups try hard to avoid spending time and money on building a product that doesn’t achieve product/market fit, and so do tech companies. Resources are always limited. Deciding where to put those resources is a serious matter in a larger organization, and sometimes the decision is even harder to make there than in a startup.

ChecknShare, an experimental product idea from Mozilla Taipei for improving Taiwanese seniors’ online sharing experience, taught us a lot over several rounds of validation. In our retrospective meeting, we found that the process becomes more efficient when we validate our ideas and communicate with our stakeholders at the same time.

Here are 3 steps that I suggest for validating your idea:

Step 1: Define hypotheses with stakeholders

Having hypotheses in the planning stage is essential, but never forget to include stakeholders when making your beautiful list of hypotheses. Share your product ideas with stakeholders, and ask them if they have any questions. Take their questions into consideration to plan for a method which can cover them all.

Your stakeholders might be too busy to participate in the process of defining the hypotheses. That’s understandable. You just need to be sure they all agree on the hypotheses before you start validating.

Step 2: Identify the purpose of validating your idea

Are you just trying to get some feedback for further iteration? Or do you need to show some results to your stakeholders in order to get engagement or resources from them? The purpose might influence how you select the validation methods.

There are two types of validation methods: qualitative and quantitative. Quantitative methods focus on finding “what the results look like”, while qualitative methods focus on “why/how these results came about”. If you’re trying to get insights for design iteration, knowing “why users have trouble falling in love with your idea” could be your first priority in the validation stage. Things might be different, however, when you’re trying to get your stakeholders to agree.

In ChecknShare’s experience, quantitative results influenced stakeholders much more easily, because concrete numbers were interpreted as a representation of a real-world situation. I’m not saying quantitative methods are “must-dos” during the validation stage, but be sure to select a method that speaks your stakeholders’ language.

Step 3: Select validation methods that validate the hypotheses precisely

With the hypotheses that were acknowledged by your stakeholders and the purpose behind the validation, you can select methods wisely without wasting time on inconsequential work.

In the following, I’m going to introduce the 5 validation methods that we conducted for ChecknShare and the lessons we’ve learned from each of them. I hope these shared lessons can help you find your perfect one. Starting with the qualitative methods:

Qualitative Validation Methods

1. Participatory Workshop

The participatory workshop was our approach to validating the initial ideas generated from the design sprint. During the co-design process, we had 6 participants who matched our target user criteria. We prioritized the scenarios, got first-hand feedback on the ideas, and did quick iterations with our participants. (For more details on how we hosted the workshop, please look at the blog I wrote previously.)

Although hosting a workshop externally can be challenging because of logistical work such as recruiting relevant participants and finding a space large enough to accommodate them, we see a participatory workshop as a fast and effective approach for having early interactions with our target users.

2. Physical pitching survey

To see how our target market reacted to the idea at an early stage, we hosted a pitching session in a local learning center that offered free courses for seniors to learn how to use smartphones. During the pitching session, we handed out paper questionnaires to investigate their smartphone behaviors, their interest in the idea, and their willingness to participate in our future user testing sessions.

The pitching session in a local learning center

It was our first time experimenting with a physical survey instead of sitting in the office and deploying surveys through virtual platforms. A physical survey isn’t the best approach for getting a massive number of responses in a short time. However, we got a chance to talk to real people, see their emotional reactions as we pitched the idea, recruit user testing participants, and pilot test a potential channel for our future go-to-market strategy.

Moreover, we invited our stakeholders to attend the pitching session. It gave them a chance to be immersed in the environment and feel more empathy for our target users. This priceless experience made our later conversations with stakeholders more grounded when we were evaluating the risk and potential of a target user group that the team wasn’t very familiar with.

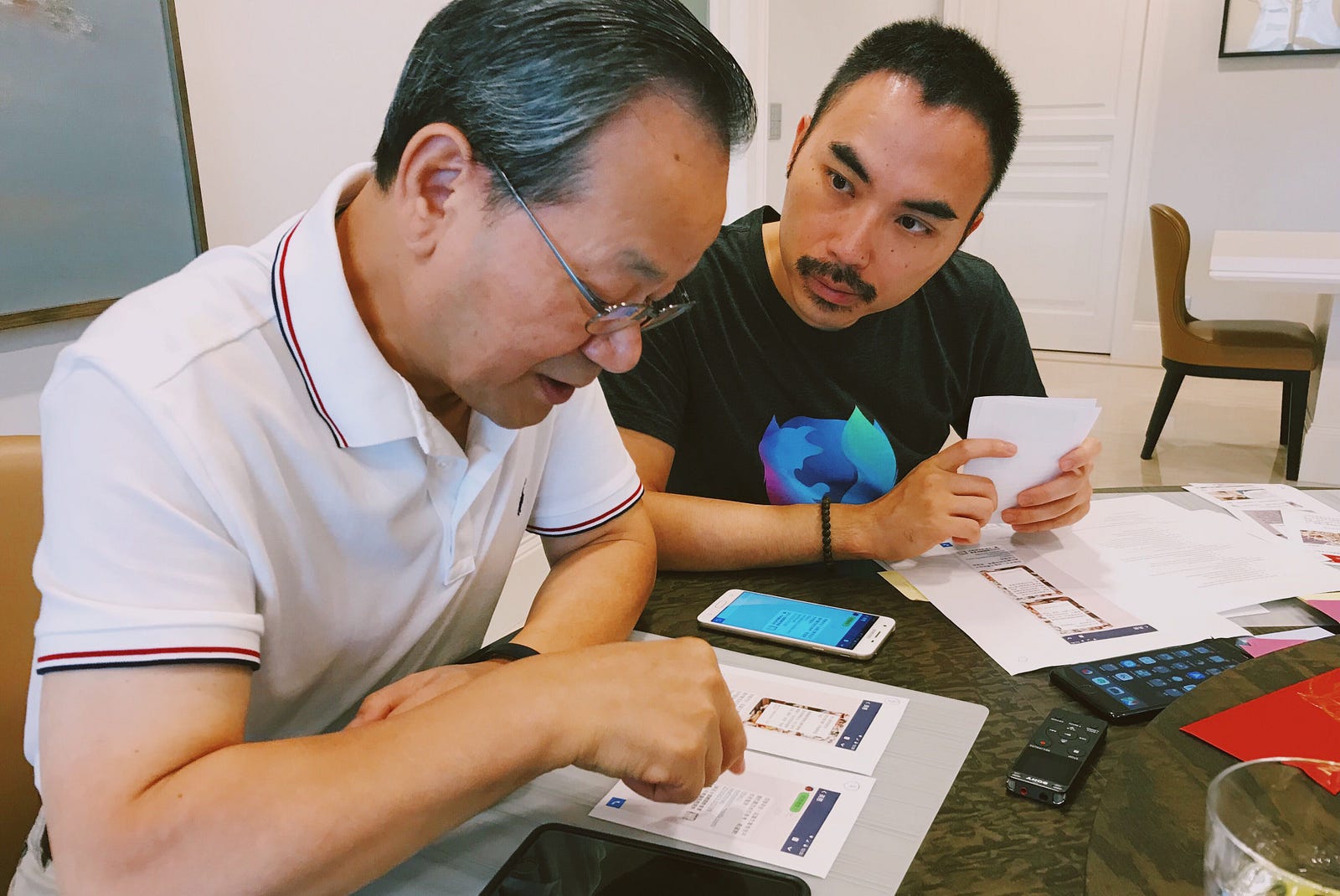

Our stakeholders were chatting with seniors during the pitching session

3. User Testing

During user testing, we focused on the satisfaction level of the product features and the usability of the UI flow. For the usability testing, we provided several pairs of paper prototypes to A/B test participants’ understanding of the copy and UI design, and an interactive prototype to see if they could accomplish the tasks we assigned. The feedback indicated the areas that needed to be tweaked in the following iteration.

A/B Testing the product feature by using paper prototypes

Quantitative Validation Methods

When we realized that qualitative results didn’t speak our stakeholders’ language, we went back to gather our stakeholders’ questions as a whole and applied quantitative methods to answer them. Here are the 2 methods we applied:

4. Online Survey

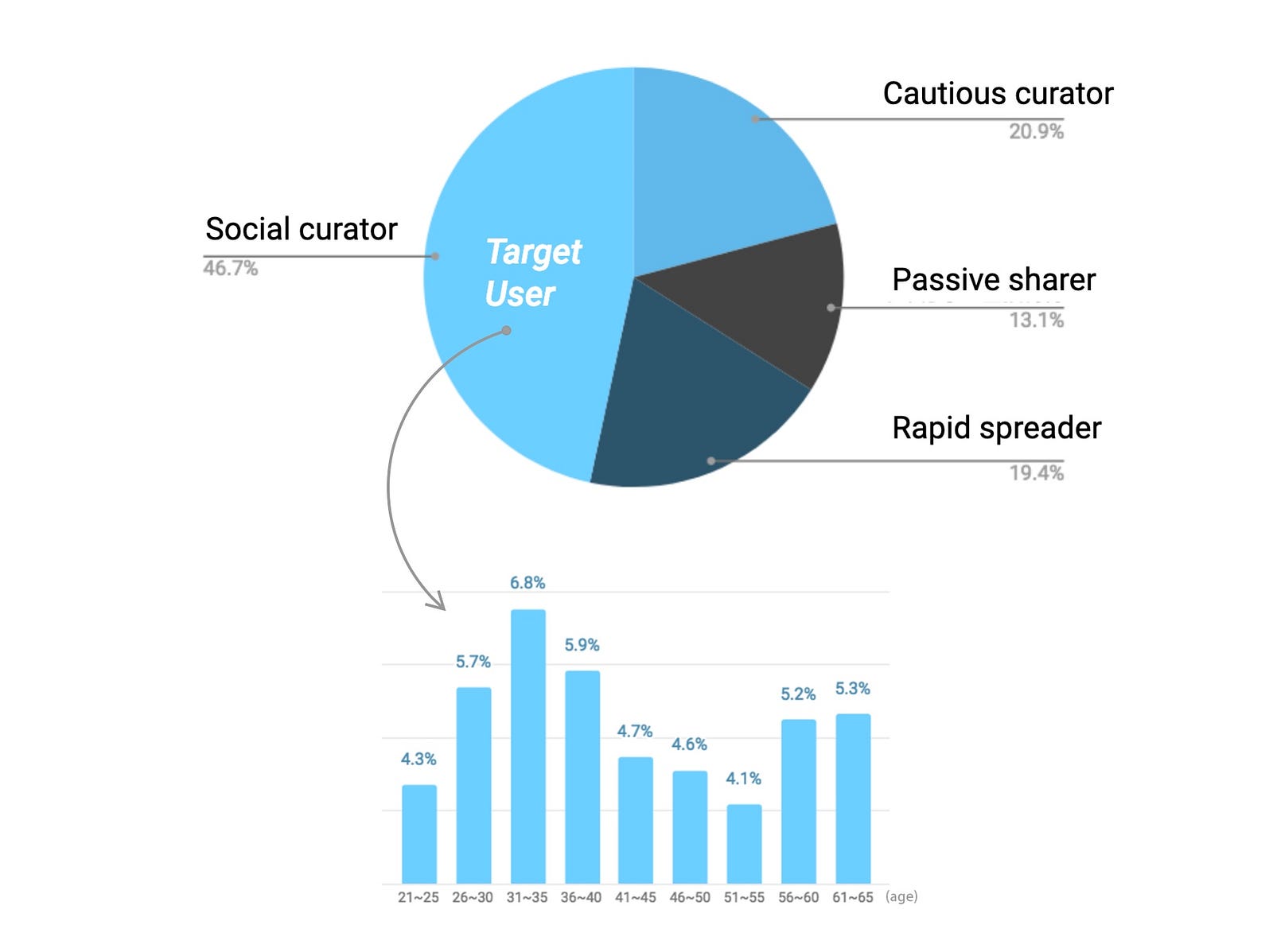

To understand the potential market size and the product value proposition, both of which our stakeholders consider very important, we designed an online survey that investigated current sharing behavior and feature preferences across different ages. It helped us see the priority of the features and whether any other user segments were similar to seniors.

The pie chart and bar chart reveal the portion of our target users

The EDM we sent out for spreading the online survey

The challenge of conducting an online survey is to find an efficient deployment channel with less bias. Since the age range of our target respondents was quite wide (from age 21 to 65, 9 segments), conducting the online survey was more time-consuming than we expected. To get at least 50 responses from each age bracket, we delivered survey invitations through Mozilla Taiwan’s social account, sent out an EDM in collaboration with our media partner, and also bought responses from Survey Monkey.
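When responses arrive from several channels, a small tally makes it easy to see which age brackets still fall short of the minimum. The following is a minimal Python sketch, not the tooling we actually used. It assumes the 9 segments are equal 5-year brackets (21-25 through 61-65), which is only a guess at the exact boundaries, and the sample ages are made up for illustration.

```python
from collections import Counter

MIN_AGE, MAX_AGE = 21, 65
BRACKET_WIDTH = 5          # assumption: 9 equal brackets, 21-25 ... 61-65
TARGET_PER_BRACKET = 50    # at least 50 responses per age bracket


def bracket_label(age):
    """Return a label like '21-25' for an in-range age, else None."""
    if not MIN_AGE <= age <= MAX_AGE:
        return None
    start = MIN_AGE + ((age - MIN_AGE) // BRACKET_WIDTH) * BRACKET_WIDTH
    return f"{start}-{start + BRACKET_WIDTH - 1}"


def remaining_quota(ages):
    """Map each bracket to how many more responses it still needs."""
    counts = Counter(label for label in map(bracket_label, ages) if label)
    all_labels = [bracket_label(a) for a in range(MIN_AGE, MAX_AGE + 1, BRACKET_WIDTH)]
    return {label: max(0, TARGET_PER_BRACKET - counts[label]) for label in all_labels}


# Hypothetical respondent ages, for illustration only.
sample_ages = [22, 24, 31, 64, 65, 70]
print(remaining_quota(sample_ages))
```

Out-of-range ages (such as 70 in the sample) are ignored rather than forced into a bracket, so the tally only reflects respondents in the targeted range.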

When we reviewed the full survey results with our stakeholders, we had a constructive discussion and made progress on defining our target audience and value proposition based on solid numbers. An online survey is easier to run if its scope covers a narrower age range. To make constructive discussions happen earlier, we’d suggest running a quick survey once the product concept is settled.

5. Landing Page Test

We couldn’t rely on a survey alone to gauge participants’ willingness to download the app, since it’s very hard to avoid leading questions. Therefore, the team decided to run a landing page test and see how the real market reacted to the product concept. We designed a landing page which contained a key message, an introduction to the top 3 features, several CTA buttons for email signup, and a hidden email collection section that only showed when a participant clicked on a CTA button. We intentionally kept the page structure similar to a common landing page. (Have no idea what a landing page test is? Scott McLeod published a thorough landing page test guide which might be very helpful for you :)) Along with the landing page, we had an ad banner consistent with our landing page design.

We ran our ad on Google Display Network for 5 days and got 10x more visitors than the number of responses to the earlier online survey, the largest number of participants among all the validations we conducted. The CTR and conversion rate were quite persuasive, so ChecknShare finally got support from our stakeholders, and the team was able to start thinking in more detail about design implementation.

Landing page tests are uncommon in Taiwan’s software industry, not to mention for testing product concepts for seniors. We weren’t very confident about getting reliable results at the beginning, but the test ended up reaching more seniors than any other method in our long validation journey. Here are some suggestions for running a landing page test:

- Set success criteria with stakeholders before running the test.

Finding a reasonable benchmark target is essential. There’s no such thing as an absolute number for setting a KPI because it can vary depending on the region, acquisition channels, and the product category.

- Make sure your copy can deliver the key product values in a 5–10 second read.

The copy on both the ad and the landing page should be simple, clear, and touching. Simply pilot testing the copy with fresh eyes can be very insightful for copy iterations.

- Reduce any factors that might influence the reading experience.

Don’t let the website design ruin your test results. Remember to check the accessibility of your website (especially text size and contrast ratio). Pairing comprehensible illustrations, UI screens or even some animation of the UI flow with your copy can be very helpful in making it easier to understand.
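To make the first suggestion concrete, the success criteria can be written down as numbers before the test and checked afterwards. The sketch below is a rough Python illustration under a few assumptions: CTR is taken as ad clicks divided by ad impressions, conversion rate as email signups divided by landing page visitors, and all figures and targets are placeholders rather than ChecknShare’s actual results.

```python
def rate(numerator, denominator):
    """Return numerator / denominator, or 0.0 when there is no traffic."""
    return numerator / denominator if denominator else 0.0


def evaluate_test(impressions, clicks, visitors, signups, targets):
    """Compare measured CTR and conversion rate with pre-agreed targets."""
    measured = {
        "ctr": rate(clicks, impressions),             # assumed: clicks / impressions
        "conversion_rate": rate(signups, visitors),   # assumed: signups / visitors
    }
    return {
        name: {"value": value, "target": targets[name], "met": value >= targets[name]}
        for name, value in measured.items()
    }


# Placeholder numbers and targets, not ChecknShare's actual results.
targets = {"ctr": 0.005, "conversion_rate": 0.03}
report = evaluate_test(
    impressions=100_000, clicks=800, visitors=750, signups=30, targets=targets
)
for name, row in report.items():
    print(f"{name}: {row['value']:.2%} (target {row['target']:.2%}) met={row['met']}")
```

Agreeing on the `targets` values with stakeholders up front is the important part. The arithmetic itself is trivial, but it keeps the post-test discussion about whether the bar was met rather than where the bar should have been.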

The endless quantitative-qualitative dilemma

“What if I don’t have sufficient time to do both qualitative and quantitative testing?” you might ask.

We believe that having both qualitative and quantitative results is important. Each supports the other. If you don’t have time to do both, take a step back, talk with your stakeholders, and think about which criteria most need to be true for the product to succeed.

There’s no perfect method to validate all types of hypotheses precisely. Keep asking yourself why you need to do this validation, and be creative.