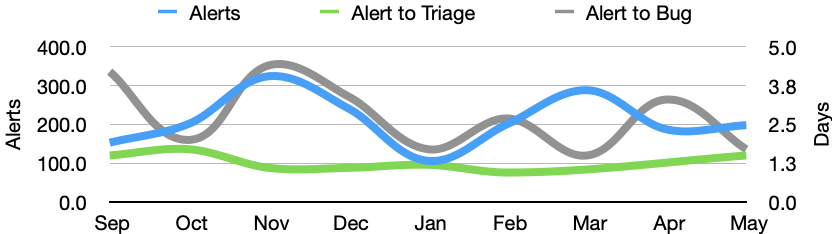

In May there were 198 alerts generated, resulting in 27 regression bugs being filed, on average, 4.5 days after the regressing change landed.

Welcome to the May 2021 edition of the performance sheriffing newsletter. Here you’ll find the usual summary of our sheriffing efficiency metrics, followed by an update on our migration to browsertime as our primary tool for browser automation. If you’re interested (and if you have access) you can view the full dashboard.

Sheriffing efficiency

- All alerts were triaged in an average of 1.5 days

- 91% of alerts were triaged within 3 days

- Valid regressions were associated with bugs in an average of 1.7 days

- 100% of valid regressions were associated with bugs within 5 days
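As a rough illustration of how figures like these can be derived, here is a minimal sketch. It assumes each alert is recorded as a pair of timestamps (when it was created and when it was triaged). The function name `triage_metrics` and the sample data are hypothetical and are not taken from the dashboard queries.

```python
from datetime import datetime, timedelta

SECONDS_PER_DAY = 86400


def triage_metrics(alerts, threshold_days=3):
    """Return (average days to triage, percent triaged within threshold_days).

    `alerts` is a list of (created, triaged) datetime pairs. This shape is an
    assumption made for the example, not the real alert schema.
    """
    if not alerts:
        raise ValueError("at least one alert is required")
    # Elapsed time between creation and triage, expressed in days.
    durations = [
        (triaged - created).total_seconds() / SECONDS_PER_DAY
        for created, triaged in alerts
    ]
    average_days = sum(durations) / len(durations)
    within = sum(1 for d in durations if d <= threshold_days)
    percent_within = within / len(durations) * 100
    return average_days, percent_within


# Hypothetical sample: four alerts triaged after 1, 2, 1 and 4 days.
created = datetime(2021, 5, 1)
sample_alerts = [
    (created, created + timedelta(days=days)) for days in (1, 2, 1, 4)
]
average_days, percent_within = triage_metrics(sample_alerts)
print(f"average: {average_days:.1f} days, within 3 days: {percent_within:.0f}%")
```

The same function could be applied to the bug association metrics by passing pairs of alert creation time and bug association time, with a threshold of 5 days.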

In April we enabled automatic backfills for alerts on Linux and Windows. This means that whenever we generate an alert summary for these platforms, we now automatically trigger the affected tests against additional pushes. This is typically the first thing a sheriff will do when triaging an alert, and whilst it isn’t a time-consuming task, the triggered jobs can take a while to run. By automating this, we increase the chance of our sheriffs having the additional context needed to identify the push that caused the alert at the time of triage.

If successful, automatic backfills should reduce the time between the alert being generated and the regression bug being opened. Whilst the results for May look promising, we have seen some fluctuation in this metric, so I’ll hold off on any celebrations for now.
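To make the idea of a backfill concrete, here is a minimal sketch of choosing which pushes to trigger the affected tests against. It is not the actual implementation. It assumes pushes are identified by ordered integer IDs, that we know which pushes already have results for the test, and that a fixed window of neighbouring pushes is enough. The function `pushes_to_backfill`, the `window` parameter, and the sample IDs are all hypothetical.

```python
def pushes_to_backfill(push_ids, alert_push, have_data, window=2):
    """Return the pushes around `alert_push` that lack results for the test.

    push_ids:   push IDs in landing order (assumed shape for this example)
    alert_push: the push the alert was generated against
    have_data:  set of push IDs that already have results
    window:     how many pushes to consider on each side (assumed value)
    """
    index = push_ids.index(alert_push)
    start = max(0, index - window)
    end = min(len(push_ids), index + window + 1)
    # Only pushes without existing results need the test triggered.
    return [push for push in push_ids[start:end] if push not in have_data]


# Hypothetical example: the test only ran on every third push.
push_ids = [100, 101, 102, 103, 104, 105, 106]
have_data = {100, 103, 106}
to_trigger = pushes_to_backfill(push_ids, alert_push=103, have_data=have_data)
print(to_trigger)
```

With results available for the neighbouring pushes, a sheriff can narrow a change in the numbers down to a single push rather than a range of them.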

Revisiting Browsertime

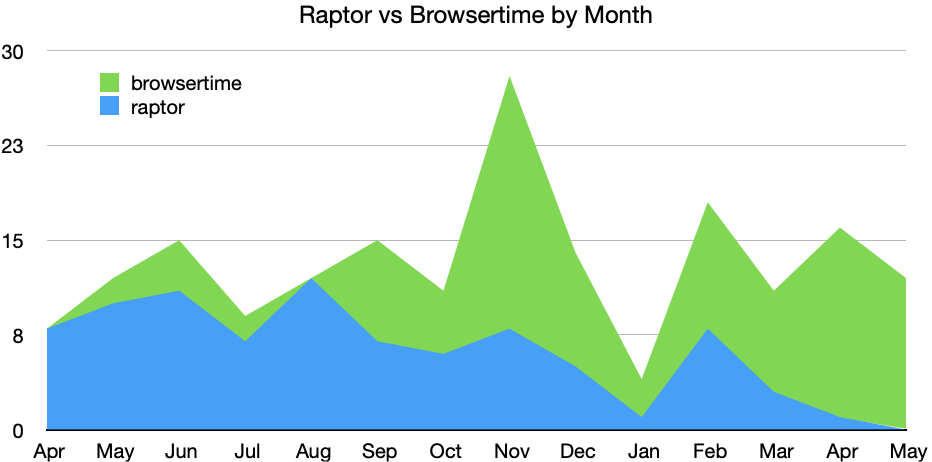

Back in November’s newsletter I shared our plans to finish migrating all of our page load tests away from our own web extension solution to the popular browsertime open source project. I’m happy to report that this was completed early in February, and has since been followed by migration of most of our benchmark tests to browsertime.

Looking back, we saw our first alert from tests using browsertime in May 2020, so it’s somewhat neat to see that one year on we have now migrated all of our sheriffed tests, and May 2021 was the first month where we recorded no alerts from the web extension. We still have a few remaining tests to migrate before we can remove all the legacy code from our test harness, but I anticipate this happening sometime this year.

Summary of alerts

Each month I’ll highlight the regressions and improvements found.

- 😍 13 bugs were associated with improvements

- 🤐 6 regressions were accepted

- 🤩 10 regressions were fixed (or backed out)

- 🤥 0 regressions were invalid

- 🤗 0 regressions are assigned

- 😨 8 regressions are still open

- 😵 0 regressions were reopened

Note that whilst I usually allow one week to pass before generating the report, there are still alerts under investigation for the period covered in this article. This means that whilst I believe these metrics to be accurate at the time of writing, some of them may change over time.

I would love to hear your feedback on this article, the queries, the dashboard, or anything else related to performance sheriffing or performance testing. You can comment here, or find the team on Matrix in #perftest or #perfsheriffs.

The dashboard for May can be found here (for those with access).
