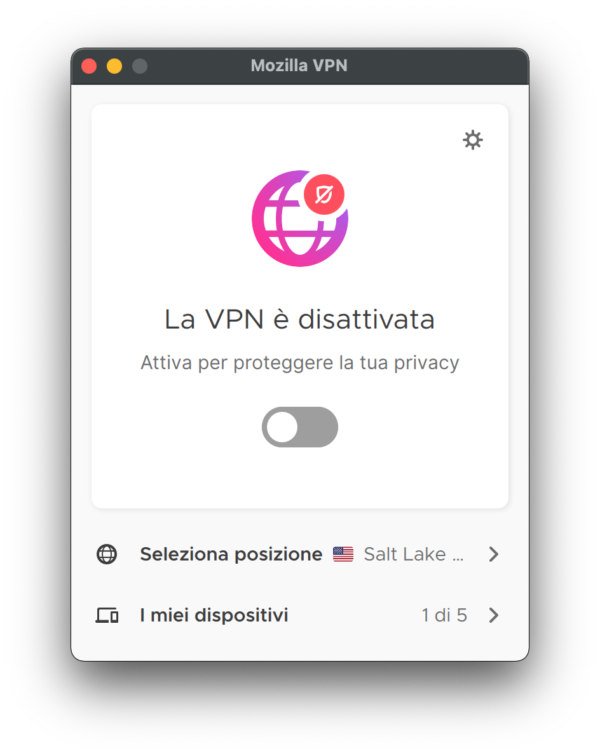

On April 28th, Mozilla successfully launched its VPN Client in two new countries: Germany and France. While the VPN Client has been available since 2020 in several countries (U.S., U.K., Canada, New Zealand, Singapore, and Malaysia), the user interface was only available in English.

This blog post describes the process and steps needed to make this type of product localizable within the Mozilla ecosystem.

How It Begins

Back in October 2020, the small team working on this project approached me with a request: we plan to do a complete rewrite of the existing VPN Client with Qt, using one codebase for all platforms, and we want to make it localizable. How can we make it happen?

First of all, let me stress how important it is for a team to reach out as early as possible. That allows us to understand existing limitations, explain what we can realistically support, and set clear expectations. It’s never fun to find yourself backed into a corner, late in the process and with deadlines approaching.

Initial Localization Setup

This specific project was definitely an interesting challenge, since we didn’t have any prior experience with Qt, and we needed to make sure the project could be supported in Pontoon, our internal Translation Management System (TMS).

The initial research showed that Qt natively uses an XML format (TS File), but that would have required resources to write a parser and a serializer for Pontoon. Luckily, Qt also supports import and export from a more common standard, XLIFF.

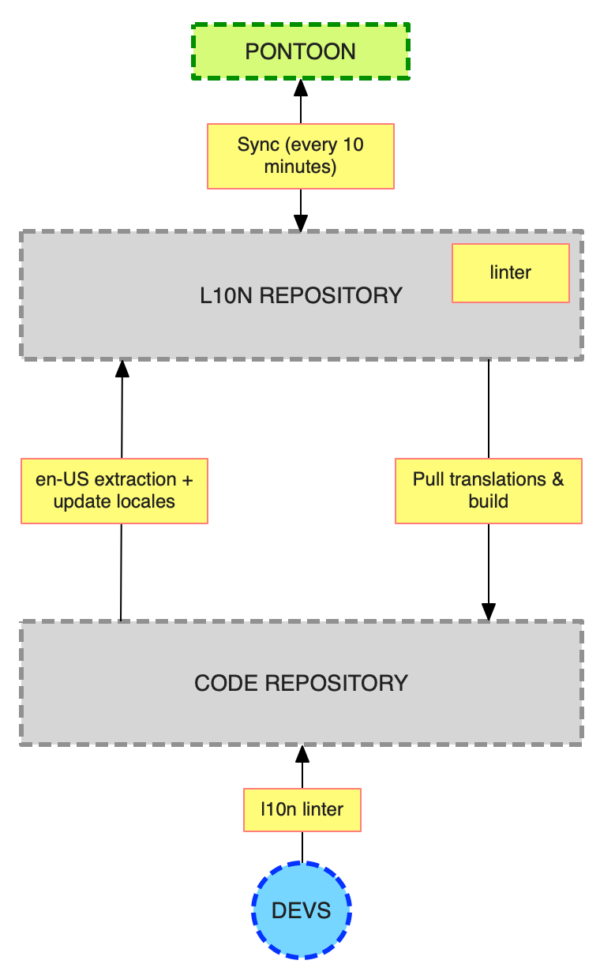

The next step is normally to decide how to structure the content: do we want the TMS to write directly in the main repository, or do we want to use an external repository exclusively for l10n? In this case, we opted for the latter, also considering that the main repository was still private at the time.

Once settled on the format and repository structure, the next step is to do a full review of the existing content:

- Check every string for potential localizability issues.

- Add comments where the content is ambiguous or there are variables replaced at run-time.

- Check consistency issues in the en-US content, in case the content hasn’t been reviewed or created by our very capable Content Team.

It’s useful to note that this process heavily depends on the Localization Project Manager assigned to a project, because there are different skill sets in the team. For example, I have a very hands-on approach, often writing patches directly to fix small issues like missing comments (that normally helps reduce the time needed for fixes).

In my case, this is the ideal approach:

- After review, set up the project in Pontoon as a private project (only accessible to admins).

- Actually translate the project into Italian. That allows me to verify that everything is correctly set up in Pontoon and, more importantly, it allows me to identify issues that I might have missed in the initial review. It’s amazing how differently your brain works when you’re just looking at content, and when you’re actually trying to translate it.

- Test a localized build of the product. In this way I can verify that we are able to use the output of our TMS, that the build system works as expected, and that there are no errors (hard-coded content, strings reused in different contexts, etc.).

This whole process typically requires at least a couple of weeks, depending on how many other projects are active at the same time.

Scale and Automate

I’m a huge fan of automation when it comes to getting rid of repetitive tasks, and I’ve come to learn a lot about GitHub Actions working on this project. Luckily, that knowledge helped in several other projects later on.

The first thing I noticed is that I was often commenting on two kinds of issues in the source (en-US) strings: typographic issues (straight quotes, three dots instead of an ellipsis character) and missing comments when a string has variables. So I wrote a very basic linter that runs in automation every time a developer adds new strings in a pull request.
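To give an idea of what such a source linter can look like, here is a minimal sketch in Python. It is not the actual script used in the project. It assumes Qt-style numbered placeholders (`%1`, `%2`), localization comments stored in a `note` element, and an XLIFF file without XML namespaces. The `vpn.example.*` IDs and the `lint_source` function name are hypothetical.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical sample: only vpn.aboutUs.tos comes from the real project.
SAMPLE = """<xliff version="1.2">
  <file original="../src/ui/components/VPNAboutUs.qml" datatype="plaintext">
    <body>
      <trans-unit id="vpn.aboutUs.tos">
        <source>Terms of Service</source>
      </trans-unit>
      <trans-unit id="vpn.example.loading">
        <source>Loading...</source>
      </trans-unit>
      <trans-unit id="vpn.example.devices">
        <source>%1 of %2 devices</source>
      </trans-unit>
    </body>
  </file>
</xliff>"""

# Assumption: variables use Qt-style numbered placeholders such as %1.
PLACEHOLDER = re.compile(r"%\d+")


def lint_source(xliff_text):
    """Return a list of human-readable issues found in source strings."""
    errors = []
    root = ET.fromstring(xliff_text)
    for unit in root.iter("trans-unit"):
        string_id = unit.get("id")
        source = unit.findtext("source", default="")
        note = (unit.findtext("note") or "").strip()

        if "'" in source or '"' in source:
            errors.append(f"{string_id}: straight quote in source text")
        if "..." in source:
            errors.append(f"{string_id}: three dots instead of ellipsis")
        if PLACEHOLDER.search(source) and not note:
            errors.append(f"{string_id}: variables but no comment")
    return errors


if __name__ == "__main__":
    for error in lint_source(SAMPLE):
        print(error)
```

In a pull request check, a non-empty list of issues would typically translate into a failing job, so the developer can fix the strings before they reach localizers.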

The bulk of the automation lives in the l10n repository:

- There’s automation, running daily, that extracts strings from the code repository, and creates a PR exposing them to all locales.

- There’s a basic linter that checks for issues in the localized content, in particular missing variables. That happens more often than it should, mostly because the placeholder format is different from what localizers are used to, and there might be Translation Memory matches — strings already translated in the past in other products — coming from different file formats.
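The check for missing variables can be sketched in a few lines of Python. This is an illustration rather than the project's linter: it assumes Qt-style numbered placeholders (`%1`, `%2`), and the function name `missing_placeholders` and the example strings are hypothetical.

```python
import re
from collections import Counter

# Assumption: variables use Qt-style numbered placeholders such as %1.
PLACEHOLDER = re.compile(r"%\d+")


def missing_placeholders(source, target):
    """Return the placeholders used in source that are absent from target."""
    if not target:
        # Untranslated strings are not errors.
        return []
    needed = Counter(PLACEHOLDER.findall(source))
    found = Counter(PLACEHOLDER.findall(target))
    # Counter subtraction keeps only placeholders still owed by the target.
    return sorted((needed - found).elements())


if __name__ == "__main__":
    # A correct translation keeps both placeholders.
    print(missing_placeholders("%1 of %2 devices", "%1 di %2 dispositivi"))
    # A Translation Memory match from another format uses the wrong syntax.
    print(missing_placeholders("%1 of %2 devices", "{0} di {1} dispositivi"))
```

The second call shows the typical failure mode described above: the translation looks complete, but its placeholders come from a different file format and would never be replaced at run-time.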

The update automation was particularly interesting. Extracting new en-US strings is relatively easy, thanks to Qt command line tools, although there is some work needed to clean up the resulting XLIFF (for example, moving localization comments from extracomment to note).
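As an illustration of this kind of cleanup, the sketch below renames comment elements in Python. It rests on assumptions that may not match the real export: that comments appear as an `extracomment` child of each `trans-unit`, and that the file has no XML namespaces. The sample string, its ID, and the function name `move_comments_to_notes` are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample of an exported file before cleanup.
SAMPLE = """<xliff version="1.2">
  <file original="../src/ui/components/VPNAboutUs.qml" datatype="plaintext">
    <body>
      <trans-unit id="vpn.example.devices">
        <source>%1 of %2 devices</source>
        <extracomment>%1 is the number of active devices, %2 the maximum.</extracomment>
      </trans-unit>
    </body>
  </file>
</xliff>"""


def move_comments_to_notes(xliff_text):
    """Rename every extracomment child of a trans-unit to note."""
    root = ET.fromstring(xliff_text)
    for unit in root.iter("trans-unit"):
        for comment in unit.findall("extracomment"):
            # Renaming the tag keeps the comment text and its position.
            comment.tag = "note"
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    print(move_comments_to_notes(SAMPLE))
```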

In the process of adding new locales, we quickly realized that updating only the reference file (en-US) was not sufficient, because Pontoon expects each localized XLIFF to have all source messages, even if untranslated.

Historically that was the case for other bilingual file formats — files that contain both source and translation — like .po (GetText) and .lang files, but it is not necessarily true for XLIFF files. In particular, both those formats come with their own set of tools to merge new strings from a template into other locales, but that’s not available for XLIFF, which is an exchange format used across completely different tools.

At this point, I needed automation to solve two separate issues:

- Add new strings to all localized files when updating en-US.

- Catch unexpected string changes. If a string changes without a new ID, it doesn’t trigger any action in Pontoon (existing translations are kept, localizers won’t be aware of the change). So we need to make sure those are correctly managed.

This is what a string looks like in the source XLIFF file:

    <file original="../src/ui/components/VPNAboutUs.qml" datatype="plaintext">
      <body>
        <trans-unit id="vpn.aboutUs.tos">
          <source>Terms of Service</source>
        </trans-unit>
      </body>
    </file>

These are the main steps in the update script:

- It takes the en-US XLIFF file, and uses it as a template.

- It reads each localized file, saving existing translations. These are stored in a dictionary, where the key is generated using the original attribute of the file element, the string ID from the trans-unit, and a hash of the actual source string.

- Translations are then injected in the en-US template and saved, overwriting the existing localized file.

Using the en-US file as template ensures that the file includes all the strings. Using the hash of the source text as part of the ID will remove translations if the source string changed (there won’t be a translation matching the ID generated while walking through the en-US file).
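The logic can be sketched in Python as follows. This is a simplified illustration, not the actual update script: it works on strings instead of files, assumes XLIFF without XML namespaces, and uses SHA-256 as the hash. The function names are hypothetical.

```python
import hashlib
import xml.etree.ElementTree as ET


def message_key(original, string_id, source_text):
    """Build a key from the file's original attribute, the ID and a source hash."""
    digest = hashlib.sha256(source_text.encode("utf-8")).hexdigest()
    return f"{original}:{string_id}:{digest}"


def collect_translations(localized_text):
    """Store the existing translations of a localized file in a dictionary."""
    translations = {}
    root = ET.fromstring(localized_text)
    for file_node in root.iter("file"):
        original = file_node.get("original")
        for unit in file_node.iter("trans-unit"):
            target = unit.findtext("target")
            if target:
                source = unit.findtext("source", default="")
                key = message_key(original, unit.get("id"), source)
                translations[key] = target
    return translations


def update_locale(reference_text, localized_text):
    """Inject existing translations into a copy of the en-US template."""
    translations = collect_translations(localized_text)
    root = ET.fromstring(reference_text)
    for file_node in root.iter("file"):
        original = file_node.get("original")
        for unit in file_node.iter("trans-unit"):
            source_node = unit.find("source")
            if source_node is None:
                continue
            key = message_key(original, unit.get("id"), source_node.text or "")
            if key in translations:
                target = ET.Element("target")
                target.text = translations[key]
                # Place the target right after the source element.
                unit.insert(list(unit).index(source_node) + 1, target)
    return ET.tostring(root, encoding="unicode")
```

Because the key includes a hash of the source text, a translation stored for an older version of a string never matches the key computed from the template, so that string ends up untranslated in the output and shows up as missing for localizers.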

Testing

How do you test a project that is not publicly available, and requires a paid subscription on top of that? Luckily, the team came up with the brilliant idea of creating a WASM online application to allow our volunteers to test their work, including parts of the UI or dialogs that wouldn’t normally be exposed in the main user interface.

Localized strings are automatically imported in the build process (the l10n repository is configured as a submodule in the code repository), and screenshots of the app are also generated as part of the automation.

Conclusions

This was a very interesting project to work on, and I consider it to be a success case, especially when it comes to cooperation between different teams. A huge thanks to Andrea, Lesley, and Sebastian for always being supportive and helpful in this long process, and constantly caring about localization.

Thanks to the amazing work of our community of localizers, we were able to exceed the minimum requirements (support French and German): on launch day, Mozilla VPN Client was available in 25 languages.

Keep in mind that this was only one piece of the puzzle in terms of supporting localization of this product: there is web content localized as part of mozilla.org, parts of the authentication flow managed in a different project, payment support in Firefox Accounts, legal documents and user documentation localized by vendors, and SUMO pages.
