One of the missions of Pontoon since its inception has been to host critical information needed for localization under one roof. Over the course of the last few months several pieces of that puzzle have been solved.

Translation errors and warnings

Until recently, a vital piece of information was missing from Pontoon dashboards, namely translation errors and warnings. Localizers had to hunt for them on the standalone product dashboard, where they appeared 20-30 minutes after being submitted in Pontoon. If they wanted to fix an error, they had to go back to Pontoon, submit a (hopefully) valid translation, and wait another 20-30 minutes to see the product dashboard go green.

As you can imagine, very few localizers actually did that. So in practice it was mostly project managers who were looking for errors, submitting suggestions with fixes and pinging localizers to approve them.

The first step towards bringing errors and warnings closer to localizers’ attention was made back in May, when we started using compare-locales checks in Pontoon. These checks are split into two groups. The first consists of checks that translations must pass before landing in localized products (e.g. Firefox builds). Pontoon prevents translations failing those checks from even being saved. The second group contains looser checks, which aren’t required in the build process and can be bypassed in Pontoon.
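To make the two groups concrete, here is a minimal sketch of how such gating could work. It is not Pontoon's or compare-locales' actual code: the `CheckResult` class, the `run_checks` and `can_save` functions, and the two toy checks (placeholder mismatch as an error, trailing period mismatch as a warning) are all invented for illustration.

```python
import re
from dataclasses import dataclass, field
from typing import List


@dataclass
class CheckResult:
    errors: List[str] = field(default_factory=list)    # strict group: block saving
    warnings: List[str] = field(default_factory=list)  # looser group: can be bypassed


def run_checks(source: str, translation: str) -> CheckResult:
    """Toy stand-in for real checks; both rules below are hypothetical."""
    result = CheckResult()

    # Hypothetical strict check: printf-style placeholders must match.
    placeholder = re.compile(r"%[sd]")
    if sorted(placeholder.findall(source)) != sorted(placeholder.findall(translation)):
        result.errors.append("placeholder mismatch")

    # Hypothetical loose check: trailing period should match the source.
    if source.endswith(".") != translation.endswith("."):
        result.warnings.append("trailing period mismatch")

    return result


def can_save(result: CheckResult, ignore_warnings: bool = False) -> bool:
    """Errors always block saving; warnings block only until bypassed."""
    if result.errors:
        return False
    if result.warnings and not ignore_warnings:
        return False
    return True
```

In this sketch, a translation that drops a placeholder can never be saved, while one that only drops a trailing period can be saved once the localizer chooses to ignore the warning.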

Since May we have extended compare-locales support from the basic translation workbench to all places where translations can enter Pontoon, including batch actions, file upload, and import from VCS. Finally, we ran the checks on all translations previously stored in Pontoon.

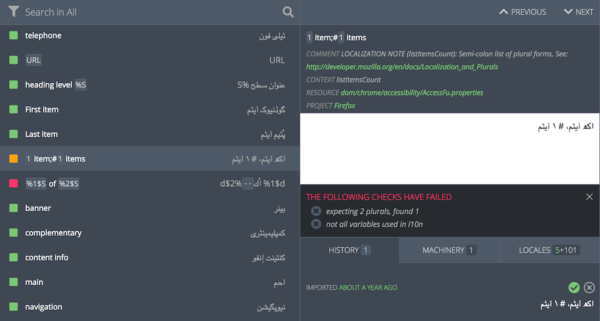

Now that we had the data about failing checks, we started integrating them into the localizers’ workflow, so they could be fixed easily. We introduced two new translation statuses: Errors for translations failing checks from the stricter group, and Warnings for translations failing checks that can be bypassed.

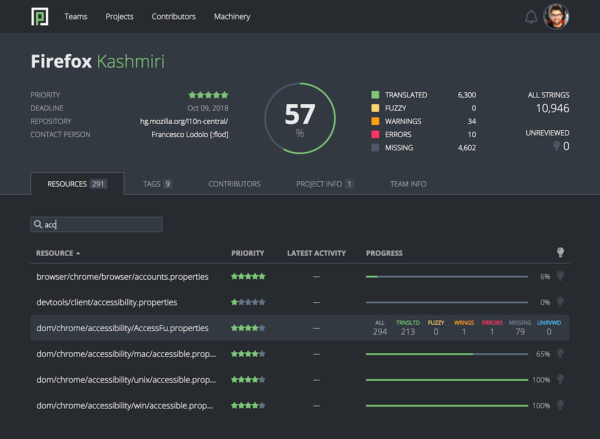

Next, we started calculating Errors & Warnings counts and revealing them on dashboards. Shortly afterwards, we implemented corresponding filters for quick access to strings with failing checks.

Errors (red) and warnings (orange) are marked with their respective colors in the string list. The list of failing checks appears in the editor upon opening the string.
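The statuses, counts and filters described above can be sketched roughly as follows. This is an illustration only, not Pontoon's data model: the `Translation` class and the `status`, `count_statuses` and `filter_by_status` functions are hypothetical names, and treating a translation that has both errors and warnings as an error is an assumption.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List


@dataclass
class Translation:
    string: str
    errors: List[str] = field(default_factory=list)
    warnings: List[str] = field(default_factory=list)


def status(translation: Translation) -> str:
    """Errors take precedence over warnings (an assumption of this sketch)."""
    if translation.errors:
        return "errors"
    if translation.warnings:
        return "warnings"
    return "ok"


def count_statuses(translations: List[Translation]) -> Counter:
    """Aggregate per-status counts, as a dashboard might display them."""
    return Counter(status(t) for t in translations)


def filter_by_status(translations: List[Translation], wanted: str) -> List[Translation]:
    """Return only the translations with the requested status."""
    return [t for t in translations if status(t) == wanted]
```

A dashboard would then show `count_statuses(...)["errors"]` next to the other stats, and the Errors filter would list whatever `filter_by_status(..., "errors")` returns.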

Finally, the effort spanning multiple quarters started paying off. In the 7 days since the (silent) launch, the number of errors across all localizations went down by 42%. The number of warnings (which include false positives) decreased by 10%.

A huge shout-out to jotes, the powerhouse behind landing translation errors and warnings in Pontoon! As part of this effort he not only fixed 8 bugs with a total of 3,000 lines worth of changesets, but also designed the development process we used.

Including all localizations

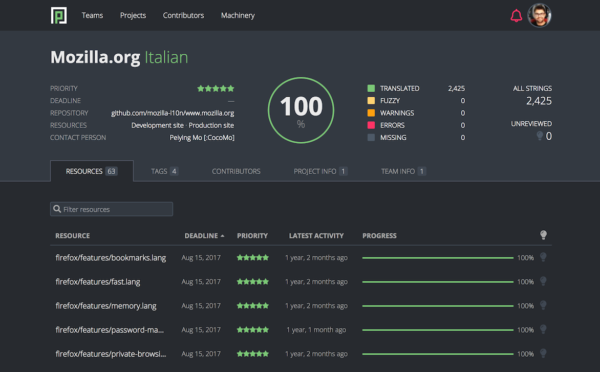

Another important piece of information that recently made it into Pontoon is data on localizations that don’t take place in Pontoon. We introduced the ability to enable such localizations in read-only mode, so project managers can now access full project stats in dashboards and the API. Additionally, all Mozilla translations are now accessible in the Locales tab, and the Translation Memory of teams that localize some of their projects outside Pontoon now also includes those translations.

Conversely, we removed system projects (Tutorial and Pontoon Intro) from dashboards, which means the dashboards now contain all of the relevant stats, and only the relevant stats.

Resource deadlines and priorities

The most critical piece of information coming from the tags feature is resource priority. It used to be pretty hard to discover, because it was only available in the Tags tab, which localizers rarely use. Back in July we fixed that by exposing resource priority in the Resources tab of the Localization dashboard. Similarly, we added a resource deadline column.

As a consequence of these two changes, localizers can now see resource priorities and deadlines without leaving Pontoon for the standalone web dashboard.

The road ahead

We hope these changes will increase the efficiency of the Mozilla localization community, and we’re happy to see that the numbers have already started to confirm that, which is great motivation for the road ahead of us. There are plenty of improvements we’d still like to make to our dashboards, ranging from smaller ones like adding word count to stats to bigger efforts like tracking community activity over time.

You’re welcome to submit your own idea or start hacking on one of the good first bugs.
